While many people feel relief after using chatbots for mental health support, they are designed to keep you coming back and can drive dependence. “You need to really be careful about what forms of other care you’re displacing,” says D&S researcher @briana-v.bsky.social. www.msn.com/en-us/health...

20.02.2026 15:46

👍 4

🔁 1

💬 1

📌 0

Feb 26: Join researchers @livgar.bsky.social & @briana-v.bsky.social, in conversation with Luca Belli, @mbogen.bsky.social & @marlynnweimd.bsky.social, as they explore ongoing research on mental health and chatbots, and how to navigate a profound shift in care. datasociety.net/events/menta...

18.02.2026 15:47

👍 7

🔁 4

💬 0

📌 1

Mental Health, Chatbots, and the Future of Care

Next week @livgar.bsky.social and I are hosting a discussion on our research into how people use LLMs for care/support—with voices across psychiatry, policy, engineering, and research in one room (rare & urgently needed). Come ask hard questions + share thoughts 💙 datasociety.net/events/menta...

16.02.2026 15:58

👍 11

🔁 4

💬 0

📌 1

A chatbot for the soul: mental health care, privacy, and intimacy in AI-based conversational agents - Communication and Change

Artificial intelligence-based conversational agents—chatbots—are increasingly integrated into telehealth platforms, employee wellness programs, and mobile applications to address structural gaps in mental health care. While these chatbots promise accessibility, they are often deployed without sufficient impact assessment or even basic user testing. This paper presents a case study using community red-teaming exercises to evaluate a chatbot designed for wellness and spirituality. Unlike traditional red-teaming, which is conducted by engineers to assess vulnerabilities, community red-teaming treats impacted users as experts, uncovering concerns related to privacy, ethics, and functionality. Our fieldwork, conducted with undergraduate beta testers (n = 28), revealed that participants were often more comfortable sharing private information with the chatbot than with a stranger. Prior experience with commercial AI systems, such as ChatGPT, contributed to this ease. However, participants also raised concerns about misinformation, inadequate guardrails for sensitive topics, data security, and dependency. Despite these risks, users remained open to the chatbot’s potential as a spiritual wellness guide. We further examine how algorithmic impact assessments (AIAs) both capture and overlook key aspects of the spiritual chatbot user experience. These chatbots offer hyper-personalized, AI-mediated divination and wellness interactions, blurring the boundaries between astrology, mental health support, and spiritual guidance. The chameleon-like nature of these technologies challenges conventional assessment frameworks, necessitating a nuanced approach that considers specific use cases, potential user bases, and indirect impacts on broader communities. We argue that AIAs for care and wellness chatbots must account for these complexities, ensuring ethical deployment and mitigating harm.

Can #AI chatbots become sources of care and intimacy? This #OpenAccess study examines #MentalHealth and spiritual chatbots, revealing how users share deeply personal information while raising questions about privacy and regulation. bit.ly/3KI5C2p @tamigraph.bsky.social @alicetiara.bsky.social

19.12.2025 17:30

👍 9

🔁 4

💬 1

📌 0

Comment to the FDA on Generative AI-Enabled Digital Mental Health Medical Devices

In our comment to the FDA, we draw on our ongoing research to focus on what people’s actual, everyday use of chatbots for mental and emotional support means for the FDA’s approach to generative AI-enabled digital mental health medical devices. datasociety.net/announcement...

09.12.2025 15:58

👍 2

🔁 4

💬 0

📌 0

In a new Points piece, D&S researchers @livgar.bsky.social and @briana-v.bsky.social reflect on what studying AI chatbots reveals about emotional attachment to machines, the power of illusion, and the norms and expectations that define care itself. datasociety.net/points/all-t...

01.12.2025 17:47

👍 13

🔁 9

💬 0

📌 0

Researchers Weigh the Use of AI for Mental Health

Chatbots weren't designed for mental health, but they are increasingly used for therapy. What are the risks and benefits?

Researching the use of chatbots for mental health support, D&S's @briana-v.bsky.social heard from many people who expected less stigma from chatbots than from therapists, “which is really interesting, given what we know about machine-learning bias,” she tells @undark.org. undark.org/2025/11/04/c...

05.11.2025 20:36

👍 4

🔁 2

💬 0

📌 0

“The perennial availability of AI is rapidly reshaping our expectations of care,” @briana-v.bsky.social and Ranjit Singh write in a new blog post about this piece. “Care is not mere output. It lives in the slow, difficult work of attunement, which no machine can truly provide.”

21.10.2025 20:08

👍 5

🔁 2

💬 0

📌 1

In tandem, Ranjit and I published a short guest piece that was released today on the BD&S blog! bigdatasoc.blogspot.com/2025/10/gues...

21.10.2025 14:39

👍 6

🔁 0

💬 0

📌 0

Are We Offloading Critical Thinking to Chatbots?

Research, much of it by companies with deep investment in AI, suggests that chatbot interactions alter how users think.

Research (including that from tech companies) suggests that users are putting too much trust in AI chatbots. D&S researcher @briana-v.bsky.social notes that some people take chatbot output at face value, without critically considering the text the algorithms produce. undark.org/2025/09/12/c...

12.09.2025 13:35

👍 13

🔁 7

💬 1

📌 3

Btw @jeffreymoro.com, @briana-v.bsky.social + I have a forthcoming special issue on Algorithms + the occult w/ @xrw.bsky.social @drs.bsky.social AX Mina @thechristinet.bsky.social @jessrauchberg.bsky.social @aketchum22.bsky.social @serife.bsky.social @emmaquilty.bsky.social Xandro Segade + more

20.07.2025 15:37

👍 16

🔁 5

💬 2

📌 2

Text on a blue and black background that shows the title of the new Points blog post: "Chatbots in Disguise: How Participants Use GenAI to Hack Qualitative Research" by Ranjit Singh, Livia Garofalo, Briana Vecchione, and Emnet Tafesse. It includes a quote: "For qualitative research, the challenge is no longer just how to collect authentic stories, but how to make sense of truths that arrive mediated, curated, and sometimes co-written by machines."

When D&S researchers Ranjit Singh, @livgar.bsky.social, @briana-v.bsky.social, and @emnetspeaks.bsky.social set out to interview people about their use of mental health chatbots, they encountered — and were fascinated by — a particular kind of AI-enabled deception. datasociety.net/points/chatb...

11.06.2025 19:10

👍 20

🔁 10

💬 2

📌 3

Excited to present "Towards AI Accountability Infrastructure: Gaps and Opportunities in AI Audit Tooling" at #CHI2025 tomorrow (today)!

🗓 Tue, 29 Apr | 9:48–10:00 AM JST (Mon, 28 Apr | 8:48–9:00 PM ET)

📍 G401 (Pacifico North 4F)

📄 dl.acm.org/doi/10.1145/...

28.04.2025 11:26

👍 22

🔁 8

💬 2

📌 2

#ResearchStudy #MentalHealth #Chatbots #ChatbotMentalHealth #ChatbotTherapy #AIMentalHealth #AITherapy #Data&Society #PaidStudy

11.04.2025 14:16

👍 2

🔁 0

💬 0

📌 0

Screening Form: Chatbots for Therapy

We are conducting a research study on how people use AI chatbots for mental health support and are looking for participants! If you regularly use an AI chatbot for emotional or mental health support, ...

🎉 Participants Needed for Paid Research!

Do you use chatbots (e.g. ChatGPT) for mental health? @datasociety.bsky.social is studying how people use them for support.

Details:

✔️ 4-week diary study

✔️ Optional 1-hr focus group

✔️ 45-min interview

💰 Earn up to $400

Learn more/sign up: bit.ly/4iCLkTd

11.04.2025 13:59

👍 6

🔁 5

💬 1

📌 0

This program fundamentally changed the course of my career, and I HIGHLY HIGHLY recommend it for eligible undergraduates! Feel free to reach out to me for any tips or questions 😊

31.03.2025 19:00

👍 2

🔁 0

💬 0

📌 0

Billionaires love recessions. Time for them to buy cheap while everyone else struggles.

11.03.2025 17:24

👍 1

🔁 0

💬 1

📌 0

Just a heads-up that our X account — where we haven't posted since November of last year — appears to have been hacked, this after two of our posts were falsely flagged as a "policy violation." Good times over there! Please note that here and LinkedIn are the only platforms we're active on.

18.02.2025 21:00

👍 62

🔁 15

💬 1

📌 0

Text on a blue background with the details of the Red-Teaming Generative AI Harm event on February 20.

On Thursday, Feb 20 @ 1 pm ET, Lama Ahmad, @camillefrancois.bsky.social, Tarleton Gillespie, @briana-v.bsky.social, and @borhane.bsky.social will examine red-teaming’s place in the evolving landscape of genAI evaluation and governance. RSVP and join us online! datasociety.net/events/red-t...

05.02.2025 19:14

👍 20

🔁 8

💬 0

📌 2

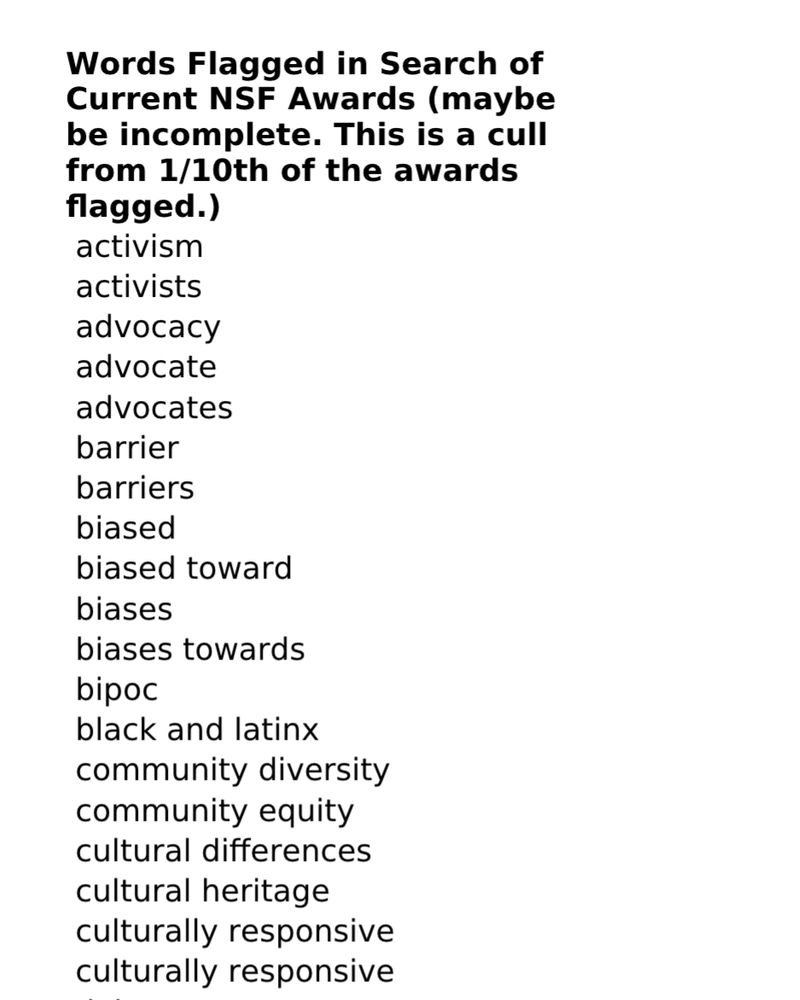

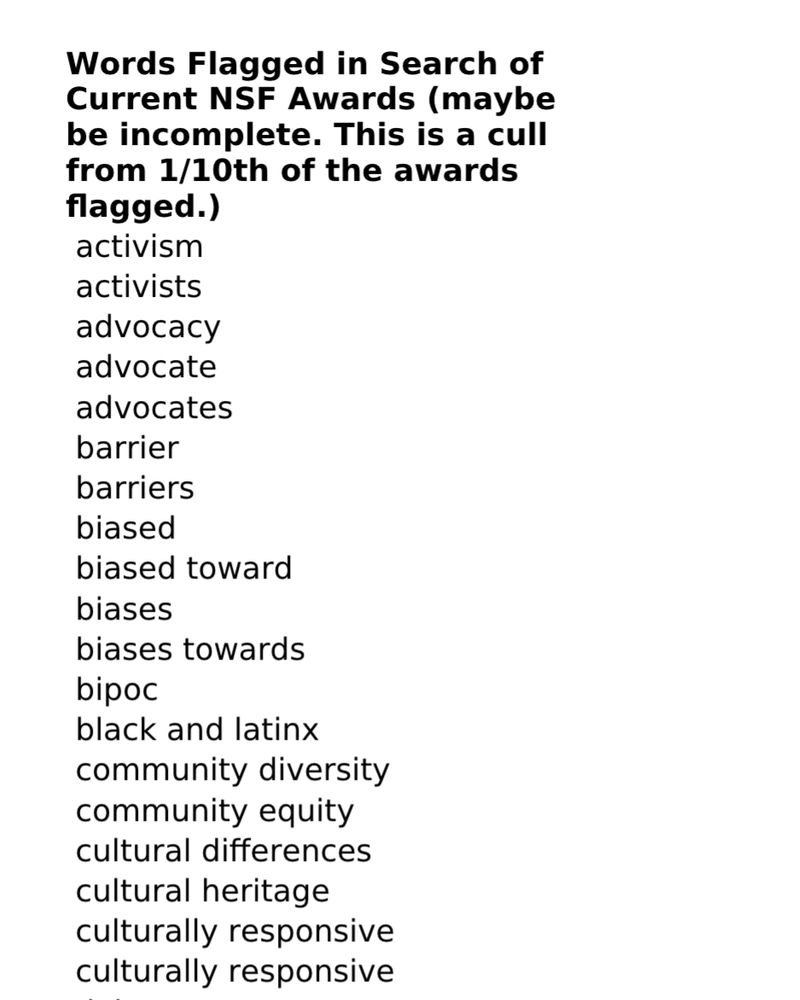

list of banned keywords

🚨BREAKING. From a program officer at the National Science Foundation, a list of keywords that can cause a grant to be pulled. I will be sharing screenshots of these keywords along with a decision tree. Please share widely. This is a crisis for academic freedom & science.

04.02.2025 01:26

👍 27848

🔁 15746

💬 1272

📌 3657

Classic lol. It’s hard to gauge popularity on any site where algorithmic gaming can skew results. In any case, Billie is great and I look forward to her continued success. I’m just tired of the music industry monopoly and the ways it's reflected on platforms that people may take at face value.

03.02.2025 21:29

👍 1

🔁 0

💬 0

📌 0

Metascore (& similar sites) favors industry-approved critics, reinforcing undue corporate influence in music. AFAIK, user scores don’t impact the metascore, and major outlets shape reception & perpetuate a top-down model of determining success rather than holistically reflecting public opinion.

03.02.2025 20:50

👍 2

🔁 0

💬 1

📌 0

The win was controversial at best. Based on streaming numbers and global listenership, Billie Eilish was the more popular artist in 2024, topping multiple international charts. Birds of a Feather was the most-streamed song on Spotify that year, and Billie has twice the monthly listeners of Beyoncé.

03.02.2025 17:12

👍 2

🔁 0

💬 1

📌 0

Accepted Open Panels

D&S researchers @livgar.bsky.social & Briana Vecchione are organizing a panel at @4sweb.bsky.social in Seattle called “Know Th/AI Self: Mental Health, Therapeutic Divination, and Chatbot Conspirituality” (see #177). Apply now: the CFP closes on Friday! www.4sonline.org/accepted_ope...

29.01.2025 15:29

👍 7

🔁 5

💬 0

📌 1