I'm excited to open the new year by sharing a new perspective paper.

I give an informal outline of molecular dynamics (MD) and how it can interact with Generative AI. Then I discuss how far the field has come since seminal contributions such as Boltzmann Generators, and what is still missing.

16.01.2026 10:25

👍 19

🔁 5

💬 1

📌 1

Measuring AI Progress in Drug Discovery - A NEW LEADERBOARD IN TOWN

2015-2025: turns out that there's hardly any improvement. AI bubble?

GPT is at 70% for this task, whereas the best methods get close to 85%.

Leaderboard: huggingface.co/spaces/ml-jk...

P: arxiv.org/abs/2511.14744

19.11.2025 06:52

👍 12

🔁 7

💬 3

📌 3

thanks!

05.10.2025 18:05

👍 1

🔁 0

💬 0

📌 0

Posting a few nice importance sampling-related finds

"Value-aware Importance Weighting for Off-policy Reinforcement Learning"

proceedings.mlr.press/v232/de-asis...

04.10.2025 16:01

👍 3

🔁 1

💬 1

📌 0

Returning soon - stay tuned!

sites.google.com/view/monte-c...

18.09.2025 18:59

👍 21

🔁 7

💬 0

📌 1

I am very happy to finally share something I have been working on, on and off, for the past year:

"The Information Dynamics of Generative Diffusion"

This paper connects entropy production, divergence of vector fields and spontaneous symmetry breaking

link: arxiv.org/abs/2508.19897

02.09.2025 16:40

👍 21

🔁 3

💬 0

📌 0

xLSTM for multivariate time series anomaly detection: arxiv.org/abs/2506.22837

“In our results, xLSTM showcases state-of-the-art accuracy, outperforming 23 popular anomaly detection baselines.”

Again, xLSTM excels in time series analysis.

01.07.2025 08:30

👍 4

🔁 2

💬 0

📌 0

New paper on the generalization of Flow Matching www.arxiv.org/abs/2506.03719

🤯 Why does flow matching generalize? Did you know that the flow matching target you're trying to learn *can only generate training points*?

w @quentinbertrand.bsky.social @annegnx.bsky.social @remiemonet.bsky.social 👇👇👇
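
A minimal sketch of the claim above, under assumptions not stated in the post: the usual linear path x_t = (1 - t) x0 + t x1, a standard Gaussian source x0, and a uniform empirical distribution over a tiny hypothetical training set. In that setting the exact target velocity has the closed form u*(x, t) = (E[x1 | x_t = x] - x) / (1 - t), where the posterior over training points is a softmax. Integrating this field with Euler steps sends every starting point onto one of the training points.

```python
import math


def posterior_mean(x, t, train):
    """E[x1 | x_t = x] for x1 uniform over `train` and x0 ~ N(0, I)."""
    sigma = 1.0 - t
    # p(x1 = p | x_t = x) is proportional to N(x; t * p, sigma^2 I).
    logits = [
        -sum((xj - t * pj) ** 2 for xj, pj in zip(x, p)) / (2.0 * sigma ** 2)
        for p in train
    ]
    top = max(logits)  # subtract the max for a numerically stable softmax
    weights = [math.exp(l - top) for l in logits]
    total = sum(weights)
    return [
        sum(w * p[j] for w, p in zip(weights, train)) / total
        for j in range(len(x))
    ]


def exact_velocity(x, t, train):
    """Closed-form flow matching target for the empirical data distribution."""
    x1_hat = posterior_mean(x, t, train)
    return [(m - xj) / (1.0 - t) for m, xj in zip(x1_hat, x)]


def integrate(x0, train, n_steps=100):
    """Euler integration of dx/dt = u*(x, t) from t = 0 to t = 1."""
    x = list(x0)
    for k in range(n_steps):
        t = k / n_steps
        v = exact_velocity(x, t, train)
        x = [xj + vj / n_steps for xj, vj in zip(x, v)]
    return x


def nearest_distance(x, train):
    """Euclidean distance from x to the closest training point."""
    return min(math.dist(x, p) for p in train)


# Hypothetical toy data: two training points and four starting points.
TRAIN = [(-1.0, 0.0), (1.0, 0.0)]
STARTS = [(0.4, 0.7), (-1.5, -0.2), (2.0, 1.0), (-0.3, 0.5)]

if __name__ == "__main__":
    for start in STARTS:
        end = integrate(start, TRAIN)
        print(start, "->", end, "distance:", nearest_distance(end, TRAIN))
```

A trained network only approximates this field, which is where the generalization question in the post comes from.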

18.06.2025 08:08

👍 55

🔁 17

💬 2

📌 3

New preprint alert 🚨

How can you guide diffusion and flow-based generative models when data is scarce but you have domain knowledge? We introduce Minimum Excess Work, a physics-inspired method for efficiently integrating sparse constraints.

Thread below 👇 https://arxiv.org/abs/2505.13375

26.05.2025 09:13

👍 26

🔁 6

💬 1

📌 0

Many recent posts on free energy. Here is a summary from my class “Statistical mechanics of learning and computation” on the many relations between free energy, KL divergence, large deviation theory, entropy, Boltzmann distribution, cumulants, Legendre duality, saddle points, fluctuation-response…
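
One of the relations listed above can be checked numerically. This is a small sketch on a hypothetical three-state system (the energies, temperature, and trial distribution are made up for illustration): with the Boltzmann distribution p ∝ exp(-E/T), free energy F = -T log Z, and variational free energy F[q] = ⟨E⟩_q - T H(q), the gap F[q] - F equals T · KL(q ‖ p), so F[q] is minimized exactly at q = p.

```python
import math


def boltzmann(energies, temperature):
    """Return the Boltzmann distribution and the partition function Z."""
    weights = [math.exp(-e / temperature) for e in energies]
    z = sum(weights)
    return [w / z for w in weights], z


def variational_free_energy(q, energies, temperature):
    """F[q] = <E>_q - T * H(q) for a discrete distribution q."""
    mean_energy = sum(qi * e for qi, e in zip(q, energies))
    entropy = -sum(qi * math.log(qi) for qi in q if qi > 0)
    return mean_energy - temperature * entropy


def kl_divergence(q, p):
    """KL(q || p) for discrete distributions on the same support."""
    return sum(qi * math.log(qi / pi) for qi, pi in zip(q, p) if qi > 0)


# Hypothetical example values, chosen only for illustration.
ENERGIES = [0.0, 1.0, 3.0]
TEMPERATURE = 0.7
Q = [0.2, 0.5, 0.3]

if __name__ == "__main__":
    p, z = boltzmann(ENERGIES, TEMPERATURE)
    free_energy = -TEMPERATURE * math.log(z)
    gap = variational_free_energy(Q, ENERGIES, TEMPERATURE) - free_energy
    print("F[q] - F      =", gap)
    print("T * KL(q || p) =", TEMPERATURE * kl_divergence(Q, p))
```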

02.05.2025 19:22

👍 63

🔁 9

💬 1

📌 0

I asked "on the other platform" what were the most important improvements to the original 2017 transformer.

That was quite popular and here is a synthesis of the responses:

28.04.2025 06:47

👍 204

🔁 43

💬 4

📌 3

Come check out SDE Matching at the #ICLR2025 workshops, a new simulation-free framework for training fully general Latent/Neural SDEs (generalisation of diffusion and bridge models).

FPI: Morning poster session

DeLTa: Afternoon poster session

#SDE #Bayes #GenAI #Diffusion #Flow

27.04.2025 23:27

👍 13

🔁 1

💬 1

📌 1

Excited to present our poster on Boltzmann priors for Implicit Transfer Operators tomorrow at @iclr-conf.bsky.social!

See you tomorrow at poster 13, 10-12:30.

24.04.2025 08:20

👍 11

🔁 5

💬 0

📌 0

1/11 Excited to present our latest work "Scalable Discrete Diffusion Samplers: Combinatorial Optimization and Statistical Physics" at #ICLR2025 on Fri 25 Apr at 10 am!

#CombinatorialOptimization #StatisticalPhysics #DiffusionModels

24.04.2025 08:57

👍 16

🔁 7

💬 1

📌 0

The Scientist Building an 'Artificial Scientist'

YouTube video by Quanta Magazine

My video interview with @quantamagazine.bsky.social about AI-designed physics experiments, AI as a Muse for new ideas in Science, and Artificial Scientists: www.youtube.com/watch?v=T_2Z...

19.03.2025 10:46

👍 18

🔁 7

💬 0

📌 2

Digital Discovery of Interferometric Gravitational Wave Detectors

AI-driven design of gravitational wave detectors uncovers approaches that surpass current plans, potentially boosting sensitivity more than tenfold.

📢 AI-discovered Gravitational Wave Detectors

published in @apsphysics.bsky.social Phys.Rev.X, with Rana Adhikari & Yehonathan Drori @ligo.org @caltech.edu @mpi-scienceoflight.bsky.social

journals.aps.org/prx/abstract...

Extremely happy to see this paper online after 3.5 years of work.

🧵1/5

14.04.2025 17:32

👍 11

🔁 1

💬 1

📌 0

We have been reworking the Quickstart guide of POT to show multiple examples of OT with the unified API, which makes it easy to access the OT value/plan/potentials. It lets you select regularization/unbalancedness/lowrank/Gaussian OT with just a few parameters. pythonot.github.io/master/auto_...

26.03.2025 07:39

👍 32

🔁 11

💬 0

📌 0

xLSTM 7B: A Recurrent LLM for Fast and Efficient Inference

Meet the fastest 7B language model out there. Based on the mLSTM!

P: arxiv.org/abs/2503.13427

18.03.2025 06:33

👍 1

🔁 2

💬 0

📌 0

Tweedie's formula is super important in diffusion models & is also one of the cornerstones of empirical Bayes methods.

Given how easy it is to derive, it's surprising how recently it was discovered ('50s). It was published a while later when Tweedie wrote Stein about it

1/n
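
For reference, the formula says that for y = x + σε with ε ~ N(0, I), the posterior mean is E[x | y] = y + σ² ∇_y log p(y), where p(y) is the marginal density of the noisy observation. Here is a rough numerical check on a toy prior chosen for illustration (x is -1 or +1 with equal probability, not an example from the thread). In that case the exact posterior mean is tanh(y/σ²), and the score is approximated by a finite difference.

```python
import math


def log_marginal(y, sigma):
    """log p(y) for y = x + sigma * eps, with x = -1 or +1 equally likely."""
    def normal_pdf(z):
        return math.exp(-0.5 * (z / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))
    return math.log(0.5 * normal_pdf(y - 1.0) + 0.5 * normal_pdf(y + 1.0))


def score(y, sigma, h=1e-5):
    """Central finite-difference estimate of d/dy log p(y)."""
    return (log_marginal(y + h, sigma) - log_marginal(y - h, sigma)) / (2.0 * h)


def tweedie_posterior_mean(y, sigma):
    """Tweedie's formula: E[x | y] = y + sigma^2 * score(y)."""
    return y + sigma ** 2 * score(y, sigma)


def exact_posterior_mean(y, sigma):
    """Closed-form E[x | y] for the two-point prior used in this sketch."""
    return math.tanh(y / sigma ** 2)


if __name__ == "__main__":
    sigma = 0.5
    for y in (-2.0, -0.3, 0.0, 0.7, 1.5):
        print(y, tweedie_posterior_mean(y, sigma), exact_posterior_mean(y, sigma))
```

In a diffusion model the learned score network plays the role of `score`, which is why the same identity turns a score estimate into a denoiser.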

18.03.2025 06:12

👍 65

🔁 14

💬 1

📌 0

Opportunity to work with @hochreitersepp.bsky.social, @jobrandstetter.bsky.social, and me!!

We have many open positions in machine learning, deep learning, LLMs!! Both for PostDocs and PhDs!

Join us!

14.03.2025 12:31

👍 2

🔁 3

💬 0

📌 0

I shared a controversial take the other day at an event and I decided to write it down in a longer format: I’m afraid AI won't give us a "compressed 21st century"

Here: thomwolf.io/blog/scienti...

It's an extension of this interview discussion from the AI summit: youtu.be/AxBd3G0lFLs?...

06.03.2025 13:03

👍 133

🔁 34

💬 11

📌 12

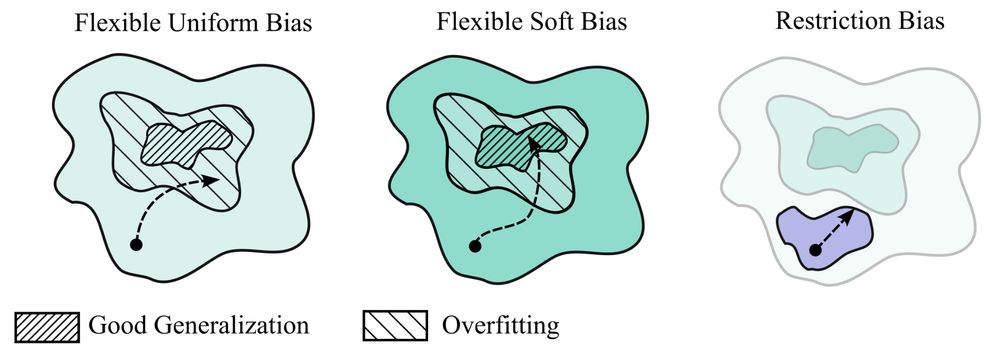

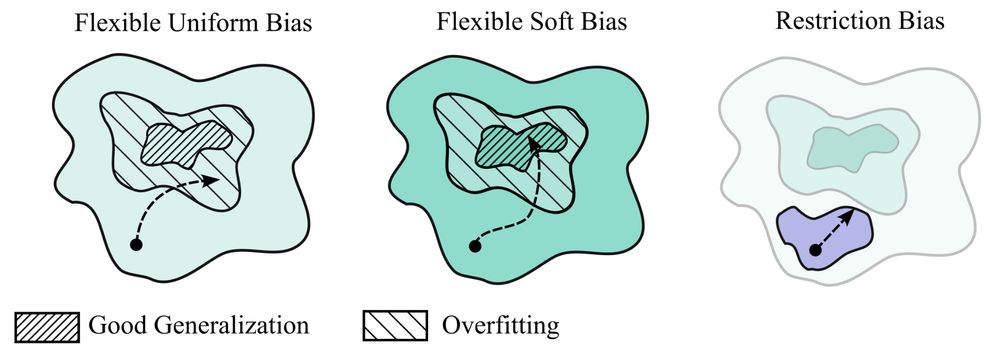

My new paper "Deep Learning is Not So Mysterious or Different": arxiv.org/abs/2503.02113. Generalization behaviours in deep learning can be intuitively understood through a notion of soft inductive biases, and formally characterized with countable hypothesis bounds! 1/12

05.03.2025 15:37

👍 210

🔁 49

💬 6

📌 9

Thanks @zlatko-minev.bsky.social and hello bluesky world!

04.03.2025 15:47

👍 13

🔁 3

💬 0

📌 0

Luca (Martino) once told me (when I said "MCMC does not have weights") that this is incorrect (in his Sicilian style): When you reject in MCMC, you increase the weight of the current sample. Chains do have replicates and can be written as a weighted sample. High rejection rate *is* weight degeneracy.
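
A minimal sketch of this view, assuming a plain random-walk Metropolis sampler on a standard normal target (both are illustrative choices, not from the conversation). The chain is stored as distinct states with integer weights: an acceptance opens a new state with weight 1, and a rejection adds 1 to the weight of the current state. The Kish effective sample size of those weights then drops when the rejection rate is high. Note that this ESS looks only at the weights and ignores autocorrelation between distinct states.

```python
import math
import random


def metropolis_weighted(log_target, x0, n_steps, step_size, rng):
    """Random-walk Metropolis returned as (states, weights).

    A rejected proposal does not append a copy of the current state;
    it increments that state's weight instead.
    """
    states, weights = [x0], [1]
    x, logp = x0, log_target(x0)
    for _ in range(n_steps - 1):
        proposal = x + rng.gauss(0.0, step_size)
        logp_prop = log_target(proposal)
        accept_prob = math.exp(min(0.0, logp_prop - logp))
        if rng.random() < accept_prob:
            x, logp = proposal, logp_prop
            states.append(x)
            weights.append(1)
        else:
            weights[-1] += 1
    return states, weights


def weighted_mean(states, weights):
    """Mean of the chain computed from the compressed weighted form."""
    return sum(s * w for s, w in zip(states, weights)) / sum(weights)


def kish_ess(weights):
    """Kish effective sample size: (sum w)^2 / sum w^2."""
    return sum(weights) ** 2 / sum(w * w for w in weights)


def log_std_normal(x):
    """Unnormalized log density of N(0, 1)."""
    return -0.5 * x * x


if __name__ == "__main__":
    for step in (1.0, 50.0):
        s, w = metropolis_weighted(log_std_normal, 0.0, 5000, step, random.Random(0))
        print("step", step, "distinct states", len(s), "Kish ESS", kish_ess(w))
```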

28.02.2025 11:29

👍 4

🔁 1

💬 1

📌 0

Excited to share our work with friends from MIT/Google on Learned Asynchronous Decoding! LLM responses often contain chunks of tokens that are semantically independent. What if we can train LLMs to identify such chunks and decode them in parallel, thereby speeding up inference? 1/N

27.02.2025 00:38

👍 16

🔁 9

💬 1

📌 1

Excited about our progress in characterizing The Computational Advantage of Depth in Learning with Neural Networks. Check out the number of samples that can be saved when GD runs on a multi-layer rather than on a two-layer neural network. arxiv.org/pdf/2502.13961

22.02.2025 14:23

👍 27

🔁 4

💬 1

📌 1

📢PSA: #NeurIPS2024 recordings are now publicly available!

The workshops always have tons of interesting things on at once, so the FOMO is real 😵‍💫 Luckily it's all recorded, so I've been catching up on what I missed.

Thread below with some personal highlights🧵

22.01.2025 21:06

👍 128

🔁 33

💬 1

📌 1