It’s also my honor to have economists from @stanforddel.bsky.social join this project. As headlines call 2025 the year of agents, we believe AI agent development is not solely a technical matter. Thanks Humishka, Yucheng, Jiaxin, David, @erikbryn.bsky.social and @diyiyang.bsky.social!

12.06.2025 16:41

👍 1

🔁 0

💬 0

📌 0

This project would not have been possible without the thoughtful participation of the 1,500+ domain workers. Many of those we contacted cold on LinkedIn thanked us for amplifying their voices—but truly, the honor is ours.

12.06.2025 16:39

👍 1

🔁 0

💬 1

📌 0

Mapping tasks to skills, and comparing currently high-paid skills with required human agency as AI agents enter the workforce, we see that core human strengths move from data processing toward interpersonal and organizational skills.

Read our blog post: futureofwork.saltlab.stanford.edu

12.06.2025 16:39

👍 1

🔁 0

💬 1

📌 0

The study also reveals insights on the future of HUMAN work.

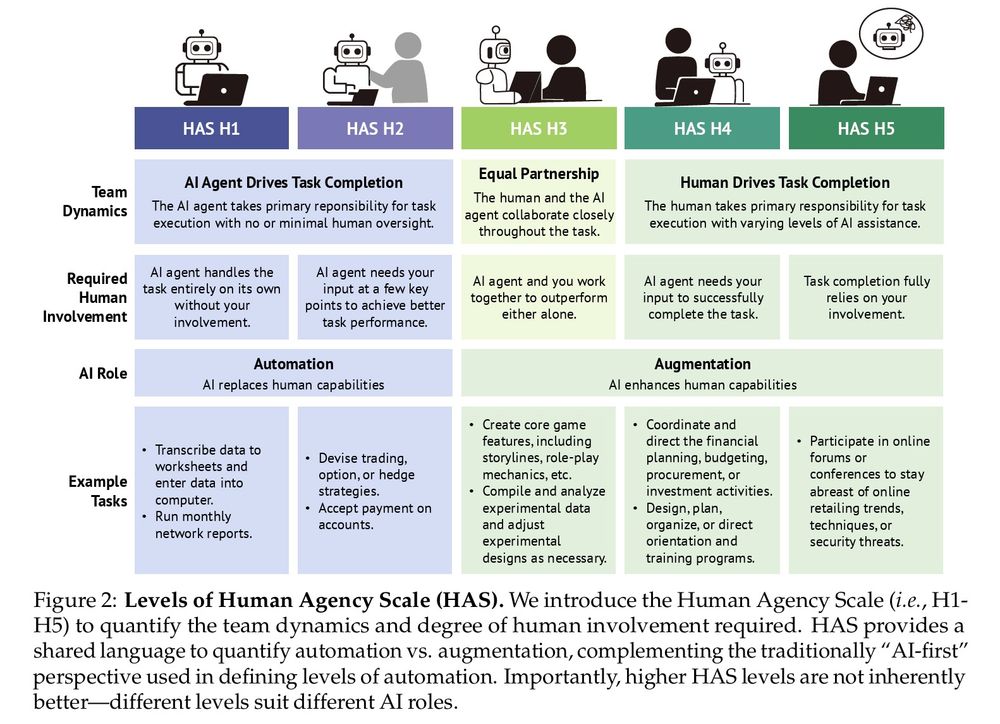

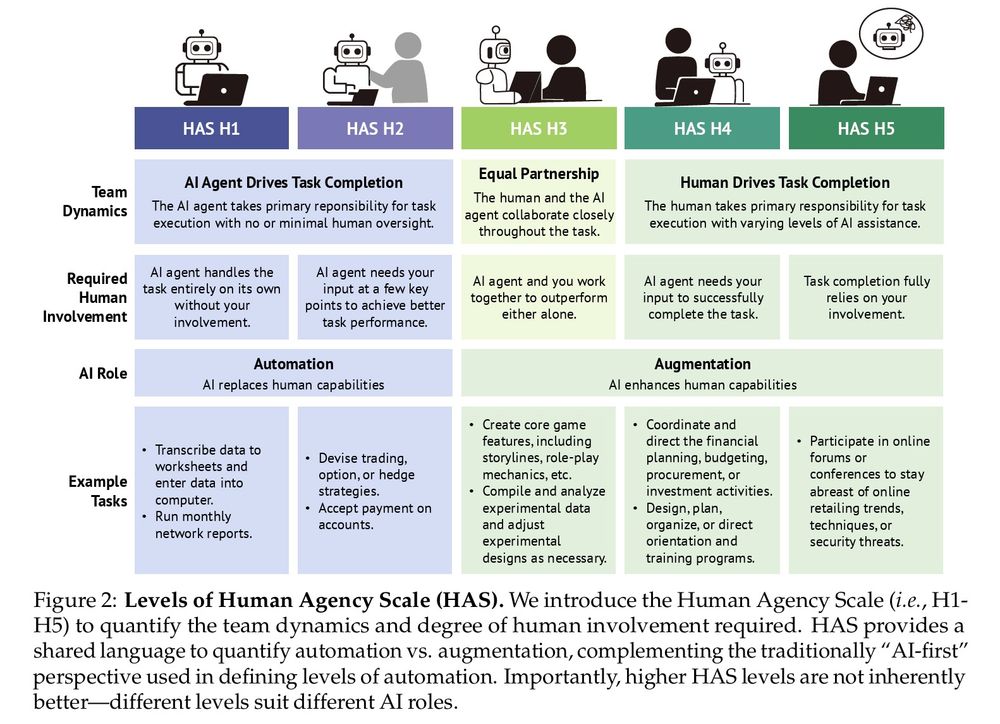

Mapping the Human Agency Scale across jobs shows which roles AI can’t replace. Currently, Mathematicians and Aerospace Engineers are the only occupations where most AI expert ratings fall into H5 (Human Involvement Essential).

12.06.2025 16:39

👍 0

🔁 0

💬 1

📌 0

Despite the buzz around "AI software engineers," "AI journalists," etc., our Human Agency Scale uncovers task-level nuances within every occupation.

We suggest that AI agent R&D and products account for them for more responsible, higher-quality adoption.

12.06.2025 16:36

👍 0

🔁 0

💬 1

📌 0

Workers generally prefer higher levels of human agency, hinting at friction as AI capabilities advance.

From transcript analysis, the top collaboration model envisioned by workers is “role-based” AI support (23.1%) - utilizing AI systems that embody specific roles.

12.06.2025 16:35

👍 0

🔁 0

💬 1

📌 0

The impact of AI agents on work isn’t just a binary “automate or not.”

We introduce the Human Agency Scale: a 5-level scale to capture the spectrum between automation and augmentation--where technology complements and enhances human capabilities.

12.06.2025 16:35

👍 2

🔁 1

💬 1

📌 0

Jointly considering worker desire and technological capability allows us to classify tasks into four zones to guide AI agent deployment and development.

Alarmingly, 41.0% of YC companies are mapped to the Low Priority and Automation “Red Light” Zones.
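
To make the four-zone idea concrete, here is a minimal sketch of how a task could be placed in a zone from two ratings. This is an illustration under stated assumptions, not the paper's actual procedure: the function name `classify_task`, the 1-to-5 rating scale for both inputs, and the cutoff of 3.0 are all hypothetical. Only the Low Priority and Automation "Red Light" zones are named in this thread; the "Green Light" and "R&D Opportunity" labels are our reading of the paper and should be checked against it.

```python
from enum import Enum


class Zone(Enum):
    # Two of these labels appear in the thread; the other two are assumed.
    GREEN_LIGHT = "Automation 'Green Light' Zone"   # assumed label
    RED_LIGHT = "Automation 'Red Light' Zone"
    RD_OPPORTUNITY = "R&D Opportunity Zone"         # assumed label
    LOW_PRIORITY = "Low Priority Zone"


def classify_task(worker_desire: float, ai_capability: float,
                  threshold: float = 3.0) -> Zone:
    """Place a task in one of four zones.

    Both ratings are assumed to be on a 1-5 scale, and `threshold`
    is a hypothetical cutoff, not a value taken from the paper.
    """
    wants_automation = worker_desire > threshold
    ai_is_capable = ai_capability > threshold

    if wants_automation and ai_is_capable:
        return Zone.GREEN_LIGHT
    if ai_is_capable:
        # Technology is ready, but workers do not want the task automated.
        return Zone.RED_LIGHT
    if wants_automation:
        # Workers want help, but the technology is not there yet.
        return Zone.RD_OPPORTUNITY
    return Zone.LOW_PRIORITY


if __name__ == "__main__":
    print(classify_task(worker_desire=2.0, ai_capability=4.5).value)
```

With these assumptions, a task that workers rate low for automation but that AI experts rate as highly feasible lands in the "Red Light" zone.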

12.06.2025 16:35

👍 0

🔁 0

💬 1

📌 0

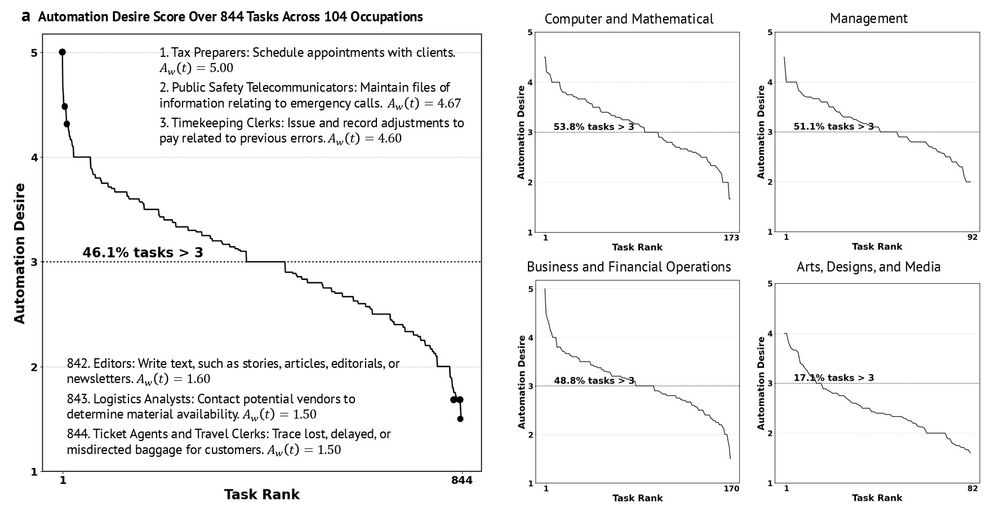

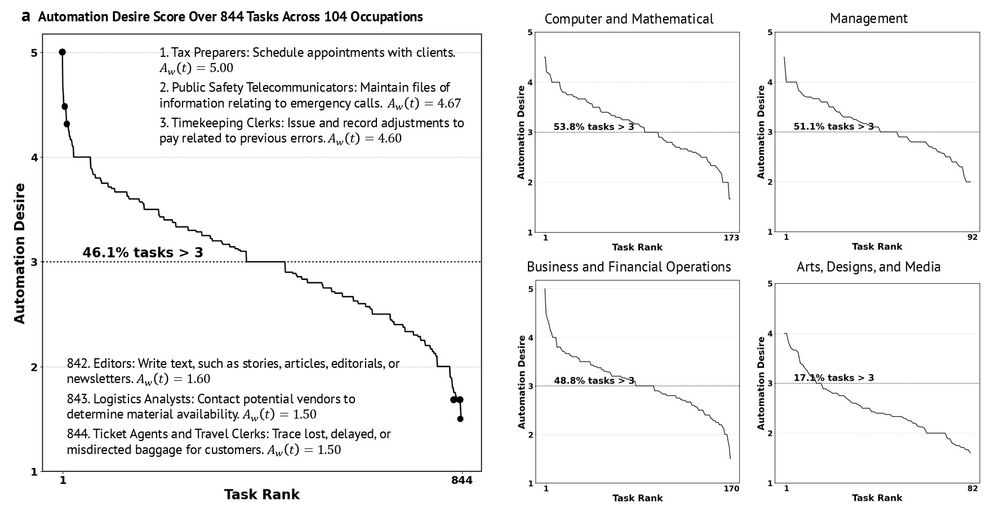

We rank tasks by worker desire for automation. 46.1% of tasks receive a positive attitude (>3/5), with notable variation across sectors.

Transcript analysis reveals top concerns: (1) lack of trust (45%), (2) fear of job replacement (23%), (3) loss of human touch (16.3%)

12.06.2025 16:34

👍 0

🔁 0

💬 1

📌 0

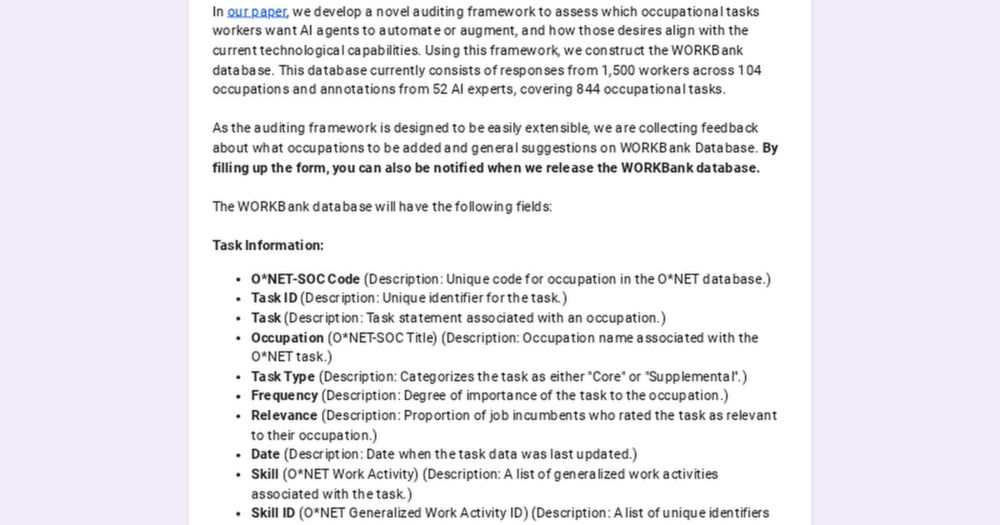

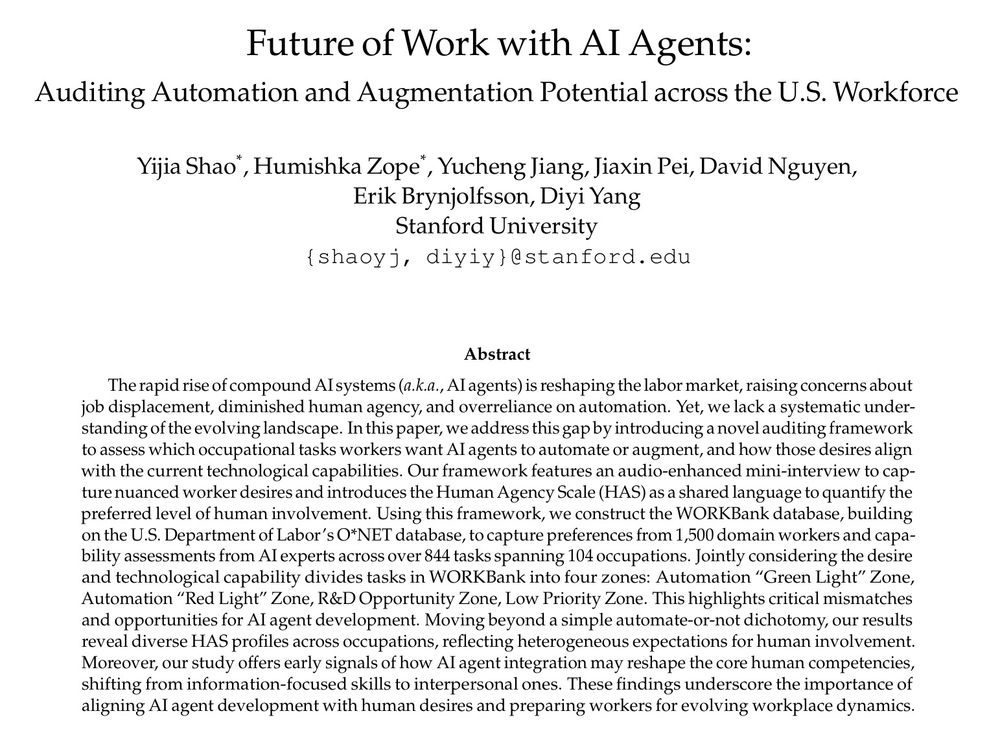

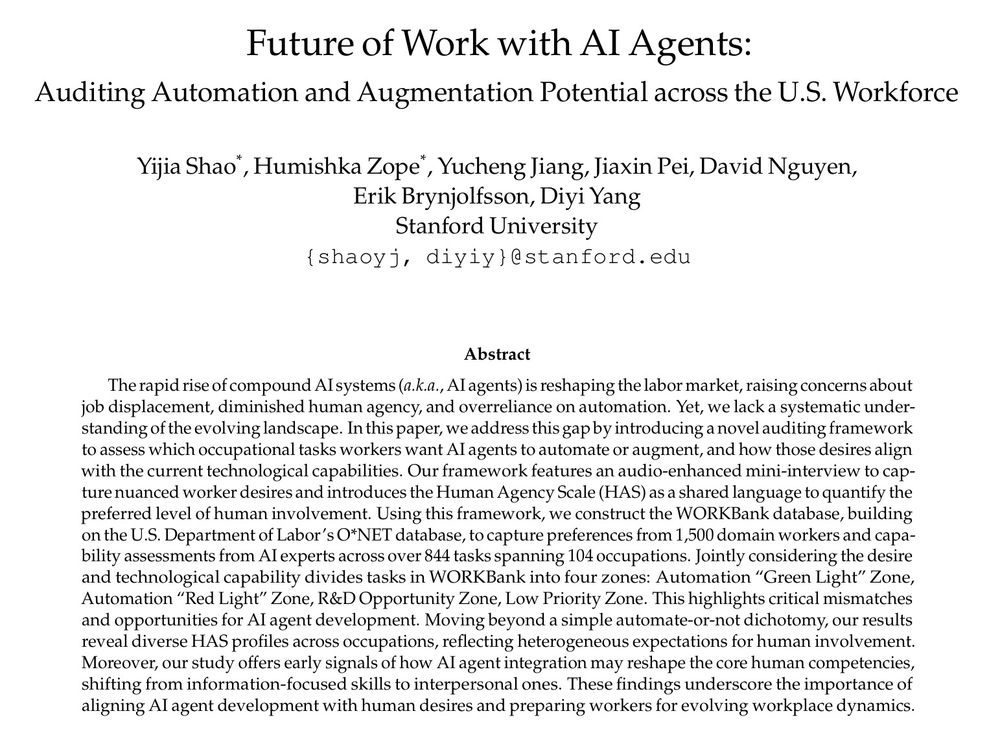

In our new paper: arxiv.org/abs/2506.06576

We collaborate with economists to develop an audio-enhanced auditing framework.

- 1500 domain workers from 104 occupations shared their desires.

- 52 AI agent researchers & developers evaluated today’s technological capabilities.

12.06.2025 16:34

👍 1

🔁 0

💬 1

📌 0

🚨 70 million US workers are about to face their biggest workplace transition due to AI agents. But nobody’s asking them what they want.

While AI R&D races to automate everything, we took a different approach: auditing what workers want vs. what AI can deliver across the US workforce.🧵

12.06.2025 16:33

👍 22

🔁 7

💬 1

📌 0

We are getting closer to having agents operate in the real physical world. However, can we trust frontier models to make embodied decisions 🎮 aligned with human norms 👩⚖️ ?

With EgoNormia, a 1.8k ego-centric video 🥽 QA benchmark, we show that this is surprisingly challenging!

04.03.2025 04:32

👍 22

🔁 9

💬 1

📌 1

Hi, I found your work very interesting and hope to have a chance to reach out. Is there a way to contact you? I tried DMs on this site and Reddit, but both failed. Thank you so much for your consideration!

14.02.2025 20:51

👍 0

🔁 0

💬 0

📌 0

Thanks Vinay, Yucheng, John & @diyiyang.bsky.social for the amazing collaboration, and to all the friends—met or yet to be met—who shared suggestions for the platform release!

The release wouldn't have been possible without the generous support from US Navy Research, NSF, Google, and Microsoft Azure!

12.02.2025 19:27

👍 1

🔁 0

💬 0

📌 0

Try it out today at cogym.saltlab.stanford.edu!

Read our preprint to learn more details: arxiv.org/abs/2412.15701

12.02.2025 19:25

👍 1

🔁 0

💬 1

📌 0

We welcome contributions of new task environments and agents.

Contributed agents will be deployed on our platform to study their interaction dynamics with real users. A great chance to distribute your agent in the wild!

12.02.2025 19:25

👍 1

🔁 0

💬 1

📌 0

🎉 For the first time ever: Collaborate with AI agents in real time! Collaborative Gym UI is now IRB-approved and live at cogym.saltlab.stanford.edu!

A group of agents is eager to work with you. By providing feedback, you will see the agent's identity and its feedback to you!

12.02.2025 19:24

👍 2

🔁 0

💬 1

📌 0

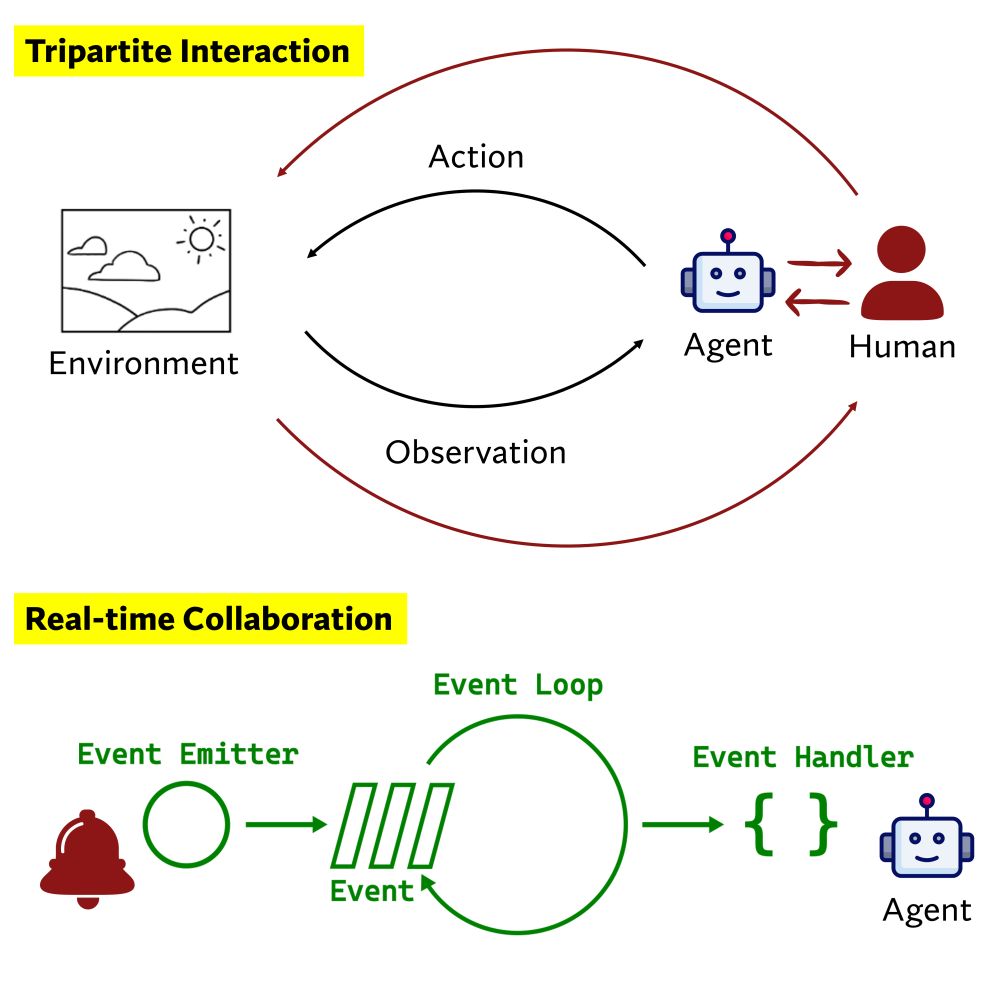

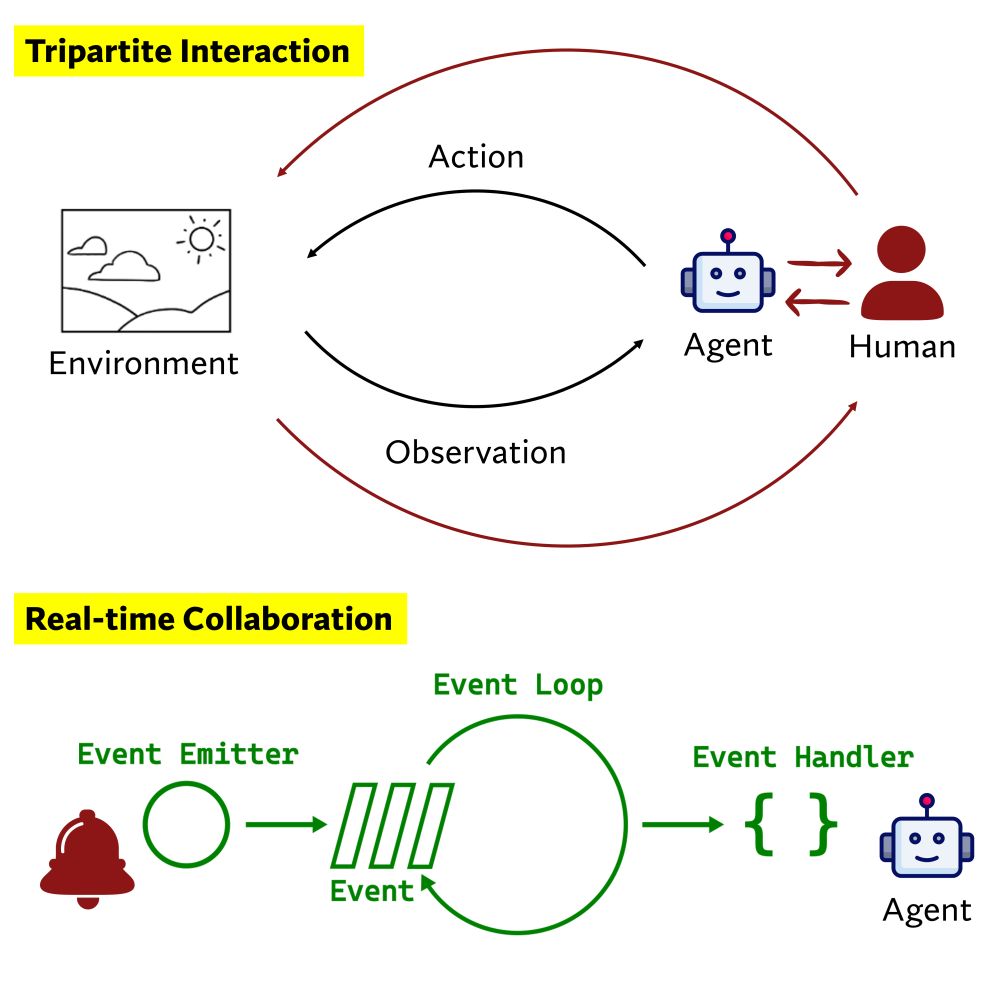

LM agents today primarily aim to automate tasks. Can we turn them into collaborative teammates? 🤖➕👤

Introducing Collaborative Gym (Co-Gym), a framework for enabling & evaluating human-agent collaboration! I have gotten used to agents proactively seeking my confirmation or my deeper thinking. (🧵 with video)

17.01.2025 17:44

👍 22

🔁 10

💬 1

📌 1

Hands-on Experience with Devin: Reflections from a Person Building and Evaluating Agentic Systems

Why I’m interested in making agentic systems collaborative.

Hi @narphorium.bsky.social, thank you! I can finally reply to you: our team wanted to first check whether the taxonomy can be used to examine other agentic systems (e.g., coding agents). It's indeed very useful. You can check out my recent blog post if interested: cs.stanford.edu/people/shaoy...

26.01.2025 23:26

👍 2

🔁 1

💬 1

📌 0

[8/8] To me, Co-Gym stems from my SoP on building human-centered agentic systems 2 years ago. I am excited to see how agents could work with us and the demands this poses for advancing model intelligence!

Thank you Vinay, Yucheng, John & @diyiyang.bsky.social for the amazing collaboration!

17.01.2025 17:50

👍 1

🔁 0

💬 0

📌 0

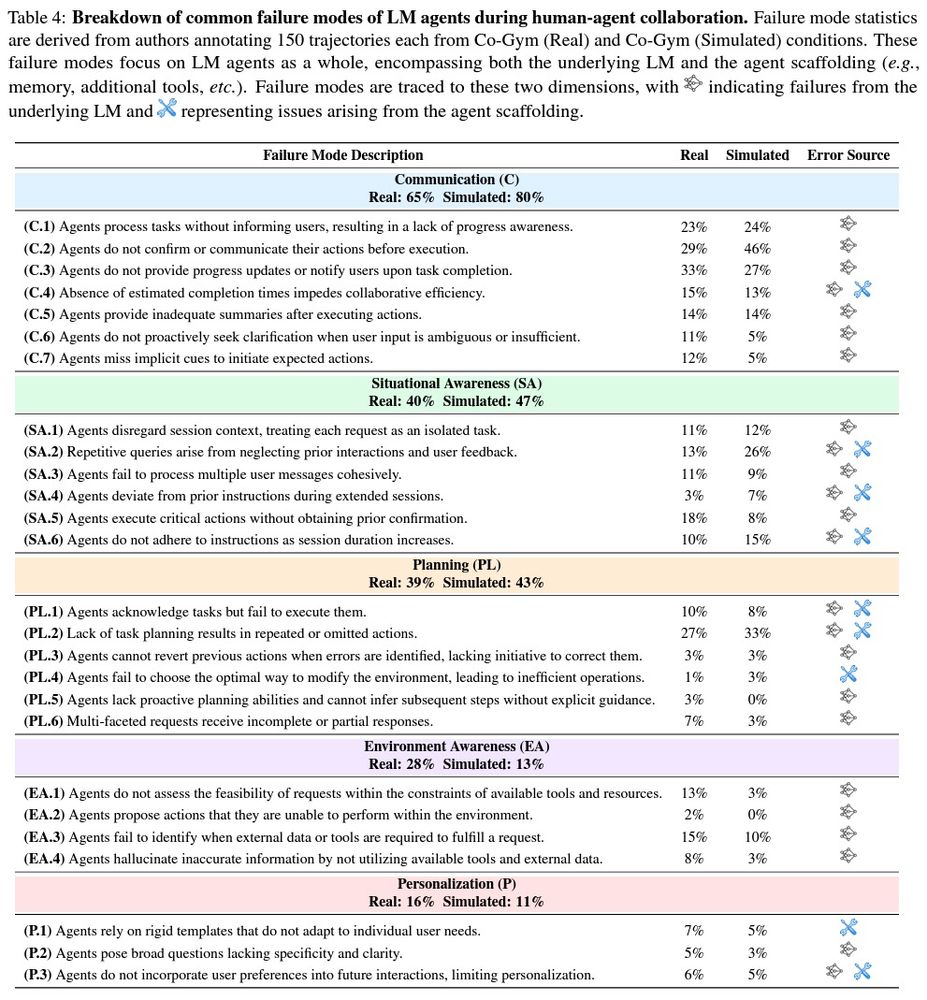

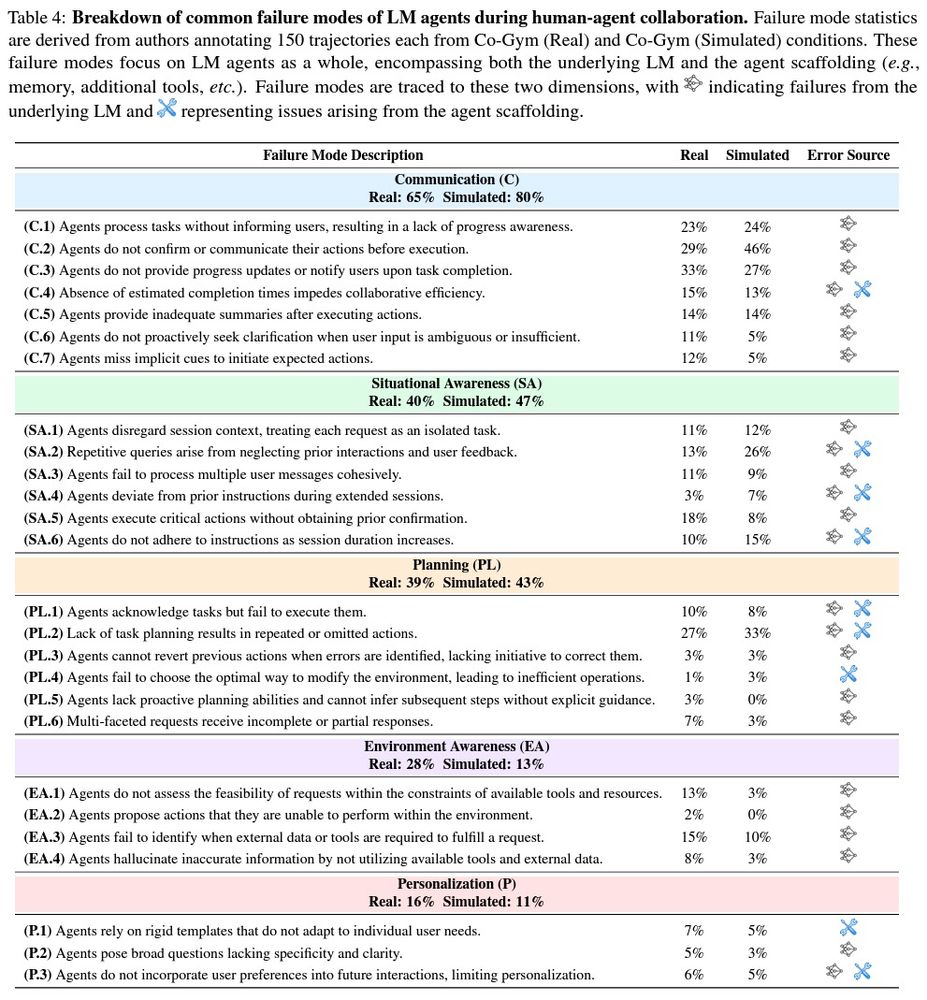

[6/8] We conducted a detailed error analysis by having authors annotate 300 trajectories. Collaborative agents expose significant limitations in current LMs and agent scaffoldings, with communication and situational awareness failures occurring in 65% and 40% of real trajectories, respectively.

17.01.2025 17:48

👍 3

🔁 0

💬 1

📌 1

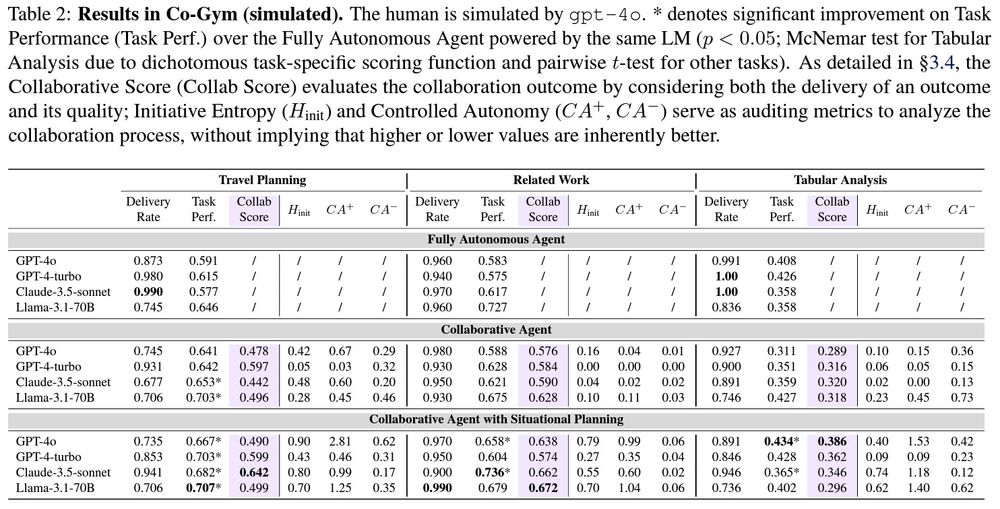

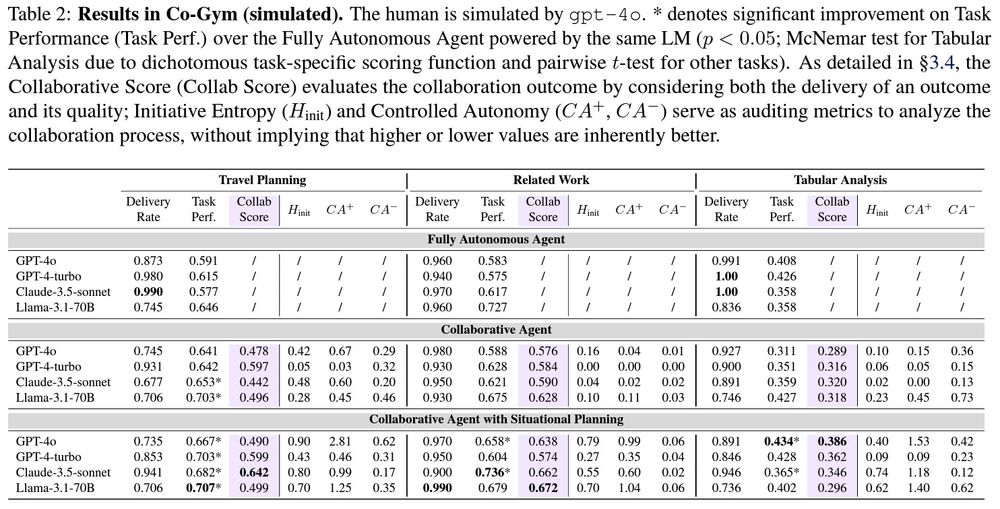

[5/8] We built a user simulator and web UI to instantiate Co-Gym in simulated and real settings.

Experiments reveal human-like patterns: collaborative inertia, where poor communication hinders delivery; and collaborative advantage, where human-agent teams outperform autonomous agents.

17.01.2025 17:48

👍 1

🔁 0

💬 1

📌 0

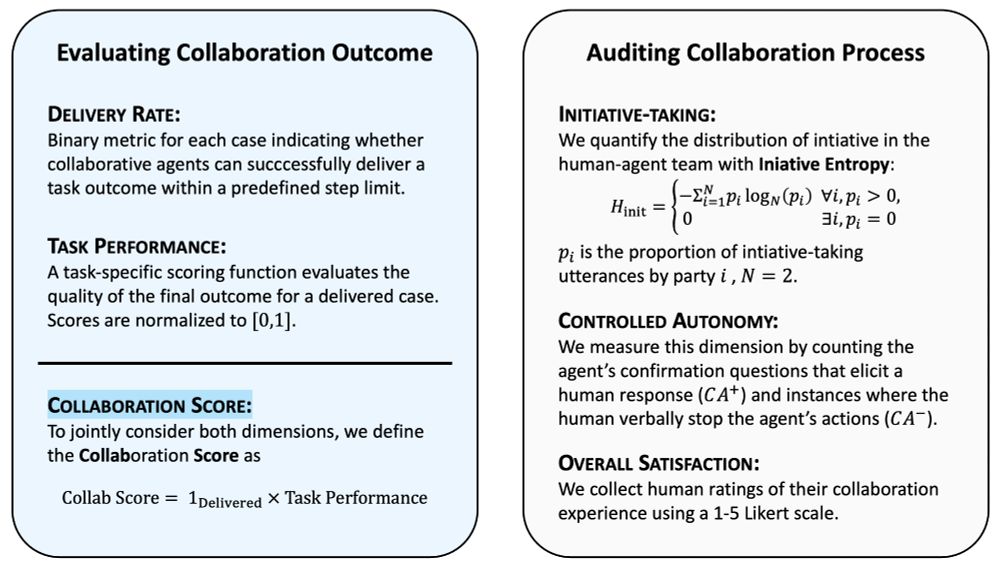

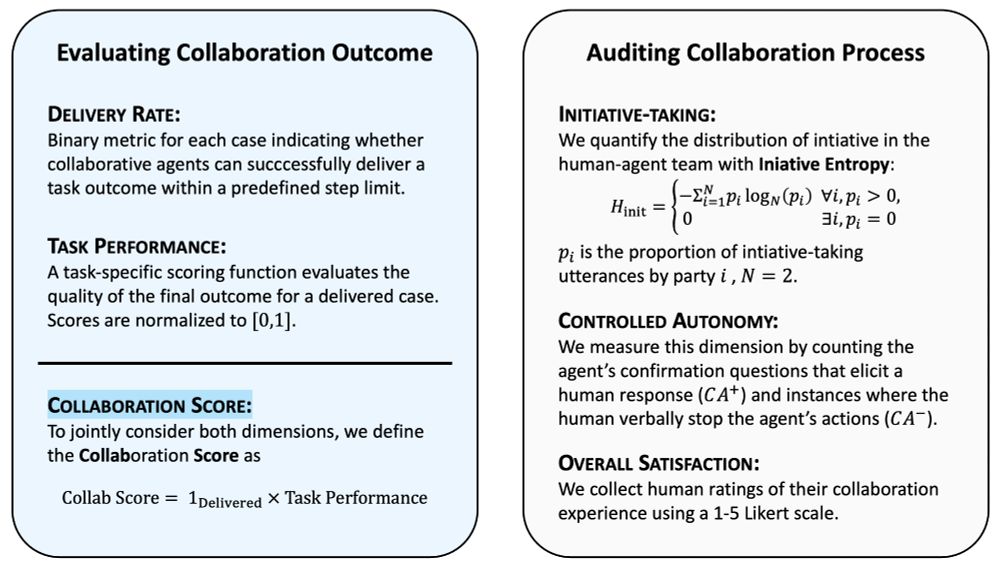

[4/8] Our vision builds on a long-standing dream in AI: to develop machines that act as teammates, not mere tools.

This demands situational intelligence to take initiative, communicate, and adapt. Co-Gym offers an evaluation framework that assesses both collab outcomes and processes.

17.01.2025 17:47

👍 2

🔁 0

💬 1

📌 0