We have now updated these numbers! Read more here:

x.com/Jsevillamol/...

For more details on our analysis, check out our latest data insight by Isabel Juniewicz: epoch.ai/data-insigh...

Our new data insight focuses on PP&E, allowing us to break down physical asset acquisitions into finer categories. This follows our prior work on capex, a related but distinct metric, finding that it has been growing rapidly for hyperscalers like Microsoft. epoch.ai/data-insigh...

Microsoft added $68B in physical assets in the second half of 2025 — almost as much as the entire prior fiscal year.

57% was IT equipment (GPUs, servers). 39% was buildings, dominated by data centers.

Check out our website for more results and commentary about FrontierMath overall!

epoch.ai/frontiermath

We also evaluated GPT-5.4 Pro on FrontierMath: Open Problems. It did not solve any problems. It made some novel observations on one problem, but of a form that the author had anticipated and characterized as relatively uninteresting. More here:

epoch.ai/frontiermat...

Across all runs to date, 42% (20/48) of the Tier 4 problems have now been solved at least once.

We ran GPT-5.4 (xhigh) an additional ten times on Tier 4 to get a pass@10 score. This was 38%. In one of these runs, it solved another problem no model had solved before. This problem was by Bartosz Naskręcki.
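A minimal sketch of how a pass@10 figure like this could be computed, assuming it means the fraction of problems solved in at least one of the ten runs (the post does not spell out the definition). The function name `pass_at_k` and the toy data are hypothetical, not Epoch's code or results.

```python
from typing import Sequence


def pass_at_k(results: Sequence[Sequence[bool]]) -> float:
    """Fraction of problems solved in at least one run.

    results[r][p] is True if run r solved problem p.
    """
    if not results:
        raise ValueError("need at least one run")
    n_problems = len(results[0])
    if any(len(run) != n_problems for run in results):
        raise ValueError("every run must cover the same problems")
    # A problem counts as solved if any single run solved it.
    solved_any = [any(run[p] for run in results) for p in range(n_problems)]
    return sum(solved_any) / n_problems


# Toy data (hypothetical): 3 runs over 4 problems.
toy_runs = [
    [True, False, False, False],
    [False, False, True, False],
    [True, False, False, False],
]
toy_score = pass_at_k(toy_runs)  # problems 0 and 2 are each solved at least once
```

With ten runs over the 48 Tier 4 problems, the same function would take a 10 x 48 grid of outcomes.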

GPT-5.4 Pro solved one Tier 4 problem that no model had solved before. In a preliminary analysis, it appeared to have found a preprint from 2011 which let it shortcut much of the intended work. The problem author was unaware of this preprint.

On Tiers 1–3 GPT-5.4 Pro solved 52% of the non-held-out set and 42% of the held-out set. On Tier 4, GPT-5.4 Pro solved 25% of the non-held-out set and 55% of the held-out set. Neither of these differences is statistically significant.
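As a rough illustration of the Tier 4 comparison, here is a sketch of a two-sided Fisher exact test. The counts are inferred, not reported: 25% of the 28 shared problems is 7 solved, and 55% of the remaining 20 held-out problems is 11 solved. The choice of test is also an assumption; the post does not say which test Epoch used.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2 = a + b, c + d
    col1 = a + c
    denom = comb(row1 + row2, col1)

    def prob(x: int) -> float:
        # Hypergeometric probability that the top-left cell equals x,
        # holding the row and column totals fixed.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    p_observed = prob(a)
    # Sum the probability of every table at least as unlikely as the observed one.
    return sum(
        p
        for p in (prob(x) for x in range(lowest, highest + 1))
        if p <= p_observed * (1 + 1e-9)
    )


# Rows: non-held-out (28 problems), held-out (20 problems).
# Columns: solved, unsolved. Counts are inferred from the reported percentages.
p_value = fisher_exact_two_sided(7, 21, 11, 9)
```

Under these assumptions the p-value lands above the conventional 0.05 threshold, in line with the statement that the difference is not statistically significant.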

FrontierMath was funded by OpenAI, which has exclusive access to: all 290 problems in Tiers 1–3; solutions to 237 of these problems; 28 of the 48 problems in Tier 4; solutions to these 28 problems. Epoch holds out the rest.

GPT-5.4 set a new record on FrontierMath, our benchmark of extremely challenging math problems! We had pre-release access to evaluate the model. On Tiers 1–3, GPT-5.4 Pro scored 50%. On Tier 4 it scored 38%.
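The headline scores can be cross-checked against the held-out and non-held-out figures reported elsewhere in this thread. The sketch below pools the subset solve rates by problem count. It assumes that the non-held-out set for Tiers 1–3 is the 237 problems whose solutions OpenAI can access (leaving 53 held out), and that the Tier 4 split is 28 shared and 20 held out. The helper `pooled_rate` is hypothetical.

```python
def pooled_rate(groups):
    """Pool (solve_rate, n_problems) pairs into one overall solve rate."""
    solved = sum(rate * n for rate, n in groups)
    total = sum(n for _, n in groups)
    return solved / total


# Tiers 1-3: 290 problems. ASSUMPTION: 237 non-held-out, 53 held out.
tiers_1_3 = pooled_rate([(0.52, 237), (0.42, 290 - 237)])

# Tier 4: 48 problems, 28 shared with OpenAI and 20 held out.
tier_4 = pooled_rate([(0.25, 28), (0.55, 48 - 28)])

print(f"Tiers 1-3 pooled: {tiers_1_3:.1%}")
print(f"Tier 4 pooled:    {tier_4:.1%}")
```

Under these assumptions the pooled rates round to the 50% and 38% quoted above, which suggests the split figures and the headline scores are consistent.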

See thread for commentary and additional experiments.

Check out our website for more data on AI company finances!

epoch.ai/data/ai-com...

OpenAI’s recent funding round nearly triples the amount they have raised so far. The Information has reported that OpenAI projects a $157B cash burn through 2028. This round, combined with $40B cash on hand, essentially matches that projection.

These roles are fully remote and we can hire in many countries, although we prefer significant overlap with North American timezones. Applications are rolling, so apply soon! epoch.ai/careers

For both roles, we’re looking for candidates who are up-to-date with AI trends, adept at managing multiple detailed workstreams, and excited about contributing to our mission: improving our understanding of the future of AI.

Our team is expanding! We’re hiring for a Researcher to work with our Senior Researcher, JS Denain, on pitching and developing new projects, and a Special Projects Associate to provide operational support for making great new benchmarks.

For more details on this analysis, see our website: epoch.ai/data-insigh...

Each company defines "capex" differently on earnings calls. Some include finance leases; some don't. So to build a consistent measure, we went directly to companies' financial filings and identified cash spending and new finance leases using standardized regulatory tags.

Company statements and analyst projections also anticipate continued rapid growth in capital expenditures in 2026, though slower than this trend extrapolation implies.

Driven by investments in AI, hyperscaler capital expenditures have grown 70% per year since the release of GPT-4, nearing half a trillion dollars in total during 2025.

If this trend continues, Alphabet, Amazon, Meta, Microsoft and Oracle will spend $770 billion on capex in 2026.
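A small sketch of the arithmetic behind this extrapolation, assuming simple annual compounding at 70% (the actual trend fit may be computed differently). The 2025 base is back-calculated from the $770 billion figure, not taken from the source.

```python
def extrapolate(base: float, annual_growth: float, years: int) -> float:
    """Compound `base` forward by `annual_growth` per year for `years` years."""
    return base * (1 + annual_growth) ** years


GROWTH = 0.70           # 70% per year, from the post
PROJECTED_2026 = 770.0  # billions of dollars, from the post

# Back-calculated 2025 total implied by the projection (not a source figure).
implied_2025 = PROJECTED_2026 / (1 + GROWTH)

# Compounding the implied base forward one year recovers the projection.
check_2026 = extrapolate(implied_2025, GROWTH, 1)

print(f"Implied 2025 capex: ${implied_2025:.0f}B")
print(f"Extrapolated 2026 capex: ${check_2026:.0f}B")
```

The implied 2025 base comes out in the mid-$400 billions, which fits the description of 2025 spending as nearing half a trillion dollars.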

This week's Gradient Update was written by Anson Ho.

All Gradient Updates are informal and opinionated analyses that represent the views of individual authors, not Epoch AI as a whole.

You can read the full post here: epoch.ai/gradient-up...

The implications could be bigger still. In scenarios like AI 2027 and Situational Awareness, automating AI R&D hugely accelerates software progress and hence capability growth.

The big open question is whether this acceleration can actually happen, which we discuss here:

bsky.app/profile/epo...

This has huge implications. For instance, it explains how DeepSeek caught up with o1 with less compute in a matter of months. The specific rate of AI software progress might even shift your AGI timelines by over a decade!

www.astralcodexten.com/p/what-happ...

In fact, AI software progress seems to be extremely fast. Each year, you need several times less training compute to reach the same level of capabilities:

This improvement also lets researchers push AI capabilities further with the same training compute. So if software progress is fast enough, we could reach much greater capabilities without scaling up training compute.

That’s why GPT-5 outperformed GPT-4.5 with less training compute.

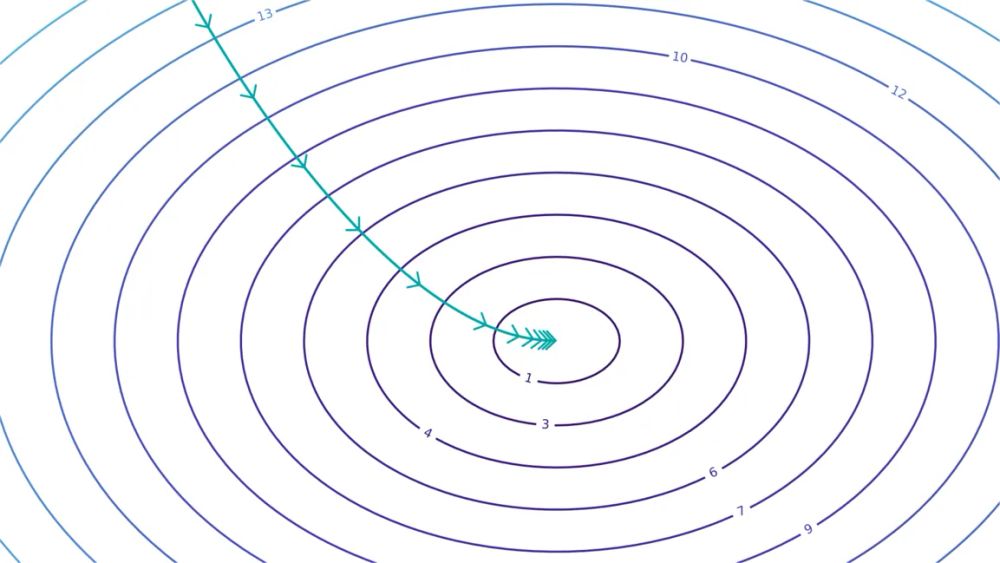

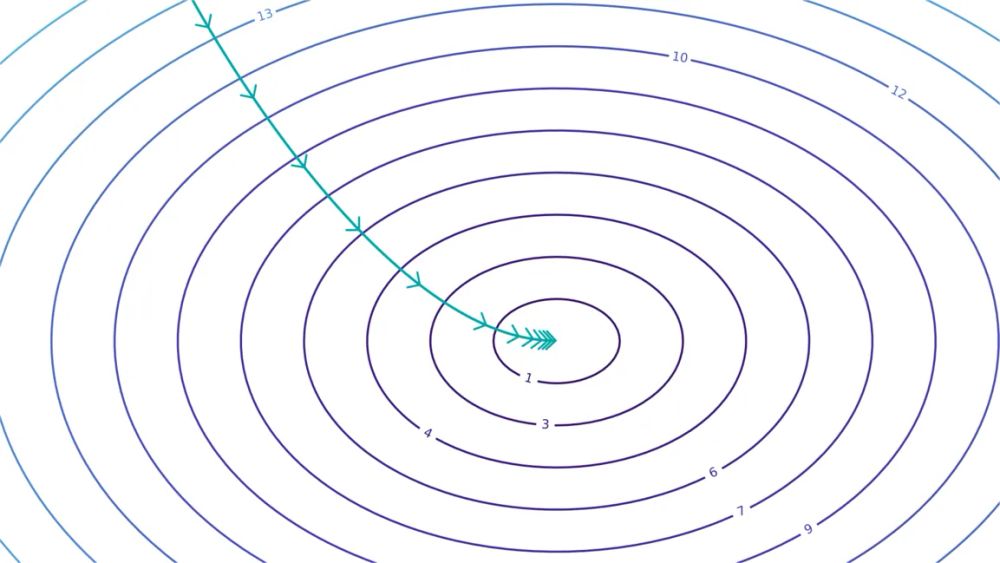

One way is to say that better AI software reduces the compute needed to reach the same capability.

For example, imagine a curve relating a measure of capabilities to log(training compute). After making an algorithmic innovation, the curve shifts to the left, saving compute:
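To make the leftward shift concrete, here is a toy sketch. It assumes capability is a logistic function of log10(effective compute), where effective compute is training compute times a software efficiency multiplier. The curve shape, the `midpoint` and `slope` parameters, and the 3x efficiency gain are all hypothetical and chosen only for illustration.

```python
import math


def capability(compute, efficiency=1.0, midpoint=24.0, slope=1.0):
    """Toy capability score in (0, 1), logistic in log10(effective compute)."""
    effective = math.log10(compute * efficiency)
    return 1.0 / (1.0 + math.exp(-slope * (effective - midpoint)))


def compute_needed(target, efficiency=1.0, midpoint=24.0, slope=1.0):
    """Invert the toy curve: training compute required to reach `target`."""
    logit = math.log(target / (1.0 - target))
    return 10 ** (midpoint + logit / slope) / efficiency


before = compute_needed(0.5)                 # baseline software
after = compute_needed(0.5, efficiency=3.0)  # hypothetical 3x efficiency gain
shift = math.log10(before / after)           # leftward shift in log10(compute)

print(f"Compute saved: {before / after:.1f}x")
print(f"Curve shift: {shift:.2f} units of log10(compute)")
```

In this toy model an efficiency multiplier of g moves the whole curve left by log10(g), so the same capability is reached with 1/g of the training compute.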

There are many ways to improve algorithms and data. For example, you could change model architectures, build better RL environments, and improve training recipes.

But how do you make concrete what makes one piece of AI software better than another?

Developing more powerful AI isn’t just about scaling compute. It’s also about improving algorithms and data quality, which let you build better models with the same compute.

We call this “AI software progress” — here’s everything you need to know about it: 🧵
