Recurrent cortical networks encode natural sensory statistics via sequence filtering

www.cell.com/neuron/abstr...

#neuroscience

@gelbanna

PhD student in Speech and Hearing at Harvard/MIT. Building ANNs to study how humans perceive, understand, and produce speech. Working with @joshhmcdermott.bsky.social. MSc at EPFL, BSc at Cairo University. Ex-Logitech and IDIAP.

Nature research paper: Compact deep neural network models of the visual cortex

go.nature.com/3OKRXZU

What is the relationship between memorization and generalization in AI? Is there a fundamental tradeoff? In infinitefaculty.substack.com/p/memorizati... I’ve reviewed some of the evolving perspectives on memorization & generalization in machine learning, from classic perspectives through LLMs.

Two more posters from our lab at ARO today:

T152 A Model of Speech Recognition Reproduces Signatures of Human Speech Perception and Reveals Mechanisms of Contextual Integration, by Gasser Elbanna

T171 Hearing-Impaired Deep Neural Networks Predict Real-World Hearing Difficulties, by Mark Saddler

If at ARO today, check out the presentations from our lab:

talk at 3:15pm: Optimized Models of Uncertainty Explain Human Confidence in Auditory Perception, by Lakshmi Govindarajan

poster 28: In-Silico fMRI Experiments Enable Comparisons of Speech Models to Human Auditory Cortex, by Gasser Elbanna

Should you go to academia or industry for research in AI or cognitive science? It's the most common question I get asked by PhD students, and I've written up some of my thoughts on the answer, as an epilogue to my research-focused series on these fields: infinitefaculty.substack.com/p/on-researc...

The cerebellum supports high-level language?? Now out in @cp-neuron.bsky.social, we systematically examined language-responsive areas of the cerebellum using precision fMRI and identified a *cerebellar satellite* of the neocortical language network!

authors.elsevier.com/a/1mUU83BtfH...

1/n 🧵👇

🧠 Hiring a Research Assistant/Lab Manager! Share widely! 📍 St. Louis | ⏰ Full-time

We're launching the How We Learn Lab @WashU, studying attention, learning & memory interactions. Perfect for anyone interested in dev cog neuro who wants hands-on experience before grad school.

deckerlab.com

We evaluated three classes of speech models against a set of neural signatures. If you’re curious how they performed, stop by our poster to learn more!

Prior work has shown that speech models can predict brain responses to natural speech. However, it remains unclear whether these models also reproduce well-documented signatures of the auditory cortex.

If you’re still at #NeurIPS2025, come say hi at our poster at @unireps.bsky.social in Ballroom 20D!

I'm presenting work co-led by Ivy Brundege and me, with @joshhmcdermott.bsky.social, showing that in silico fMRI experiments of speech models reveal notable discrepancies with human auditory cortex.

Not attending NeurIPS this year, but very much looking to connect.

I’m seeking a PhD research internship next summer in AI for Science, especially where AI meets brain and cognitive sciences. 🧠

If you’re hiring, I’d love to connect!

bkhmsi.github.io

Special issue of Neuropsychologia celebrating the career of John Duncan.

www.sciencedirect.com/special-issu...

#neuroscience

New pre-print from our lab, by Lakshmi Govindarajan with help from Sagarika Alavilli, introducing a new type of model for studying sensory uncertainty. www.biorxiv.org/content/10.1...

Here is a summary. (1/n)

Looking for a PhD program where you can study computational neuroscience? NYU has a fantastic array of researchers covering the field:

groups.google.com/g/systems-ne...

I'm recruiting PhD students to join my new lab in Fall 2026! The Shared Minds Lab at @usc.edu will combine deep learning and ecological human neuroscience to better understand how we communicate our thoughts from one brain to another.

Excited to share that I'm joining WashU in January as an Assistant Prof in Psych & Brain Sciences! 🧠✨

I'm also recruiting grad students to start next September - come hang out with us! Details about our lab here: www.deckerlab.com

Reposts are very welcome! 🙌 Please help spread the word!

It has been "known" that musical experience improves auditory coding in the brainstem. But... a new multilab study concludes:

"Our findings provide no evidence for associations between early auditory neural responses and either musical training or musical ability."

👀

www.nature.com/articles/s41...

My first paper with @naoshigeuchida.bsky.social is finally out in @natcomms.nature.com! rdcu.be/eACGf

TL;DR: asymmetric learning rates can be induced by shifts in tonic dopamine, giving rise to pessimistic/optimistic biases in agents or animals undergoing reinforcement learning.
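
For readers unfamiliar with the idea, here is a minimal sketch of how asymmetric learning rates alone can bias a learned value. It is not the paper's model and does not simulate dopamine; the function name `learn_value`, the parameters `alpha_pos` and `alpha_neg`, and the 50% reward task are all assumptions made for illustration.

```python
import random


def learn_value(alpha_pos, alpha_neg, p_reward=0.5, n_trials=20000, seed=0):
    """Learn the value of a single cue with sign-dependent learning rates.

    alpha_pos scales updates after positive prediction errors,
    alpha_neg scales updates after negative ones (both hypothetical names).
    Returns the value estimate averaged over the second half of training.
    """
    rng = random.Random(seed)
    value = 0.5
    tail = []
    for t in range(n_trials):
        # Bernoulli reward: 1 with probability p_reward, else 0.
        reward = 1.0 if rng.random() < p_reward else 0.0
        delta = reward - value  # reward prediction error
        alpha = alpha_pos if delta > 0 else alpha_neg
        value += alpha * delta
        if t >= n_trials // 2:
            tail.append(value)
    return sum(tail) / len(tail)


# The true expected reward is 0.5 in all three cases.
optimistic = learn_value(alpha_pos=0.03, alpha_neg=0.01)
pessimistic = learn_value(alpha_pos=0.01, alpha_neg=0.03)
balanced = learn_value(alpha_pos=0.02, alpha_neg=0.02)
print(f"optimistic={optimistic:.2f} pessimistic={pessimistic:.2f} balanced={balanced:.2f}")
```

In this toy setting, a larger rate for positive errors pulls the estimate above the true expected reward, and a larger rate for negative errors pulls it below, which is one simple way to read "optimistic" and "pessimistic" biases.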

NeurIPS is endorsing EurIPS, an independently-organized meeting which will offer researchers an opportunity to additionally present NeurIPS work in Europe concurrently with NeurIPS.

Read more in our blog post and on the EurIPS website:

blog.neurips.cc/2025/07/16/n...

eurips.cc

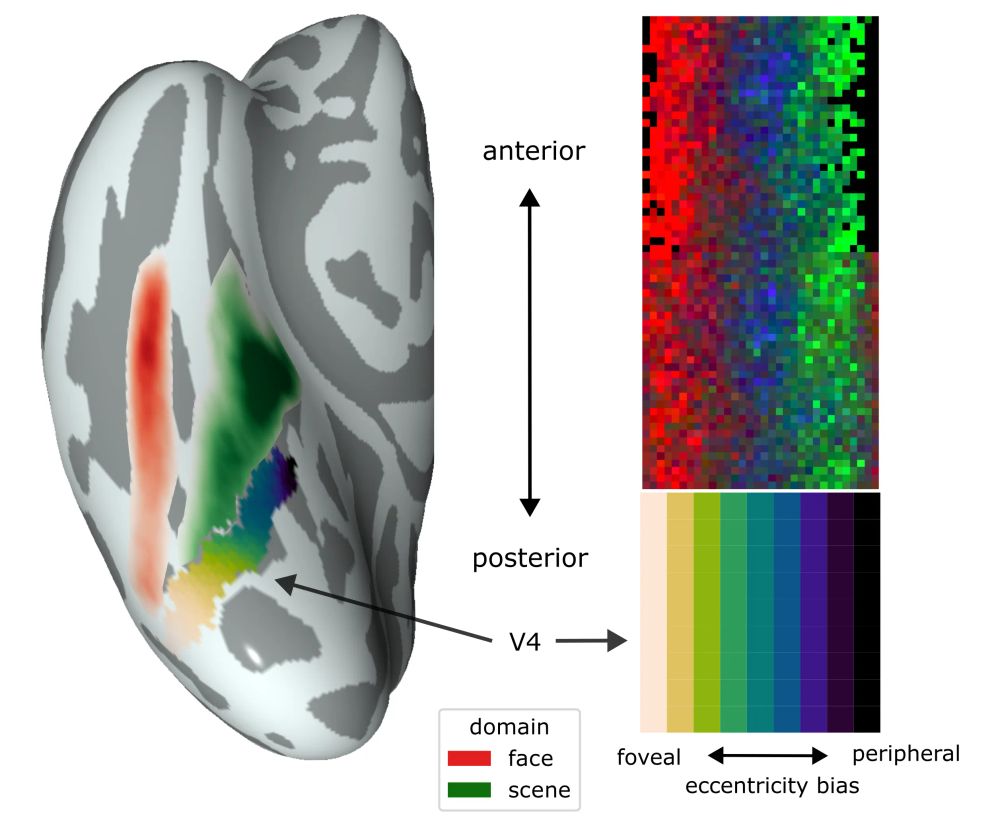

What shapes the topography of high-level visual cortex?

Excited to share a new pre-print addressing this question with connectivity-constrained interactive topographic networks, titled "Retinotopic scaffolding of high-level vision", w/ Marlene Behrmann & David Plaut.

🧵 ↓ 1/n

3. We manipulated the model’s access to past and future speech cues, revealing the importance of the acoustic context and its directionality in human speech recognition.

Come to our poster to learn more!

🧵4/4

2. These tasks allowed us to compute the first full phoneme confusion matrix in humans at scale. This enabled the first systematic comparison of human–model phoneme confusions, revealing that humans and models share not only similar response patterns but also similar patterns of confusions.

🧵3/4

This work has 3 main contributions:

1. We developed new models of continuous speech recognition alongside novel behavioral tasks to compare both models and humans on speech perception without conflating speech and language.

🧵2/4

At the Frontiers in NeuroAI symposium @kempnerinstitute.bsky.social, I will be presenting a poster entitled "A Model of Continuous Phoneme Recognition Reveals the Role of Context in Human Speech Perception" (Poster #17).

Work done with @joshhmcdermott.bsky.social.

#NeuroAI2025

🧵1/4

I have been teaching my trainees exactly this for years...

universityaffairs.ca/career-advic...

This is exactly why @kordinglab.bsky.social tells everyone to write abstracts and aims pages first before starting projects / writing grants

Check out our new work on making robots process touch more like brains!

Surprisingly, ConvRNNs best matched mouse cortex—passing the NeuroAI Turing Test. We also developed tactile-specific SSL augmentations and an Encoder-Attender-Decoder framework unifying ConvRNNs, SSMs & Transformers.

If returns on science investment are shrinking, there are plenty of potential culprits

https://go.nature.com/4k9FVUQ

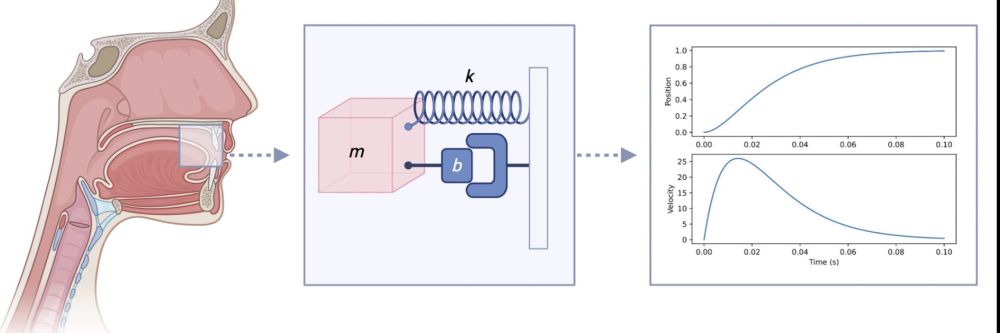

✨Discovering dynamical laws for speech gestures ✨

➡️ I’m delighted to announce my new article out today in Cognitive Science, where I discover simple mathematical laws that govern articulatory control in speech.

🔗 onlinelibrary.wiley.com/doi/full/10....

@cogscisociety.bsky.social

New paper! 🧠 **The cerebellar components of the human language network**

with: @hsmall.bsky.social @moshepoliak.bsky.social @gretatuckute.bsky.social @benlipkin.bsky.social @awolna.bsky.social @aniladmello.bsky.social and @evfedorenko.bsky.social

www.biorxiv.org/content/10.1...

1/n 🧵