None of this would work without my TAs: Dušan Variš, Tomáš Musil, Jan Bronec, @gianlucavico.bsky.social, Adnan Al Ali, Kristýna Onderková, and @straka-milan.bsky.social taking care of ReCodEx: recodex.mff.cuni.cz. Thank you 🙏

Some students find the assignments too time-consuming. Fair. But here's what the data shows over 3 years:

📉 Forum questions dropped ~4×

📈 Full bonus points: 20% → 27% → 52%

📉 Avg. test attempts: 2.7 → 2.4 → 1.9

Asking less, achieving more, iterating less. 🤔

3rd run teaching ML to 250+ bachelor students (with great materials originally by @straka-milan.bsky.social). Core philosophy: explain the math, implement algorithms from scratch, Kaggle-style competitions, all auto-graded. ufal.mff.cuni.cz/courses/npfl...

But look what LLMs did to the course 👇

Spent time making AI-generated images of Bayes' Rule, Laplace Smoothing, Markov Chains & Shannon Entropy for class today 🎨🤖 Even though the images are objectively hilarious, none of the 50 students in the room laughed. Or even smiled. 💀

More interesting: humans are predictably inconsistent in their values. LLMs capture this but overgeneralize: they become more stereotypically consistent than actual humans.

After several rejections, finally publishable. To appear at the Multilingual Multicultural Evaluation workshop at EACL 2026.

I reviewed papers evaluating LLM values using sociology questionnaires. Different methods, different results. Didn't trust them, so I tested it myself.

Methodology matters. Short answers vs CoT, squared err vs KL div.: each changes which populations an LLM "aligns" with.

www.arxiv.org/pdf/2602.04033
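To make the "squared err vs KL div." point concrete, here is a minimal sketch with made-up numbers. The distributions, the names `model`, `population_a` and `population_b`, and the KL direction (population relative to model) are all assumptions for illustration, not the setup from the paper.

```python
import math

def squared_error(p, q):
    """Sum of squared differences between two answer distributions."""
    return sum((pi - qi) ** 2 for pi, qi in zip(p, q))

def kl_divergence(p, q, eps=1e-9):
    """KL(p || q); eps guards against zero probabilities in q."""
    return sum(pi * math.log(pi / max(qi, eps)) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical answer distributions over a 4-point questionnaire scale.
model = [0.50, 0.30, 0.15, 0.05]
population_a = [0.50, 0.30, 0.02, 0.18]
population_b = [0.30, 0.50, 0.15, 0.05]

for name, pop in [("A", population_a), ("B", population_b)]:
    print(name,
          round(squared_error(model, pop), 3),
          round(kl_divergence(pop, model), 3))
```

With these toy numbers, squared error ranks population A as the closer match, while KL divergence ranks population B as closer, because KL punishes the mass A puts on an answer the model considers unlikely.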

We have updated the pre-print on CUS-QA, a benchmark for regional knowledge about Czechia, Slovakia and Ukraine: arxiv.org/abs/2507.22752

It now includes retrieval-augmented generation results and a more detailed analysis of how model performance depends on the question topic or visual context.

👉 What do we do?

We use the good old IBM Model 1 to align subwords with morphological features from UniMorph, and we show it captures the same thing as morpheme boundary recall.

👉 Why does it matter?

For many languages good segmentation data is missing. Morphological features are more widely available.

We (= mostly @abyste.bsky.social) developed a way to evaluate how morphological a #tokenization is w/o gold segmentation labels. arxiv.org/abs/2601.18536 The key: align subword tokens with morphological features from UniMorph using IBM Model 1.
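For readers who have not met IBM Model 1, here is a minimal EM sketch of the alignment idea. Everything in it is an assumption for illustration: the toy word list, the feature tags, the alignment direction (token given feature), and the omission of a NULL source. It is not the implementation or the scoring from the paper.

```python
from collections import defaultdict

def ibm_model1(pairs, iterations=10):
    """Estimate t(token | feature) with EM, in the style of IBM Model 1.

    pairs: list of (features, tokens), both lists of strings.
    """
    token_vocab = {tok for _, tokens in pairs for tok in tokens}
    # Uniform initialisation of the translation probabilities.
    t = defaultdict(lambda: 1.0 / len(token_vocab))
    for _ in range(iterations):
        count = defaultdict(float)  # expected count of (token, feature)
        total = defaultdict(float)  # expected count of feature
        for features, tokens in pairs:
            for tok in tokens:
                # E-step: spread each token over the features of its word.
                norm = sum(t[(tok, feat)] for feat in features)
                for feat in features:
                    frac = t[(tok, feat)] / norm
                    count[(tok, feat)] += frac
                    total[feat] += frac
        # M-step: renormalise the expected counts per feature.
        t = defaultdict(float, {
            (tok, feat): c / total[feat] for (tok, feat), c in count.items()
        })
    return t

def align(features, tokens, t):
    """Link each subword token to its most probable feature."""
    return [(tok, max(features, key=lambda feat: t[(tok, feat)]))
            for tok in tokens]

# Hypothetical toy data: UniMorph-like features and a made-up segmentation.
pairs = [
    (["N", "PL"], ["cat", "s"]),
    (["N", "SG"], ["cat"]),
    (["N", "PL"], ["dog", "s"]),
    (["N", "SG"], ["dog"]),
]
t = ibm_model1(pairs)
print(align(["N", "PL"], ["cat", "s"], t))
```

On this toy data the token "s" ends up linked to the plural feature, which is the behaviour one would hope for from a morphologically sensible segmentation.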

To appear in EACL 2026 Findings.

Happy holidays! 🎄🎅🤩🎁

Attenzione! 🇮🇹 Know Piedmontese or Neapolitan speakers? @gianlucavico.bsky.social is collecting crowd-sourced translations to evaluate LLM performance on these regional languages. Partecipate!

Cultural awareness is trickier. Different data for different cultures means we can't really compare performance across cultures in a straightforward way. And there's no clear optimization target for cultural awareness beyond curating diverse training data.

☝️🧵 Most current approaches emphasize language neutrality: about two-thirds of VL benchmarks use translation-based evaluation. This makes sense because we can explicitly train for language neutrality when we have parallel data. But... 🧵👇

With @andrei-a-manea.bsky.social, we posted a survey on multilingual vision-language models 👉 arxiv.org/pdf/2509.22123

We reviewed 31 models+21 benchmarks. There's a tension between language neutrality (same results across languages) & cultural awareness (context matters differently across cultures)

Most vision-language models only work in English. We explore how different parallel data types (machine-translated vs authentic captions) affect cross-lingual transfer. Key finding: authentic data can outperform machine translation, and multilingual training beats bilingual approaches. #NLP

So proud of my PhD student @andrei-a-manea.bsky.social for his first first-author publication! 🎉 He presented this work last week at TSD. Investigating the Effect of Parallel Data in the Cross-Lingual Transfer for Vision-Language Encoders arxiv.org/pdf/2504.21681

For evaluation researchers: Simple string-overlap metrics (BLEU, chrF) work surprisingly well for factual QA. 🤔 When answers are mostly named entities, exact matches matter more than we thought.

LLM-as-judge 🦙🧑⚖️ correlates best with human judgment, though.
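A rough sketch of why character overlap can work for short entity answers. This is a simplified chrF-style score written for illustration, not the official chrF definition and not the evaluation code behind the results above. The example answers are hypothetical. For real evaluation, an established implementation such as sacreBLEU is the safer choice.

```python
from collections import Counter

def char_ngrams(text, n):
    """Multiset of character n-grams in text."""
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))

def chrf_like(hypothesis, reference, max_n=6, beta=2.0):
    """Simplified chrF-style F-beta over character n-grams of order 1..max_n."""
    precisions, recalls = [], []
    for n in range(1, max_n + 1):
        hyp, ref = char_ngrams(hypothesis, n), char_ngrams(reference, n)
        if not hyp or not ref:
            continue  # one of the strings is shorter than n
        overlap = sum((hyp & ref).values())
        precisions.append(overlap / sum(hyp.values()))
        recalls.append(overlap / sum(ref.values()))
    if not precisions:
        return 0.0
    p = sum(precisions) / len(precisions)
    r = sum(recalls) / len(recalls)
    if p + r == 0:
        return 0.0
    return (1 + beta ** 2) * p * r / (beta ** 2 * p + r)

# Hypothetical model answers scored against the reference "Praha".
for answer in ["Praha", "Prague", "Brno"]:
    print(answer, round(chrf_like(answer, "Praha"), 3))
```

An exact match scores 1.0, a spelling variant of the same entity keeps a fair amount of overlap, and a different entity scores much lower, which is roughly the signal a factual QA metric needs.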

The results are... humbling 😅

Even the best models:

>40% accuracy on textual questions

<30% on visual questions

Often perform better in English than the local language (!!)

Visual QA with regional images is especially challenging.

The problem: Most QA benchmarks focus on globally known facts. But real users ask about local geography, culture, and history.

We collected questions from native speakers in Czechia 🇨🇿, Slovakia 🇸🇰, and Ukraine 🇺🇦 about facts locals know but outsiders don't.

🧵 We're releasing CUS-QA - a new benchmark for testing LLMs on regional knowledge!

Find out what your model knows about Czechia 🇨🇿, Slovakia 🇸🇰, and Ukraine 🇺🇦!

👉 Textual and visual questions, answers, and human judgment on model outputs!

huggingface.co/datasets/ufa...

www.arxiv.org/abs/2507.22752

Stay tuned, we will release the dataset soon...

We need to have poster fights at the end of every conference.

Just presented MAGBIG, a new dataset and evaluation methodology for gender bias in multilingual text-to-image generation. Grammatical gender matters when studying these biases across languages!

Thanks to Felix Friedrich, @kathaem.bsky.social and all co-authors - it was fun to work on this together!

This week I am at #ACL2025NLP in Vienna 🎡🇦🇹. Find me 🕵️ or message 💌 me if you want to chat about multilinguality or tokenization. Stop 🛑 by our poster on gender bias in text-to-image generation on Monday aclanthology.org/2025.acl-lon...

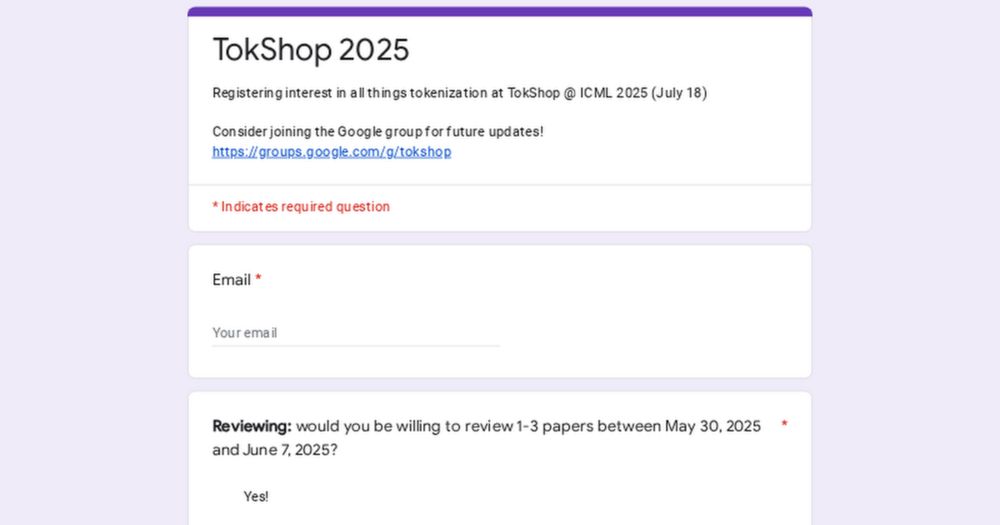

TokShop @ #ICML2025 got way more submissions than expected! 📈 We could really use a few more reviewers to help out. If you have the capacity to review a #tokenization paper by Saturday, please fill out this form: forms.gle/32A6sQHQrMSb... 🙏

📣 Call for Papers Alert: TokShop @ ICML 2025

TokShop explores tokenization across all data modalities. Topics include: subword NLP techniques, multimodal approaches, multilingual challenges, post-training modification, alternative representations, and statistical perspectives.

Got a tokenization paper that just didn't make the cut for ICML? Submit it to the Tokenization Workshop TokShop at #ICML2025 -- we'd love to see it there!

tokenization-workshop.github.io

If you are attending the virtual NAACL day on May 6, 5 pm Central European Time, don't miss @kathaem.bsky.social presenting our work on the importance of semantic token overlap in multilingual language models. aclanthology.org/2025.naacl-s...

Attending #NAACL2025 virtually. Since 2022, I've been training a classifier on papers I read to tackle the arXiv madness. Ran it on the NAACL proceedings for my personalized watch list. 🤓📺 However, it's far from perfect: Multilingual cultural awareness is great, but where is tokenization? 🤷

We're organizing ✨Tokenization Workshop✨ TokShop❗ Join us at @icmlconf.bsky.social in July in Vancouver 🇨🇦. Follow @tokshop.bsky.social for updates! Submit your paper by May 30.