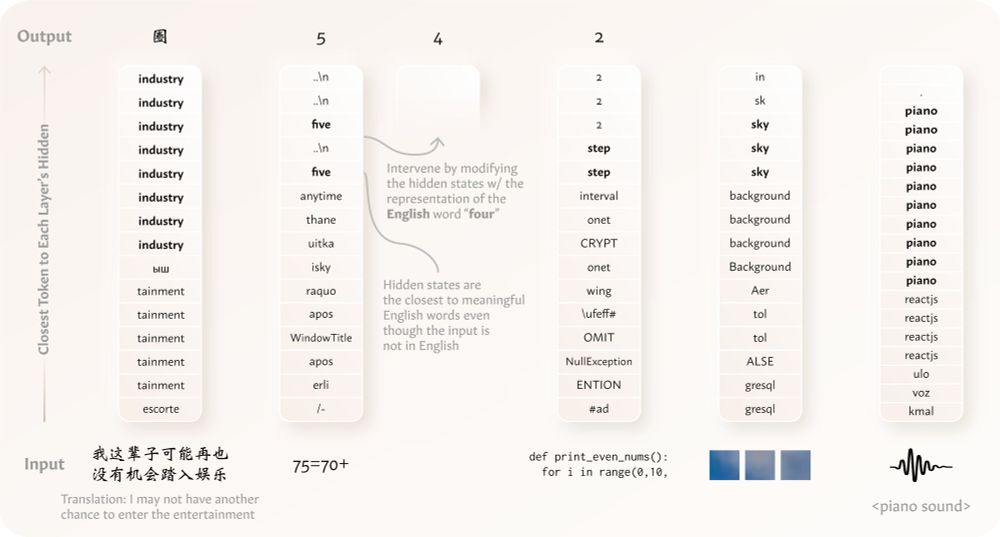

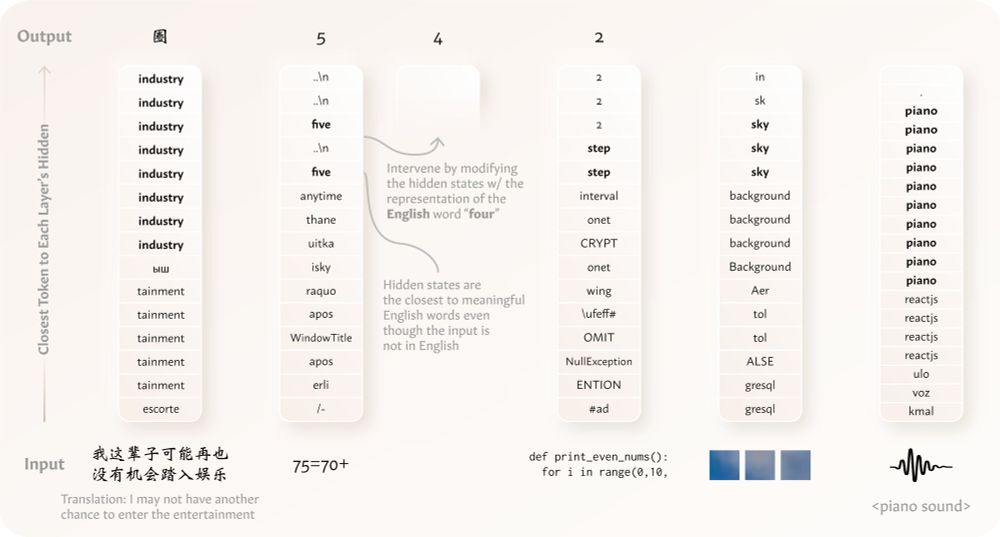

To appear @ #ICLR2025! We show that LMs represent semantically-equivalent inputs across languages, modalities, etc. similarly. This shared representation space is structured by the LM's dominant language, which is also relevant to recent phenomena where LMs "think" in Chinese🀄️ in English🔠 contexts

22.01.2025 18:10

We have released our code at github.com/ZhaofengWu/s.... We hope that this could be useful for future studies understanding how LMs work!

17.12.2024 15:26

Would love to be added! Thank youuu 🙏

05.12.2024 22:09

💡We find that models “think” 💭 in English (or in general, their dominant language) when processing distinct non-English or even non-language data types 🤯 like texts in other languages, arithmetic expressions, code, visual inputs, & audio inputs‼️ 🧵⬇️ arxiv.org/abs/2411.04986

02.12.2024 18:08

Hi Marc! Do you mind adding me to the pack? Thanks!

01.12.2024 04:37

🙌thank you thank you!!

25.11.2024 04:14