Our department is hiring an Assistant Teaching Professor!! This is a joint-appointed position with Computational Social Sciences (css.ucsd.edu). It's 75+ degrees F and sunny today, just thought I'd mention apol-recruit.ucsd.edu/JPF04461

omg everybody go draw a horse this is what the internet was made for

gradient.horse

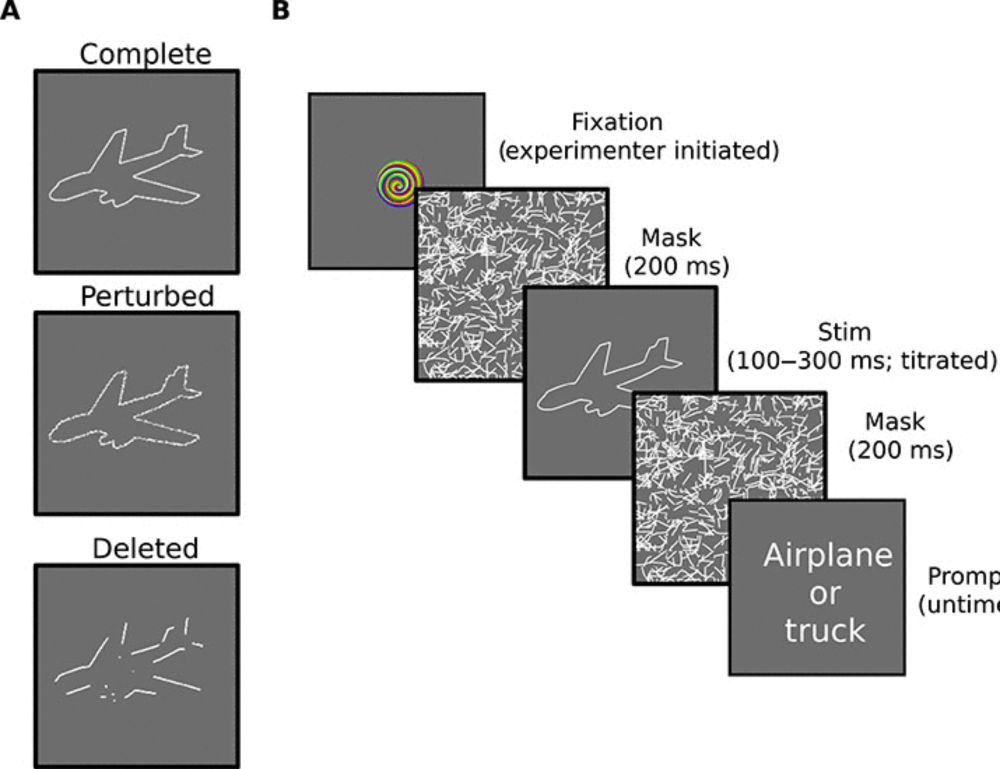

New preprint with @SamJung @timbrady.bsky.social and @violastoermer.bsky.social: osf.io/preprints/ps.... Here we uncover what might be driving the “meaningfulness benefit” in visual working memory. Studies show that real objects are remembered better in VWM tasks than abstract stimuli. But why? 1/

Come join our team!

For more details & official postings: www.vislearnlab.org/join-the-lab

Recent publications & projects at:

www.vislearnlab.org/publications

Feel free to reach out directly with questions!

Position 1: Developmental focus—work closely with postdoc @ajhaskins.bsky.social. Hands-on data collection with kids in our lab & at children's museums.

Position 2: Computational focus—manage eye-tracking studies, video data analysis, & lab software infrastructure.

The Visual Learning Lab is hiring TWO lab coordinators!

Both positions are ideal for someone looking for research experience before applying to graduate school. Application deadline is Feb 10th (approaching fast!)—with flexible summer start dates.

a red building on UPENN's campus photographed during the fall

the Philadelphia skyline, with clear skies and autumn trees

starting fall 2026 i'll be an assistant professor at @upenn.edu 🥳

my lab will develop scalable models/theories of human behavior, focused on memory and perception

currently recruiting PhD students in psychology, neuroscience, & computer science!

reach out if you're interested 😊

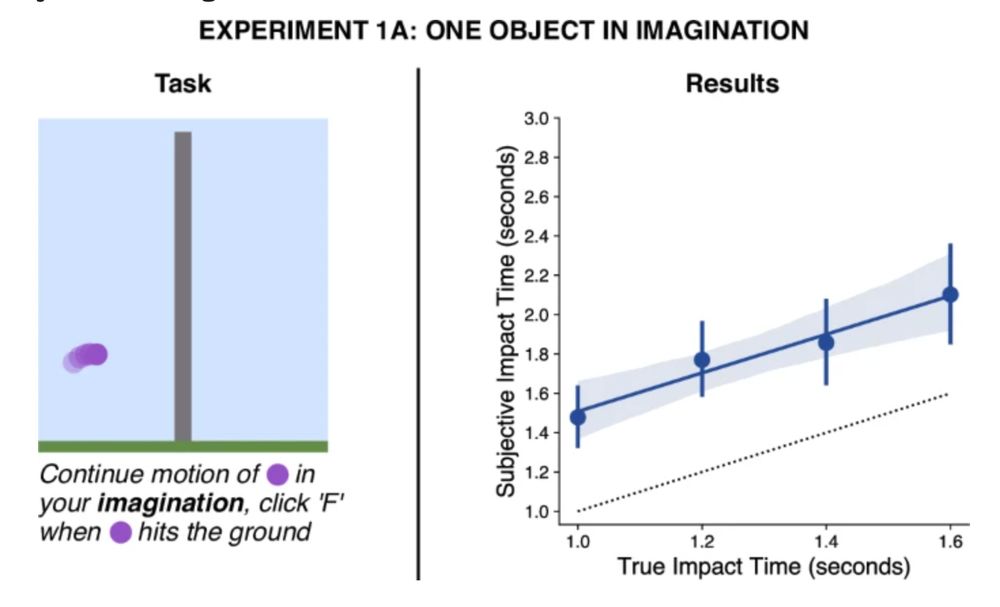

title line

screenshots from the nine tasks

Very excited to share the first empirical paper from LEVANTE: we describe the LEVANTE core tasks, a set of nine open source tasks for measuring learning and development in kids ages 5-12 years.

osf.io/preprints/ps...

🧵

This platform is so important! It allows researchers to do research regardless of their university's prestige! So important for leveling the playing field for the many developmental psychologists at under-resourced institutions. Please consider donating - donations will be matched!

This resource has been such a boon to the developmental science community broadly and to my lab specifically. One needn’t look any further than the publications that have come out of the platform to see this (lookit.readthedocs.io/en/develop/p...).

Please consider donating!

Just out in Infancy! "Time to talk", by our great team (Janet Bang, Mónica Munévar, Arlyn Mora, and Anne Fernald), used day-long recordings of English- and Spanish-speaking families in the US to explore what caregivers are doing when they talk the most with their children.

Thrilled to start 2026 as faculty in Psych & CS

@ualberta.bsky.social + Amii.ca Fellow! 🥳 Recruiting students to develop theories of cognition in natural & artificial systems 🤖💭🧠. Find me at #NeurIPS2025 workshops (speaking coginterp.github.io/neurips2025 & organising @dataonbrainmind.bsky.social)

For our last talk of the workshop, we are honored to have Prof. @brialong.bsky.social sharing with us on “The BabyView Dataset: Learning from and about young children's everyday experiences” #NeurIPS2025

📍Location: Upper Level Room 10.

🗓️ data-brain-mind.github.io

In the running for greatest human accomplishment.

Abstract of paper

Figure 1!

What do kids choose to do when they think that someone will help them? What about when no one will help?

New paper: "Young children strategically adapt to unreliable social partners" - led by Kat Shannon, with @hyogweon.bsky.social and Willem Frankenhuis.

osf.io/preprints/ps...

We’re recruiting a postdoctoral fellow to join our team! 🎉

I’m happy to share that I’ve opened back up the search for this position (it was temporarily closed due to funding uncertainty).

See lab page and doc below for details!

At #COLM2025 and would love to chat all things cogsci, LMs, & interpretability 🍁🥯 I'm also recruiting!

👉 I'm presenting at two workshops (PragLM, Visions) on Fri

👉 Also check out "Language Models Fail to Introspect About Their Knowledge of Language" (presented by @siyuansong.bsky.social Tue 11-1)

A first blogpost from the LEVANTE team, introducing our global project perspective.

A good intro if you're interested in learning more about cross-cultural developmental data collection using LEVANTE.

levante-network.org/global-colla...

🧠 New preprint: Why do deep neural networks predict brain responses so well?

We find a striking dissociation: it’s not shared object recognition. Alignment is driven by sensitivity to texture-like local statistics.

📊 Study: n=57, 624k trials, 5 models doi.org/10.1101/2025...

Humans largely learn language through speech. In contrast, most LLMs learn from pre-tokenized text.

In our #Interspeech2025 paper, we introduce AuriStream: a simple, causal model that learns phoneme, word & semantic information from speech.

Poster P6, tomorrow (Aug 19) at 1:30 pm, Foyer 2.2!

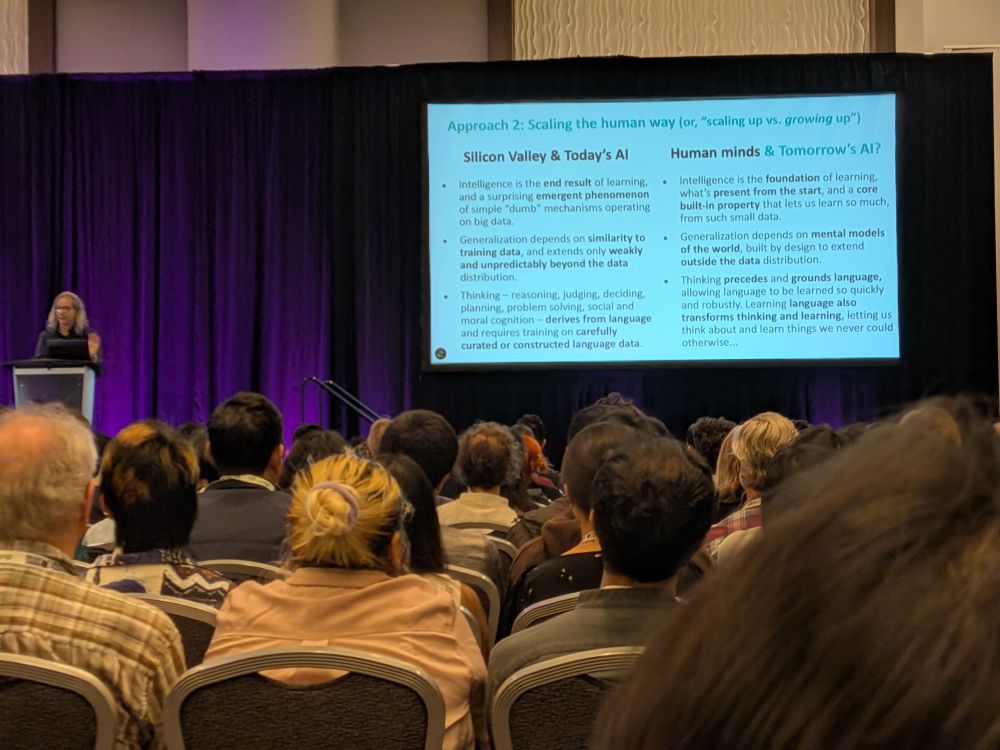

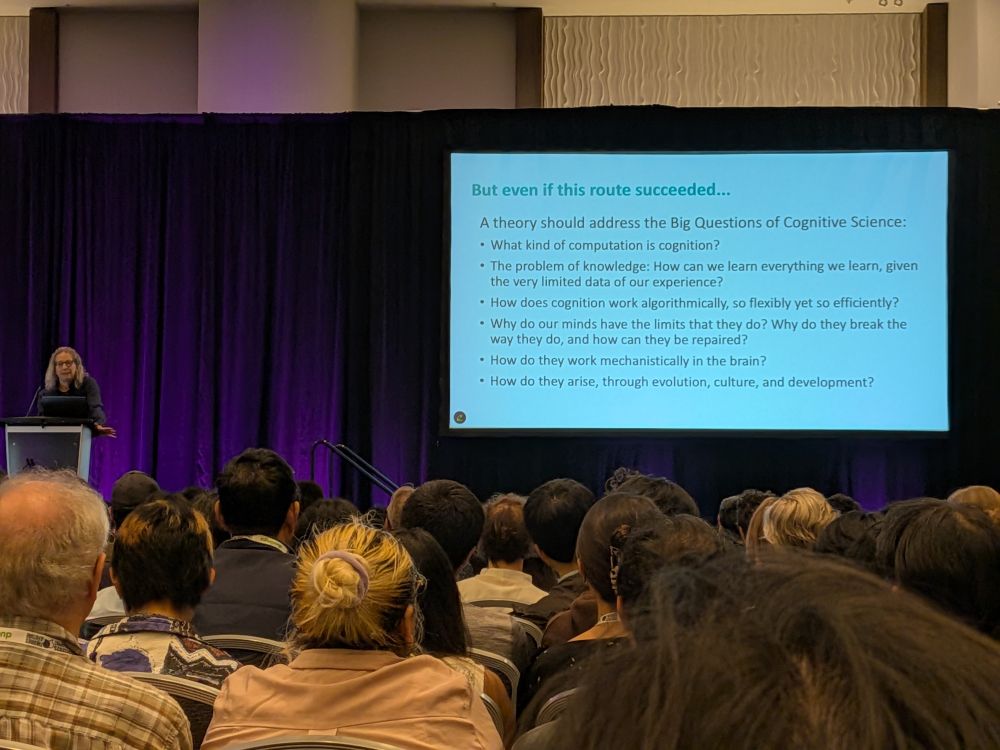

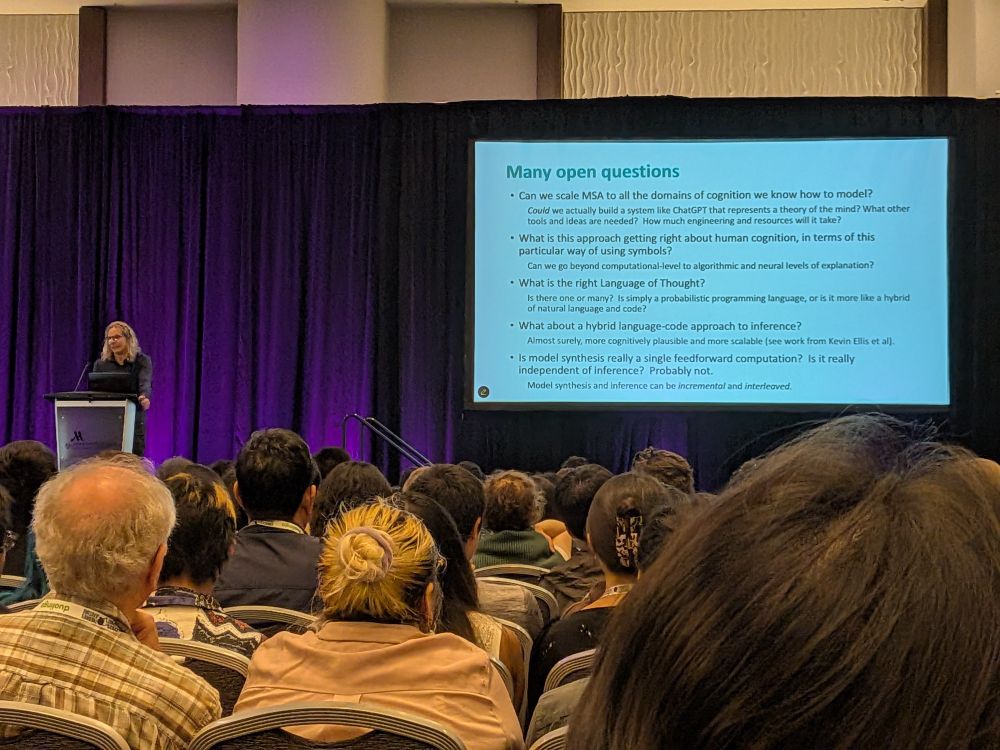

Josh Tenenbaum's inspiring keynote at #cogsci2025 on growing vs scaling AI, the big questions of cognitive science, and the many open questions for the field.

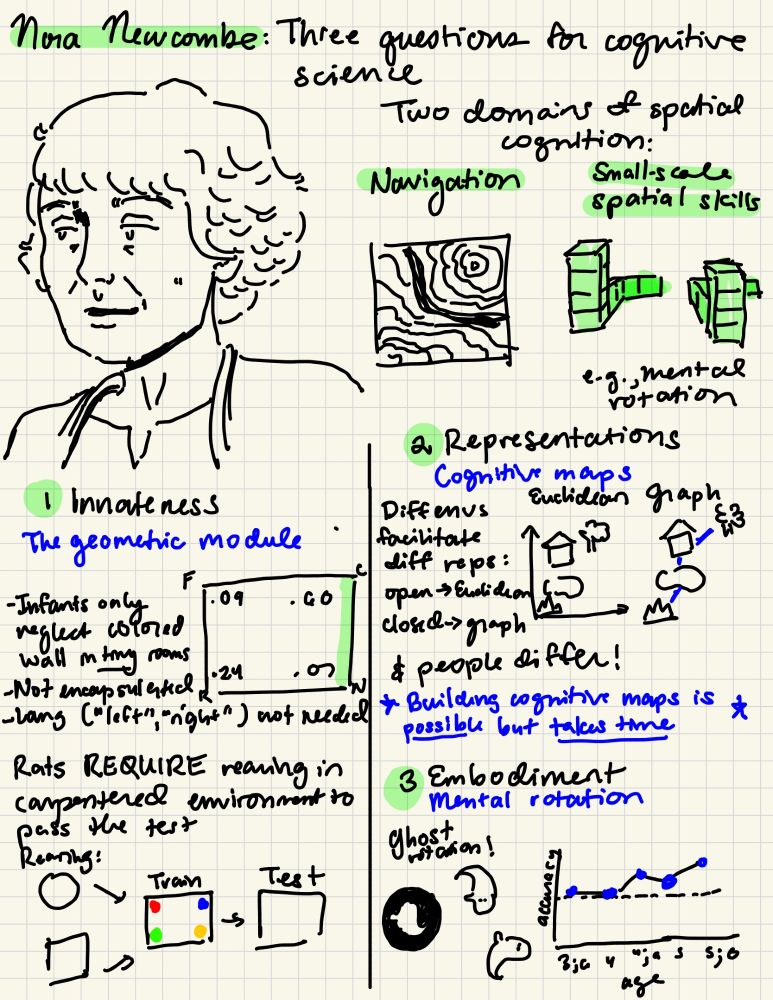

Sketchnote of Nora Newcombe's Rumelhart Prize Talk, posing three questions and case studies for cognitive science: (1) innateness (with the geometric module as a case study), (2) representations (featuring cognitive maps vs. graphs), and (3) embodiment (featuring mental rotation)

Notes from @noranewcombe.bsky.social's beautiful Rumelhart Prize "tasting menu" - congratulations Nora!!! #CogSci2025

1/5 For upcoming work, I recently read some articles on handling mistakes in science. They share an important consensus that I think everyone should know:

Mistakes are a failure of systems, not people. In a working system, making a mistake is normal, but inconsequential. 🧵

🧠How “old” is your model?

Put it to the test with the KiVA Challenge: a new benchmark for abstract visual reasoning, grounded in real developmental data from children and adults.

🏆 Prizes:

🥇$1K to the top model

🥈🥉$500

📅 Deadline: 10/7/25

🔗 kiva-challenge.github.io

@iccv.bsky.social

My paper with @stellalourenco.bsky.social is now out in Science Advances!

We found that children have robust object recognition abilities that surpass many ANNs. Models only outperformed kids when their training far exceeded what a child could experience in their lifetime.

doi.org/10.1126/scia...

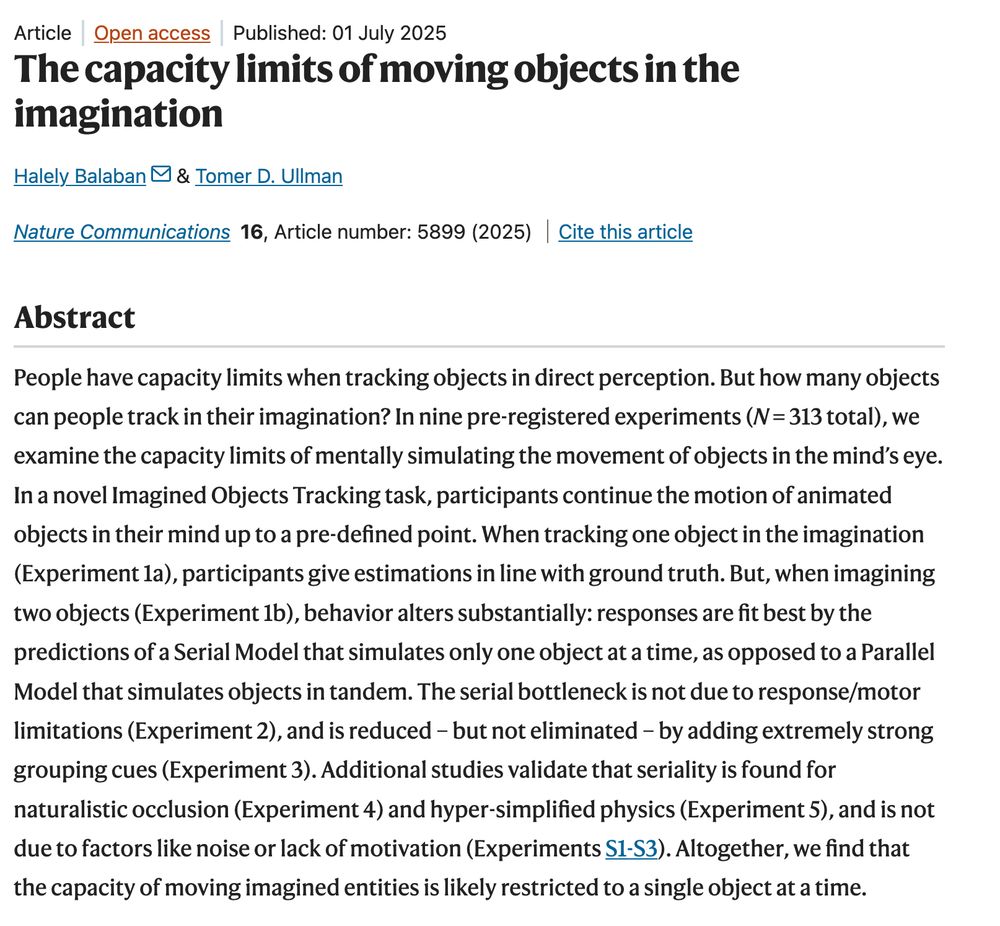

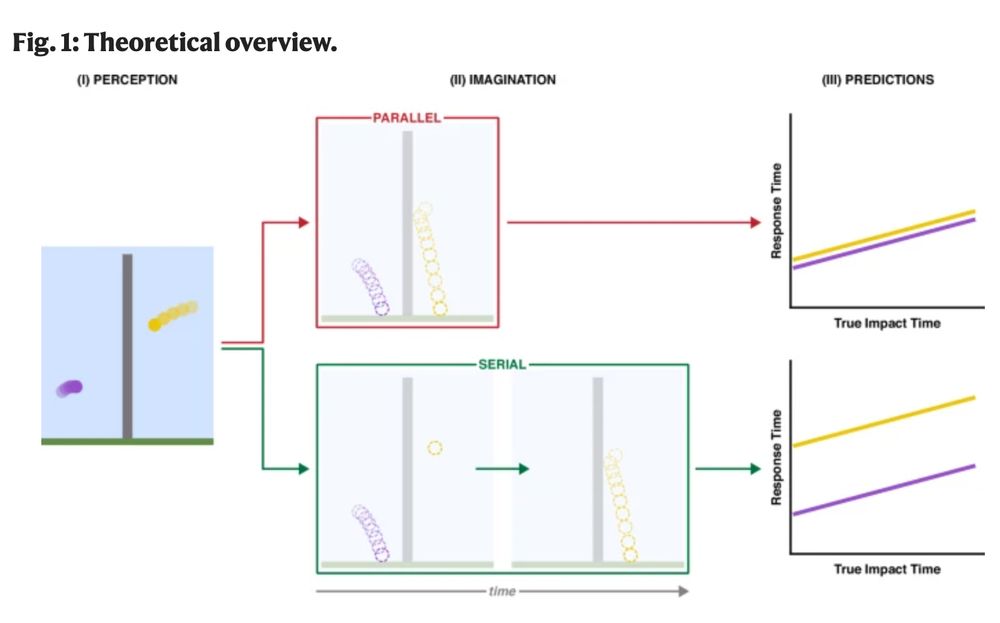

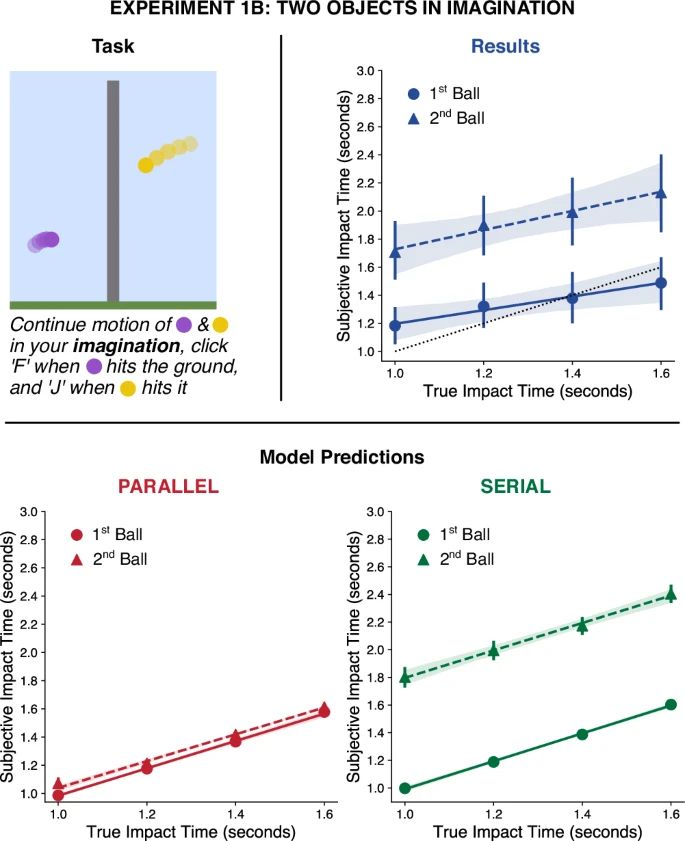

🎈 Out now: 🎈

"The capacity limits of moving objects in the imagination"

(by Balaban & me)

of interest to people thinking about the imagination, intuitive physics, mental simulation, capacity limits, and more

www.nature.com/articles/s41...

Amazing resource that I now use myself in teaching graduate methods at UCSD! Highly recommended.

Experimentology cover: title and curves for distributions.

Experimentology is out today!!! A group of us wrote a free online textbook for experimental methods, available at experimentology.io - the idea was to integrate open science into all aspects of the experimental workflow from planning to design, analysis, and writing.

Out today in @nature.com: we show that individual neurons have diverse tuning to a decision variable computed by the entire population, revealing a unifying geometric principle for the encoding of sensory and dynamic cognitive variables.

www.nature.com/articles/s41...

Trump’s NIH cuts aren’t just numbers. They are delayed cures, abandoned treatments, futures stolen, lives that will be lost. This is why we Stand Up For Science.

#StandUpForScience

#SummerFightForScience