🚋 New blog post:

On "infinite" learning-rate schedules and how to construct them from one checkpoint to the next.

fabian-sp.github.io/posts/2025/0...

🚋 New blog post:

On "infinite" learning-rate schedules and how to construct them from one checkpoint to the next.

fabian-sp.github.io/posts/2025/0...

yes, it does raise questions (and I don't have an answer yet). but I am not sure whether the practical setting falls within the smooth case neither (if smooth=Lipschitz smooth; and even if smooth=differentiable, there are non-diff elements in the architecture like RMSNorm)

This is joint work with @haeggee.bsky.social, Adrien Taylor, Umut Simsekli and @bachfrancis.bsky.social

🗞️ arxiv.org/abs/2501.18965

🔦 github.com/fabian-sp/lr...

Bonus: this provides a provable explanation for the benefit of cooldown: if we plug in the wsd schedule into the bound, a log-term (H_T+1) vanishes compared to constant LR (dark grey).

How does this help in practice? In continued training, we need to decrease the learning rate in the second phase. But by how much?

Using the theoretically optimal schedule (which can be computed for free), we obtain noticeable improvement in training 124M and 210M models.

This allows to understand LR schedules beyond experiments: we study (i) optimal cooldown length, (ii) the impact of gradient norm on the schedule performance.

The second part suggests that the sudden drop in loss during cooldown happens when gradient norms do not go to zero.

Using a bound from arxiv.org/pdf/2310.07831, we can reproduce the empirical behaviour of cosine and wsd (=constant+cooldown) schedule. Surprisingly the result is for convex problems, but still matches the actual loss of (nonconvex) LLM training.

Learning rate schedules seem mysterious? Why is the loss going down so fast during cooldown?

Turns out that this behaviour can be described with a bound from *convex, nonsmooth* optimization.

A short thread on our latest paper 🚞

arxiv.org/abs/2501.18965

That time of the year again, where you delete a word and latex manages to make the line <longer>.

Want all NeurIPS/ICML/ICLR papers in one single .bib file? Here you go!

🗞️ short blog post: fabian-sp.github.io/posts/2024/1...

📇 bib files: github.com/fabian-sp/ml-bib

nice!

Figure 9 looks like a lighthouse guiding the way (towards the data distribution)

you could run an online method for the quantile problem? sth similar to the online median arxiv.org/abs/2402.12828

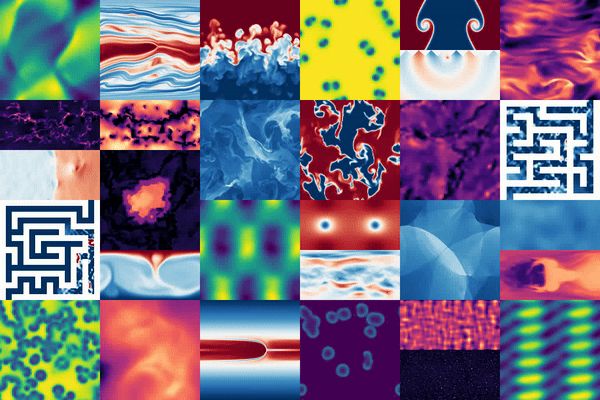

Generating cat videos is nice, but what if you could tackle real scientific problems with the same methods? 🧪🌌

Introducing The Well: 16 datasets (15TB) for Machine Learning, from astrophysics to fluid dynamics and biology.

🐙: github.com/PolymathicAI...

📜: openreview.net/pdf?id=00Sx5...

could you add me? ✌🏻

Not so fun exercise: take a recent paper that you consider exceptionally good, and one that you think is mediocre (at best).

Then look up their reviews on ICLR 2025. I find these reviews completely arbitrary most of the times.

my French 🇨🇵 digital bank (supposedly!) today asked me (via letter) to confirm an account action via sending them a signed letter. wtf

I made a #starterpack for computational math 💻🧮 so please

1. share

2. let me know if you want to be on the list!

(I have many new followers which I do not know well yet, so I'm sorry if you follow me and are not on here, but want to - drop me a note and I'll add you!)

go.bsky.app/DXdZkzV

would love to be added :)