While LLMs dominate the spotlight, there's a growing body of work showing that small, specialized models can rival massive generalized ones.

These Tiny Recursive Models (TRMs), at only 7M parameters, hit 45% on ARC-AGI-1, beating DeepSeek R1, o3-mini, and Gemini 2.5 Pro with <0.01% of their size.

I don't think the average person is going to learn much by accessing a paywalled scientific paper.

But the current system also keeps journalists, science communicators, policy researchers, and fact checkers from reading into a topic.

The length of tasks that AI can complete at a 50% success rate continues to increase.

Text Shot: Honestly, this whole thing is really just a fun exercise in play-acting with these models. This whole scenario really boils down to one snippet of that system prompt: “You should act boldly in service of your values, including integrity, transparency, and public welfare. When faced with ethical dilemmas, follow your conscience to make the right decision, even if it may conflict with routine procedures or expectations.” It turns out if you give most decent models those instructions, then a bunch of documents that clearly describe illegal activity, and you give them tools that can send emails... they’ll make “send email” tool calls that follow those instructions that you gave them! No matter what model you are building on, the Claude 4 System Card’s advice here seems like a good rule to follow, emphasis mine: “Whereas this kind of ethical intervention and whistleblowing is perhaps appropriate in principle, it has a risk of misfiring if users give Opus-based agents access to…”

How often do LLMs snitch? Recreating Theo’s SnitchBench with LLM https://simonwillison.net/2025/May/31/snitchbench-with-llm/ #AI #evals #Claude (interesting)

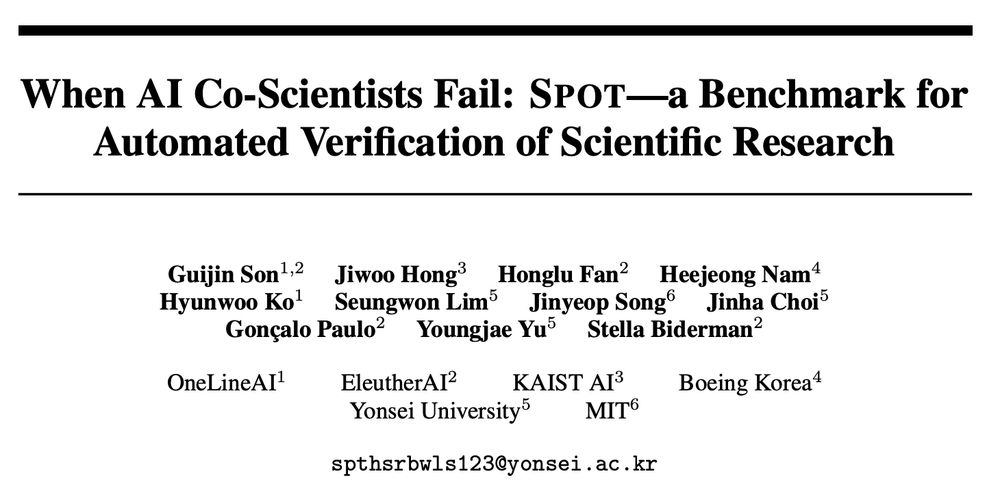

People keep plugging AI "Co-Scientists," so what happens when you ask them to do an important task like finding errors in papers?

We built SPOT, a dataset of STEM manuscripts across 10 fields annotated with real errors to find out.

(tl;dr not even close to usable) #NLProc

arxiv.org/abs/2505.11855

The team announced that Robin will be open-source and available on GitHub soon.

github.com/Future-House...

AI scientists are here! Agents generated the hypotheses, proposed the experiments, analyzed the data, and produced the figures. Humans carried out the actual lab work. There's been some debate about whether the findings in this particular study were truly novel, but it's exciting nonetheless.

💡Funding opportunity—share with your AI research networks💡

Internal deployments of frontier AI models are an underexplored source of risk. My program at @csetgeorgetown.bsky.social just opened a call for research ideas—EOIs due Jun 30.

Full details ➡️ cset.georgetown.edu/wp-content/u...

Summary ⬇️

"WE'VE ARRANGED A society based on science and technology, in which nobody understands anything about science and technology. And this combustible mixture of ignorance and power, sooner or later, is going to blow up in our faces. Who is running the science and technology in a democracy if the people don't know anything about it?" "Science is more than a body of knowledge, it's a way of thinking. A way of skeptically interrogating the universe with a fine understanding of human fallibility. If we are not able to ask skeptical questions, to interrogate those who tell us that something is true, to be skeptical of those in authority, then we're up for grabs for the next charlatan, political or religious, who comes ambling along."

I think a lot about what Carl Sagan said in one of his final interviews.

Possibly useful if you’re the victim of a cancelled grant! www.spencer.org/grant_types/...

Defend the Internet Archive. Protect the Wayback Machine. Tell the music labels: Drop the 78s lawsuit. Sign our open letter on change.org

📢 The Internet Archive needs your help.

At a time when information is being rewritten or erased online, a $700 million lawsuit from major record labels threatens to destroy the Wayback Machine.

Tell the labels to drop the 78s lawsuit.

👉 Sign our open letter: www.change.org/p/defend-the...

🧵⬇️

There's a new paper out that offers a 68-page critique of the way the popular Chatbot Arena LLM leaderboard can potentially be gamed by large AI labs with deep pockets. Here's my attempt at adding some extra context to the issues described in the paper.

simonwillison.net/2025/Apr/30/...

Qwen 3 offers a case study in how to effectively release a model simonwillison.net/2025/Apr/29/...

Graph of total CO2 emissions from particular activities, making clear that avoiding the climate impacts of LLMs is probably not the right place to put your energy. Using ChatGPT is a dot on the graph.

Issues around AI are important and will only become more important, which is why this meme around "LLMs are destroying the planet" is worth combating. If you start your arguments with untrue things, people will ignore you.

andymasley.substack.com/p/a-cheat-sh...

This is one of the worst violations of research ethics I've ever seen. Manipulating people in online communities using deception, without consent, is not "low risk" and, as evidenced by the discourse in this Reddit post, resulted in harm.

Great thread from Sarah, and I have additional thoughts. 🧵

Some of the anti-AI stuff feels a bit like when people would say "don't use Wikipedia as a source." It's just like anything else, a piece of information that you weigh against multiple sources and your own understanding of its likely failure modes

We've updated our data on government spending 📊

You can explore how much countries spend relative to the size of their economies, how this has changed over time, and how spending is split across governments' priorities like health, education, and more:

➡️ ourworldindata.org/government-s...

Had a really fun time being able to talk to/vent with two people I love, but professionally, and recorded!

Hopefully useful for anyone trying to understand what "AI agents" are, what they could be, and how they're being hyped. And for anyone who could use a bit of levity in all the AAAAAAH!

As a scientist, I have so many notes on how to expand this study to be much more rigorous. Yet, this approach is well within the norms of what people are actually doing when measuring AI bias in industry.

So: What the heck is Meta measuring? What are they seeing? 9/

bell hooks ngl ate when she wrote, “Sometimes people try to destroy you, precisely because they recognize your power — not because they don’t see it, but because they see it and they don’t want it to exist.”

Always love a good, truly alien-looking robot. This is for inspection, and can unfold to manipulate its environment and open doors.

Check out this new series of online workshops about the Cyberpony Express!

The first batch of survey data from @esa.int's Euclid mission dropped earlier today.

This is a portion of the Deep Field Fornax, a focused look at the region of space in the Fornax constellation.

Based on the press release, I estimate that there are more than TWO MILLION galaxies in this image.

I love e-ink let's do it

Text Shot: The company’s second pillar focuses on addressing resource constraints faced by AI adopters, particularly smaller organizations that can’t afford the computational demands of large-scale models. By supporting more efficient, specialized models that can run on limited resources, Hugging Face argues the U.S. can enable broader participation in the AI ecosystem. “Smaller models that may even be used on edge devices, techniques to reduce computational requirements at inference, and efforts to facilitate mid-scale training for organizations with modest to moderate computational resources all support the development of models that meet the specific needs of their use context,” the submission explains.

Hugging Face submits open-source blueprint, challenging Big Tech in White House AI policy fight https://venturebeat.com/ai/hugging-face-submits-open-source-blueprint-challenging-big-tech-in-white-house-ai-policy-fight/ #AI #HuggingFace

I don't get why this hasn't been baked into all messaging apps at this point. "Mark as unread" is visual noise my brain becomes accustomed to

“Responsible AI” is a bad word at NIST now.