FGVC's not dead!

The 13th Workshop on Fine-Grained Visual Categorization has been accepted to CVPR 2026, in Denver, Colorado!

CALL FOR PAPERS: sites.google.com/view/fgvc13/

From Ecology to Medical Imaging, join us as we tackle the long tail and the limits of visual discrimination! #CVPR2026 #AI

13.01.2026 18:10

We have two open PhD positions at the interface of AI and ecology. Start dates are Sept 2026.

We are looking for candidates with a background in AI/CS, Math, Stats, or Physics who are passionate about solving challenging problems in these domains.

Application deadline is in two weeks.

05.01.2026 14:14

I will be presenting this work from 11 AM to 2 PM at #NeurIPS2025 in San Diego today! Come by poster #2012 to learn more :)

04.12.2025 17:15

Excited to share that our paper Representational Difference Explanations (RDX) was accepted to #NeurIPS2025! 🎉 RDX is a new method for model diffing designed to isolate 🔍 representational differences. 1/7

19.11.2025 16:49

More info:

📄 Paper: arxiv.org/abs/2511.08512

🗂️ Data: huggingface.co/datasets/bos...

💻 Code: github.com/visipedia/cl...

🌐 Project website: cleverbirds-benchmark.github.io

5/5

12.11.2025 15:34

Even strong sequence models struggle here: predicting how recognition evolves is genuinely hard.

CleverBirds sets a new challenge for understanding visual learning dynamics.

4/5

12.11.2025 15:34

Collected in collaboration with #eBird, CleverBirds spans 10K+ species and 40K learners across six years.

It’s one of the largest datasets for visual expertise, tracking how people build recognition ability over time.

3/5

12.11.2025 15:33

Two panels. The left panel shows how humans answer the quiz. The right panel shows how quiz answers are stacked per user to create the knowledge tracing task.

In CleverBirds, ML models have to predict human learning: inferring skills from past answers to anticipate future recognition.

2/5

12.11.2025 15:31

Prof. @tokehoye.bsky.social (Aarhus University) and I have an open PhD position (jointly advised) on biodiversity monitoring with camera trap networks. Deadline: 15-Jan-2026

Please help us share this post among students you know with an interest in Machine Learning and Biodiversity! 🤖🪲🌱

11.11.2025 13:12

Excited to share my first work as a PhD student at EdinburghNLP that I will be presenting at EMNLP!

RQ1: Can we achieve scalable oversight across modalities via debate?

Yes! We show that debating VLMs produce higher-quality answers on reasoning tasks.

01.11.2025 19:29

There are now millions of publicly-available AI models – which one is right for you?

We introduce CODA (#ICCV2025 Highlight!), a method for *active model selection.* CODA selects the best model for your data with any labeling budget, often with as few as 25 labeled examples. 1/

@iccv.bsky.social

13.10.2025 18:00

Interested in doing a PhD in machine learning at the University of Edinburgh starting Sept 2026?

My group works on topics in vision, machine learning, and AI for climate.

For more information and details on how to get in touch, please check out my website:

homepages.inf.ed.ac.uk/omacaod

16.10.2025 09:15

Congratulations to everyone who got their @neuripsconf.bsky.social papers accepted 🎉🎉🎉

At #EurIPS we are looking forward to welcoming presentations of all accepted NeurIPS papers, including a new “Salon des Refusés” track for papers which were rejected due to space constraints!

19.09.2025 09:13

Reminder that the deadlines for submitting papers to the FGVC workshop at #CVPR2025 are coming up soon.

The scope of the workshop is quite broad, e.g. fine-grained learning, multi-modal, human in the loop, etc.

More info here:

sites.google.com/view/fgvc12/...

@cvprconference.bsky.social

01.03.2025 09:48

New working paper with @tobiasbergmann.bsky.social on the deficit-investment trade-off of deficit rules. Comments and feedback are more than welcome! 📩

24.01.2025 15:54

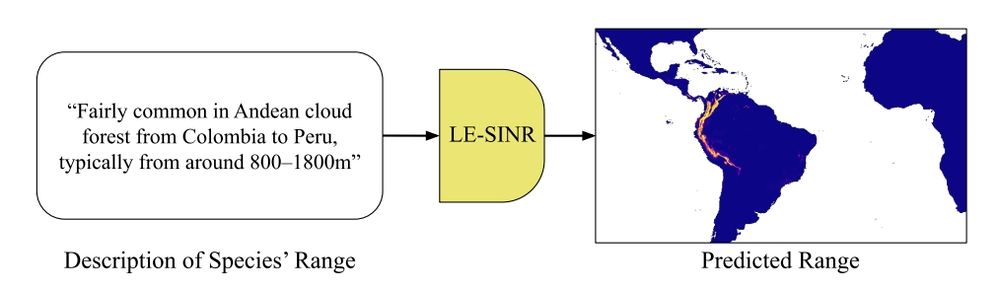

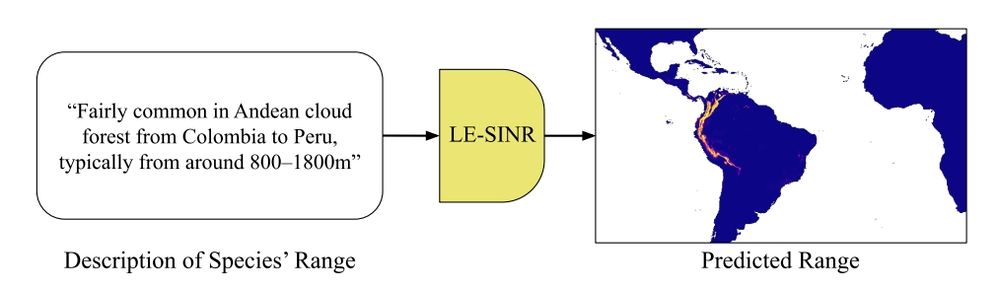

❓How can we predict where a species may be found when observations are limited?

✨Introducing Le-SINR: a text-to-range-map model that enables scientists to produce more accurate range maps with fewer observations.

Thread 🧵

09.12.2024 15:11

🎯 How can we empower scientific discovery in millions of nature photos?

Introducing INQUIRE: a benchmark testing whether AI vision-language models can help scientists find biodiversity patterns, from disease symptoms to rare behaviors, hidden in vast image collections.

Thread👇🧵

06.12.2024 20:28
