1/9 New blog is live! This is part 2 of a series. Last time we looked at the Dunning-Kruger effect; now we are digging into implicit vs. explicit attitudes and the Implicit Association Test. To start, of course, we need a good meme...

haines-lab.com/post/part-2-...

26.01.2026 17:45

👍 42

🔁 15

💬 4

📌 3

This has been a long journey. It started in April 2019 with a real eureka moment. I feel incredibly lucky to have had @mattansb.msbstats.info as a major tailwind and support (and friend!) throughout this work. Years of work with Roi Cohen Kadosh and Avishai Henik, and we're thrilled it's finally out.

10.01.2026 13:14

👍 2

🔁 0

💬 1

📌 0

We also used a numerical Stroop task and found that stronger numerical bias in CLIP predicts larger Stroop effects when numbers must be ignored. This suggests a "tendency layer": varying attentional processes that predetermine how hard it is to ignore irrelevant information.

10.01.2026 13:14

👍 2

🔁 0

💬 1

📌 0

These individual differences predicted math fluency and quantitative reasoning, echoing child SFON research.

10.01.2026 13:14

👍 2

🔁 0

💬 1

📌 0

Hierarchical Bayesian Drift Diffusion modeling lets us combine choice and RT into a single measure, avoiding reliability problems of difference scores. The result: fantastic internal reliability and stable individual differences across all CLIP conditions.
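
To make the "reliability problems of difference scores" concrete, here is a minimal sketch of the classical test theory formula for the reliability of a difference score, assuming both condition scores have equal variance. It is not the paper's drift diffusion model; the function name and the example values are illustrative assumptions.

```python
def difference_score_reliability(rel_x: float, rel_y: float, r_xy: float) -> float:
    """Reliability of D = X - Y under classical test theory.

    Assumes X and Y have equal variances. rel_x and rel_y are the
    reliabilities of the two condition scores, r_xy is their correlation.
    """
    if r_xy >= 1.0:
        raise ValueError("r_xy must be below 1")
    return ((rel_x + rel_y) / 2 - r_xy) / (1 - r_xy)


# Hypothetical numbers: each condition mean is reliable (0.90), but the two
# conditions correlate strongly (0.80), as congruent/incongruent RTs often do.
print(round(difference_score_reliability(0.90, 0.90, 0.80), 2))
```

The more strongly the two conditions correlate, the less reliable their difference becomes, which is one motivation for modeling trial-level choices and RTs jointly instead.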

10.01.2026 13:14

👍 2

🔁 0

💬 1

📌 0

Why this matters: Research on children shows that spontaneous focus on numerosity (SFON) predicts math skills and math development. The CLIP task provides a computerized version that can fit both adults and children! It captures these spontaneous tendencies trial by trial, and works great online!

10.01.2026 13:14

👍 2

🔁 0

💬 1

📌 0

Our paper is finally out in Cognition! 🎉

We introduce the "CLIP task"—a computerized paradigm for measuring numerical bias in adults: when number and physical size both matter, do you spontaneously rely more on numbers or on physical size?

10.01.2026 13:14

👍 13

🔁 5

💬 1

📌 2

OSF

New preprint with @rogierk.bsky.social @paulbuerkner.com - we introduce "relative measurement uncertainty" - a reliability estimation method that's applicable across a broad class of Bayesian measurement models (e.g., generative, computational, and item response theory models): osf.io/h54k8

01.10.2025 08:17

👍 19

🔁 7

💬 2

📌 5

Exploring {ggplot2}’s Geoms and Stats – Stat’s What It’s All About

New blog post!

Ever wonder what geom_histogram is actually doing? How about geom_boxplot?

In celebration of the release of #ggplot2 4.0.0 (ggplot8?), I explore the relationships between the “geoms” and “stats” offered by the core {ggplot2} functions.

#rstats
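
As a rough illustration of the "stat" half of a histogram, the sketch below bins values into equal-width bins and counts them, which is the kind of summary a bar-drawing geom would then display. It is a simplified stand-in written in Python, not ggplot2's own code: the function name is hypothetical, the default of 30 bins is an assumption chosen to echo ggplot2's familiar default, and ggplot2's actual boundary and centering rules differ in detail.

```python
from collections import Counter


def bin_counts(values, bins=30):
    """Count values in equal-width bins spanning [min(values), max(values)].

    Returns a list of (left_edge, right_edge, count) tuples, one per bin.
    """
    lo, hi = min(values), max(values)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal
    counts = Counter()
    for v in values:
        # The maximum value is folded into the last bin
        idx = min(int((v - lo) / width), bins - 1)
        counts[idx] += 1
    return [(lo + i * width, lo + (i + 1) * width, counts.get(i, 0))
            for i in range(bins)]


for left, right, n in bin_counts([1, 2, 2, 3, 3, 3, 4], bins=3):
    print(f"[{left:.1f}, {right:.1f}): {n}")
```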

15.09.2025 19:04

👍 80

🔁 35

💬 1

📌 4

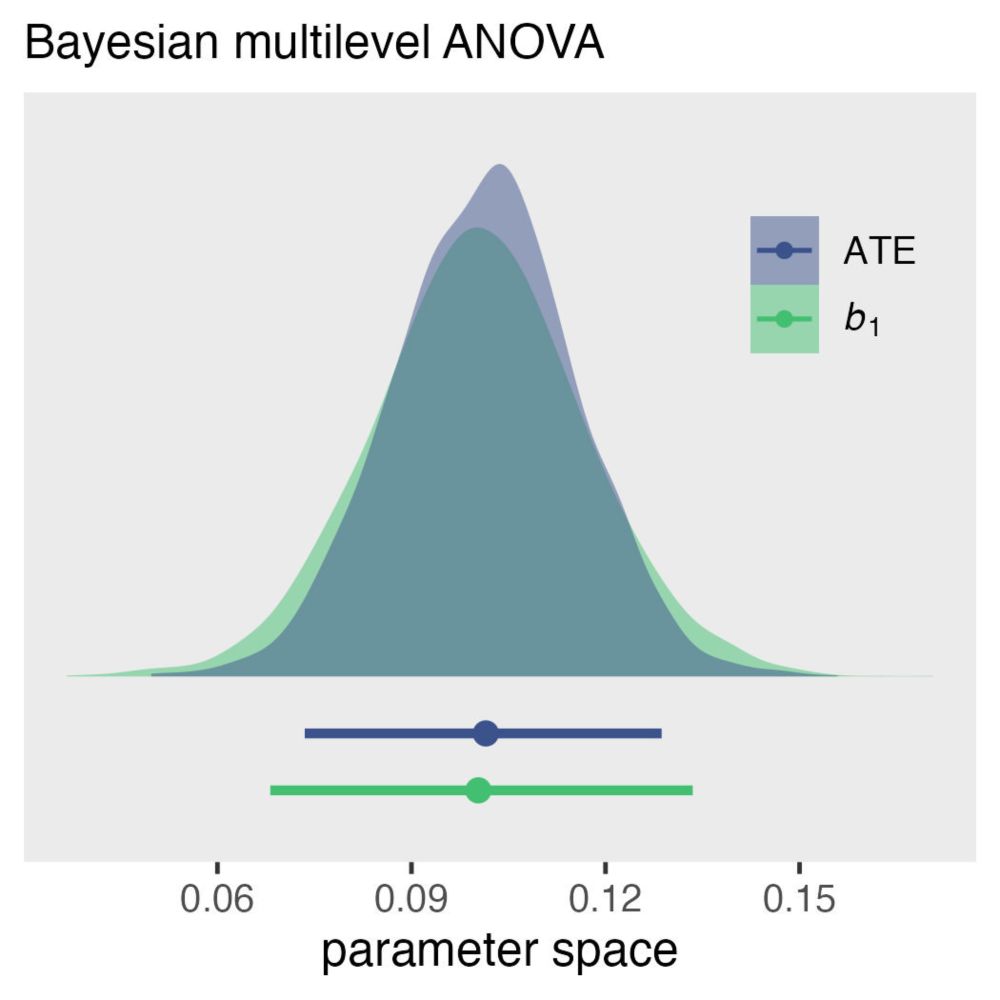

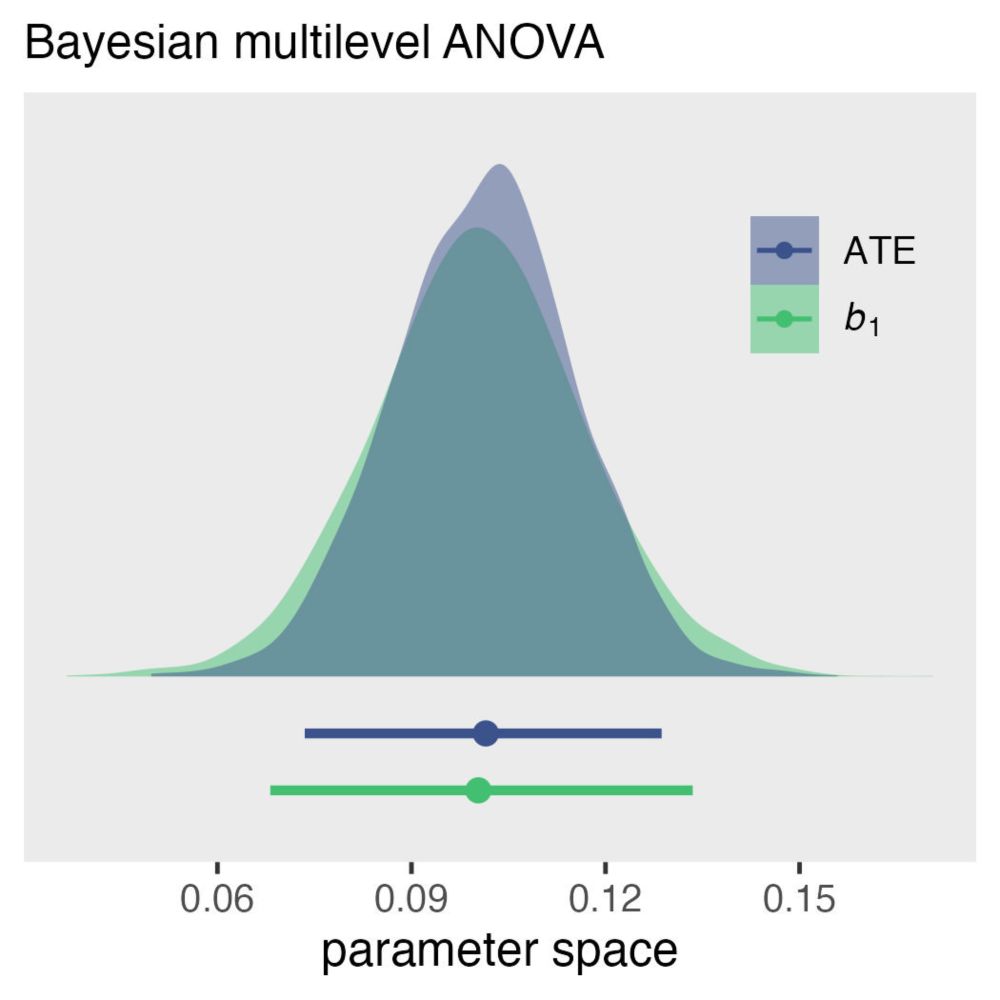

Within-person factorial experiments, log(normal) reaction-time data | A. Solomon Kurz

Causal inference with the GLMM, Part 1

New #rstats blog up!

solomonkurz.netlify.app/blog/2025-07...

This is the first in a new series discussing causal inference with experimental data using multilevel models. My basic case is that g-computation is the way to go.
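
For readers new to g-computation, here is a minimal sketch of its core step: predict every unit's outcome under both treatment levels from a fitted model, then average the difference. It uses a plain logistic model with made-up coefficients, not the multilevel log-normal reaction-time model from the blog post; every name and number below is a hypothetical placeholder.

```python
import math


def inv_logit(x: float) -> float:
    """Map a log-odds value to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


def g_computation_ate(covariates, intercept, b_treat, b_cov):
    """Average treatment effect on the probability scale.

    Each unit is predicted twice, once as treated and once as untreated,
    holding its own covariate value fixed. The ATE is the mean difference.
    """
    p_treated = [inv_logit(intercept + b_treat + b_cov * x) for x in covariates]
    p_control = [inv_logit(intercept + b_cov * x) for x in covariates]
    return sum(p_treated) / len(p_treated) - sum(p_control) / len(p_control)


# Hypothetical fitted coefficients and covariate values
ate = g_computation_ate([-1.0, 0.0, 1.0], intercept=-0.5, b_treat=0.8, b_cov=0.3)
print(f"ATE on the probability scale: {ate:.3f}")
```

In a Bayesian workflow the same computation would be repeated for each posterior draw, giving a full posterior distribution for the effect.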

21.07.2025 14:14

👍 97

🔁 19

💬 7

📌 1

Beyond the Exclamation Points!!! – CogPsych Reserve

Dive in for code, visuals, and a clearer path through the log-odds fog → cogpsychreserve.netlify.app/posts/logist...

#NLP #Kaggle #marginaleffects #BayesianStatistics #DataScience #SignificantTesting

14.07.2025 07:14

👍 5

🔁 1

💬 0

📌 0

2/3

• NLP + PCA to capture toxicity/incoherence

• Cohen’s d ➡️ log-odds priors in one line using #brms

• #marginaleffects → 0–100 % probability shifts you can explain

• Inference with HDI-ROPE. It flags which effects are big enough to matter. Great for researchers and anyone shipping spam filters!
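
Two of the bullets above can be sketched in a few lines of Python. The conversion below uses the common logistic-distribution rule (log-odds = d × π / √3), and the ROPE half-width is taken as d = 0.1 on that scale. Both are widely used conventions that I am assuming here, not choices confirmed by the post, and the function names are illustrative.

```python
import math


def d_to_log_odds(d: float) -> float:
    """Convert Cohen's d to a log odds ratio (logistic-distribution rule)."""
    return d * math.pi / math.sqrt(3)


def hdi(samples, mass=0.95):
    """Narrowest interval containing `mass` of the posterior samples."""
    s = sorted(samples)
    n = len(s)
    k = max(1, math.ceil(mass * n))  # number of samples inside the interval
    start = min(range(n - k + 1), key=lambda i: s[i + k - 1] - s[i])
    return s[start], s[start + k - 1]


def rope_decision(samples, rope_half_width=d_to_log_odds(0.1), mass=0.95):
    """Compare the HDI with a region of practical equivalence around zero."""
    lo, hi = hdi(samples, mass)
    if hi < -rope_half_width or lo > rope_half_width:
        return "outside ROPE"  # effect is large enough to matter
    if lo >= -rope_half_width and hi <= rope_half_width:
        return "inside ROPE"   # effect is practically negligible
    return "undecided"


# A medium effect (d = 0.5) expressed as the mean of a log-odds prior
print(round(d_to_log_odds(0.5), 3))

# Hypothetical posterior draws for one coefficient, evenly spread from 0.5 to 1.5
draws = [i / 100 for i in range(50, 151)]
print(rope_decision(draws))
```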

14.07.2025 07:14

👍 2

🔁 0

💬 1

📌 0

1/3 New post up! 📝 I took the workhorse 🔧 of binary modeling—logistic regression—and gave it a Bayesian tune-up using a Kaggle SMS-spam dataset.

14.07.2025 07:14

👍 19

🔁 6

💬 2

📌 2

Thanks Laura! 🙏 I analyzed vertical-face tasks (6 variants across SOAs) from subjects with mouse responses only. The preprocessing details are in the post's collapsible section 😊. Grateful for your work. DM anytime!

08.03.2025 20:22

👍 3

🔁 0

💬 0

📌 0

Thank you! 😊 While latent correlations are possible in Stan via custom likelihoods (modeling latent Gaussian variables), it's quite involved. For 95% of cases, I recommend the simpler brms approach: model questionnaires as predictors of task effects using condition-by-questionnaire interactions.

08.03.2025 20:05

👍 2

🔁 0

💬 0

📌 0

6/6 Thanks to @solomonkurz.bsky.social for statistical inspiration, @natehaines.bsky.social for works that influenced my approach, and @almogsi.bsky.social & @mattansb.bsky.social for thoughtful feedback!

#BayesianStatistics #ReliabilityAnalysis #CognitiveScience

07.03.2025 09:14

👍 5

🔁 0

💬 0

📌 0

5/6 The implications go beyond this single task. Many measures in psychology (and beyond) might be more reliable than we thought—we need to preserve and properly model the information in trial-level data.

07.03.2025 09:14

👍 3

🔁 0

💬 1

📌 0

4/6 This visualization shows the transformation when the same data is analyzed with trial-level Bayesian methods instead of traditional aggregation:

07.03.2025 09:14

👍 4

🔁 0

💬 1

📌 0

3/6 I implemented two Bayesian approaches in #brms:

@jeffrouder.bsky.social & @juliaha.bsky.social's variance decomposition

@gangchen6.bsky.social's approach

Both show substantially higher reliability than traditional analyses.
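
One way to see why aggregated scores look unreliable is a back-of-the-envelope variance decomposition: true between-person variance in the effect versus trial noise left in each person's condition means. The sketch below is a classical-test-theory style illustration, not either of the cited models, and the millisecond values are hypothetical.

```python
def effect_reliability(sd_true: float, sd_trial: float, trials_per_condition: int) -> float:
    """Expected reliability of an observed two-condition difference score.

    sd_true:  between-person SD of the true effect
    sd_trial: within-person trial-to-trial SD
    The observed difference of two condition means carries sampling
    variance 2 * sd_trial**2 / trials_per_condition.
    """
    signal = sd_true ** 2
    noise = 2 * sd_trial ** 2 / trials_per_condition
    return signal / (signal + noise)


# Hypothetical values: a 25 ms true-effect SD against 200 ms trial noise
for n_trials in (50, 200, 500):
    print(n_trials, round(effect_reliability(25, 200, n_trials), 3))
```

With small true effects and noisy trials, aggregated scores stay unreliable unless trial counts are large. Hierarchical models instead estimate the true-effect variance directly from trial-level data.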

07.03.2025 09:14

👍 3

🔁 0

💬 1

📌 0

2/6 Recent research by @irenexu.bsky.social claimed the emotional dot-probe task lacks reliability for individual differences research. I wanted to see if more sophisticated analysis methods could tell a different story.

07.03.2025 09:14

👍 2

🔁 0

💬 1

📌 0

The Dot-Probe Task is Probably Fine – CogPsych Reserve

1/6 Hello Bluesky! 👋 Excited to join this community and share my new blog. First post: Using Bayesian hierarchical models to rescue "unreliable" cognitive tasks, with the dot-probe task as my case study. cogpsychreserve.netlify.app/posts/dotpro...

07.03.2025 09:14

👍 64

🔁 17

💬 7

📌 8