Brief pitch:

The students are good, you can commute to Clemson from Greenville pretty easily (and Greenville's nice), and other than me the faculty are lovely.

Brief pitch:

The students are good, you can commute to Clemson from Greenville pretty easily (and Greenville's nice), and other than me the faculty are lovely.

We're hiring: 3-year VAP, AOS Phil Law, 3/3 load, application date Mar. 23.

We're also searching for a lecturer who can teach logic and a research ethics-type class. I'll update when that ad is posted.

philjobs.org/job/show/30989

I think about this Tony Benn speech much more than I used to

OutKast, Tribe, Aesop Rock, Saul Williams, Gorrillaz.

If you tell me that that's what an AI predicted, I will not believe you.

I can hear this in William Shatner's voice.

✊

in case you're curious about how angry Minnesota is about ICE, it was -20 today

The new directive asks USDA workers in the agency’s research arm to use Google to check the backgrounds of all foreign nationals collaborating with its scientists.

The names of flagged scientists are being sent to national security experts at the agency, according to records reviewed by ProPublica.

I also enjoyed Outer Worlds.

But also: I enjoyed *Starfield* (well, at least until I did all the wandering I wanted to do and started actually working on the main quest), so I recognize that the bar for me is *very* low.

I feel like Fallout 4 was the point where I came to terms with the fact that I don't actually need these games to be *good* to enjoy them.

I mean, I gave up on that game because I found the stories tiresome regardless of them coming together or not, so take anything I have to say about it with a grain of salt, but also isn't there a "true ending" that does "bring them together"?

I pulled a "say what you will about [blank], but at least it's an ethos" on my students the other day and just moved on knowing none of them would pick up on it.

When it comes to confirmation, what matters for robustness can be boiled down to:

1. Does the hypothesis predict robustness?

2. Do the alternatives predict not robustness?

If you answer yes to both, then you've got confirmation! It's that simple. (2/2)

Another of my papers, "Stability, Robustness Reasoning, and Measuring the Human Contribution to Warming" is now online.

This one gets in the weeds of the last decade-ish of research on the human contribution to warming to motivate a simple philosophical claim ... (1/2)

doi.org/10.1017/psa....

"How do you assert a graph?" now has an issue -- still open access!

onlinelibrary.wiley.com/doi/10.1111/...

So this is obviously almost unbelievably stupid.

But also, prediction: yes it will, it will just only approve those by companies that Trump likes.

www.cnbc.com/2025/08/20/t...

And you can find the code for our R package on my GitHub. This doesn't have all the pretty graphs, but does all the actual calculating you could want for any of the standard tests.

github.com/coreydethier...

If you had any of these questions, you can now find out!

Sam Fletcher, Nada Mohamed, and myself have put together a Shiny app (severity.shinyapps.io/severity/) that illustrates our work, motivates it with examples, and explains the theoretical backing.

Wondering what I've been working on for the last couple years? (Probably not.)

Wondering what philosophers of statistics even do? (I'm betting no.)

Were you thinking to yourself: how would Deborah Mayo's project function outside a Neyman-Pearson setting? (Lol)

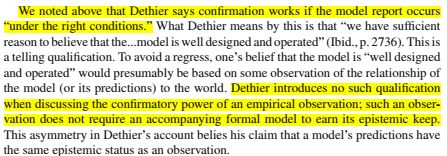

FWIW: the *more* substantive issue is that they take "model report" to refer to "a proposition about what the model entails" (p. 46) while I take it to be a proposition about the model's target.

As such, I think their criticisms simply don't land. But it's possible that I'm mistaken about that.

Ultimately, this doesn't matter much to the argument -- the substantive disagreements are more important.

But it's certainly the kind of thing you'd have hoped that the referees would catch!

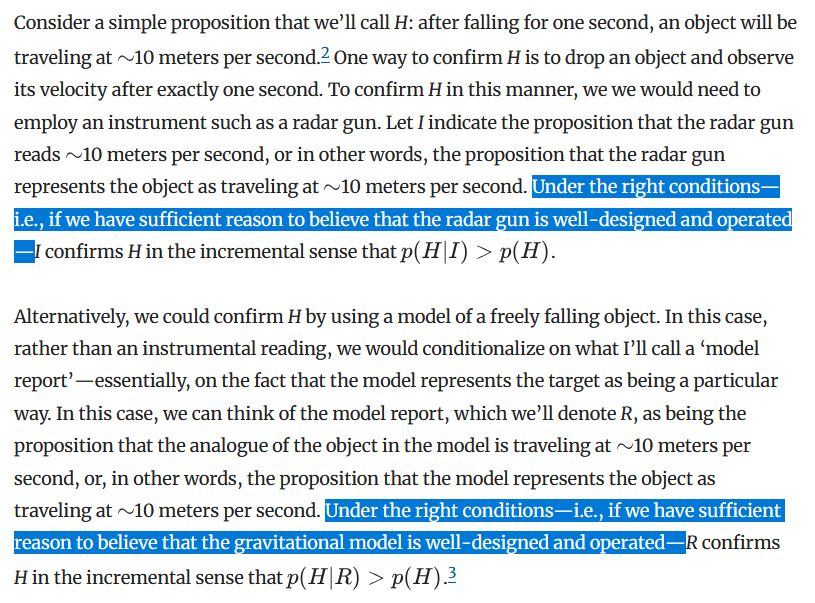

*That* assertion is pretty clearly wrong. I make *exactly* the same qualification in the paragraph immediately preceding the one they cite.

I then explicitly say that the qualifications are the same in the footnote attached to said sentence!

What's more notable is their assertion that I qualify my claim that models provide evidence in a way that I don't qualify the claim that experiments do (the image is from page 46 of their paper).

Brian McLoone, Steven Orzack, and Elliot Sober have a new paper out in which they argue (among other things) that I'm wrong about robustness.

I think *they're* wrong, of course -- indeed, I think I addressed all their arguments already in the paper they cite. Unsurprisingly, they disagree!

So I asked it to write the intro to an ethics paper in my style, since I've never published anything in ethics.

The thesis it came up with?

All injustice is ultimately epistemic injustice.

So now I know what it is like to be roasted by an LLM. (3/3)

I mention this here because ChatGPT -- without being asked -- described my style using exactly those elements that I *aim* for. That was both flattering and slightly worrying.

It then rewrote the intro to one of my published papers. Boring. (2/3)

Recently, I asked ChatGPT to write the intro to a paper in my style, because I curious how good it would be at imitating an author, and the best way to tell that would be by asking it to imitate the author I know best.

(Don't worry, there's a punchline here. 1/3)

People have strong opinions about which *entirely fictional* characters should date.

I mean, I guess that's not in the news, but just agreeing that there are a lot things that people have strong opinions about that are much ... further from relevant than Zionism.

(For those without access to PoS, you can find a pre-print on my website or on the archive: philsci-archive.pitt.edu/23817/)

Also included in the paper are discussions of the connections between classical statistics and epistemology and contrasting views about the goal of statistical theory -- should statisticians be more like engineers or logicians?