A diagram illustrating pointwise scoring with a large language model (LLM). At the top is a text box containing instructions: 'You will see the text of a political advertisement about a candidate. Rate it on a scale ranging from 1 to 9, where 1 indicates a positive view of the candidate and 9 indicates a negative view of the candidate.' Below this is a green text box containing an example ad text: 'Joe Biden is going to eat your grandchildren for dinner.' An arrow points down from this text to an illustration of a computer with 'LLM' displayed on its monitor. Finally, an arrow points from the computer down to the number '9' in large teal text, representing the LLM's scoring output. This diagram demonstrates how an LLM directly assigns a numerical score to text based on given criteria.

LLMs are often used for text annotation, especially in social science. In some cases, this involves placing text items on a scale: e.g., 1 for liberal and 9 for conservative.

There are a few ways to accomplish this task. Which work best? Our new EMNLP paper has some answers🧵

arxiv.org/pdf/2507.00828
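
A minimal sketch of the pointwise setup shown in the diagram: the instructions and one text item go into a single prompt, and the reply is parsed into a number on the 1 to 9 scale. The instruction text comes from the diagram; `build_pointwise_prompt`, `parse_score`, `score_item`, the `ask_llm` callable, and the prompt layout are hypothetical choices for illustration, not the paper's code.

```python
import re

# Instruction text as shown in the diagram.
INSTRUCTIONS = (
    "You will see the text of a political advertisement about a candidate. "
    "Rate it on a scale ranging from 1 to 9, where 1 indicates a positive "
    "view of the candidate and 9 indicates a negative view of the candidate."
)


def build_pointwise_prompt(item_text: str) -> str:
    """Combine the fixed instructions with one text item to score."""
    return f"{INSTRUCTIONS}\n\nText: {item_text}\n\nScore:"


def parse_score(reply: str, low: int = 1, high: int = 9):
    """Return the first integer in the reply that lies on the scale, else None."""
    for match in re.findall(r"\d+", reply):
        value = int(match)
        if low <= value <= high:
            return value
    return None


def score_item(item_text: str, ask_llm):
    """Score one item. `ask_llm` is a hypothetical callable: prompt in, reply out."""
    return parse_score(ask_llm(build_pointwise_prompt(item_text)))
```

Any client that maps a prompt string to a reply string can be passed as `ask_llm`. Returning `None` for unparseable replies keeps off-scale answers from silently entering the data.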

27.10.2025 14:59

👍 27

🔁 8

💬 1

📌 0

Can large language models (LLMs) fairly annotate data on contentious topics?

Our new paper dives into this question—looking at whether LLM-generated labels reflect diverse viewpoints or skew toward majority perspectives. The results are surprisingly nuanced. 🧵

11.07.2025 16:44

👍 17

🔁 4

💬 1

📌 1

📣 FUN UPDATE: We're extending the deadline by 3 days!! Submit to the NLP4Democracy workshop by June 22!

19.06.2025 23:22

👍 6

🔁 2

💬 0

📌 0

Quantifying Narrative Similarity Across Languages - Hannah Waight, Solomon Messing, Anton Shirikov, Margaret E. Roberts, Jonathan Nagler, Jason Greenfield, Megan A. Brown, Kevin Aslett, Joshua A. Tuck...

How can one understand the spread of ideas across text data? This is a key measurement problem in sociological inquiry, from the study of how interest groups sh...

I am thrilled to share a new article in Sociological Methods & Research, “Quantifying Narrative Similarity Across Languages”. My co-first author Sol Messing and our collaborators developed a new approach to measuring “narrative similarity” between texts: journals.sagepub.com/doi/10.1177/...

18.06.2025 15:56

👍 58

🔁 27

💬 3

📌 4

NLP 4 Democracy - COLM 2025

📣 Super excited to organize the first workshop on ✨NLP for Democracy✨ at COLM @colmweb.org!!

Check out our website: sites.google.com/andrew.cmu.e...

Call for submissions (extended abstracts) due June 19, 11:59pm AoE

#COLM2025 #LLMs #NLP #NLProc #ComputationalSocialScience

21.05.2025 16:39

👍 47

🔁 18

💬 1

📌 6

Unidentified men grabbing someone off the street and putting her in a car because she wrote an op-ed. This is as flatly authoritarian as anything we’ve seen in this country in a very long time.

26.03.2025 17:41

👍 36502

🔁 16291

💬 1844

📌 1036

It's well known that politicians take more extreme positions during primaries. In @electoralstudies.bsky.social, we find this shift is much more likely when incumbents in safe seats face a well-funded primary challenger.

🧵👇

authors.elsevier.com/a/1kn5KxRaZn...

17.03.2025 14:12

👍 7

🔁 6

💬 1

📌 1

Re-upping this again as more people read about the Khalil case. More information to come, but nothing in WP and NYT so far contradicts original reporting. Again, it DOES NOT MATTER what you think about him or his cause. Either government is bound by the law for all of us or we're all at their mercy.

10.03.2025 00:16

👍 1722

🔁 487

💬 14

📌 10

Exclusive: NIH to terminate hundreds of active research grants

Studies that touch on LGBT+ health, gender identity and DEI in the biomedical workforce could be terminated, according to documents obtained by Nature.

NEW: The NIH has begun terminating grants for active projects studying gender identity, DEI, environmental justice, and climate change, among other topics.

At least 16 termination letters have already been sent — and hundreds more are coming, people inside NIH tell me.

www.nature.com/articles/d41...

06.03.2025 02:17

👍 1314

🔁 894

💬 39

📌 119

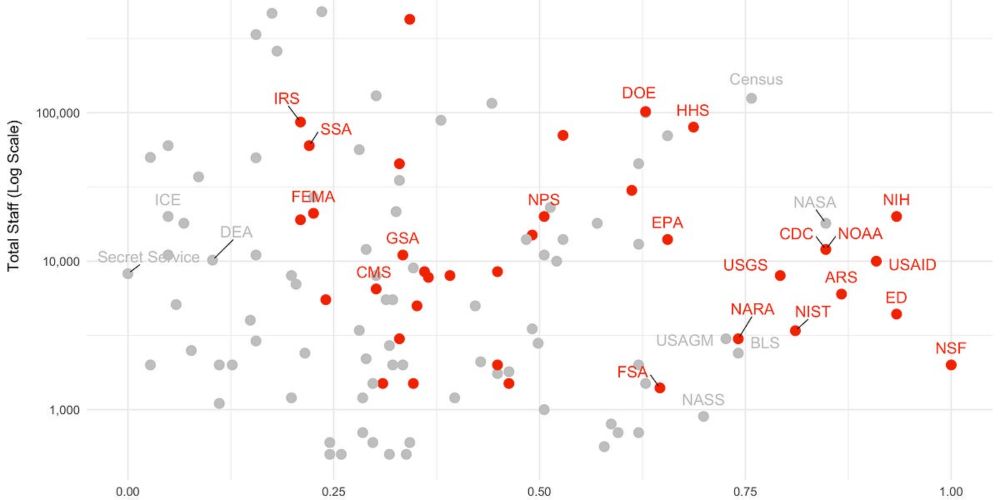

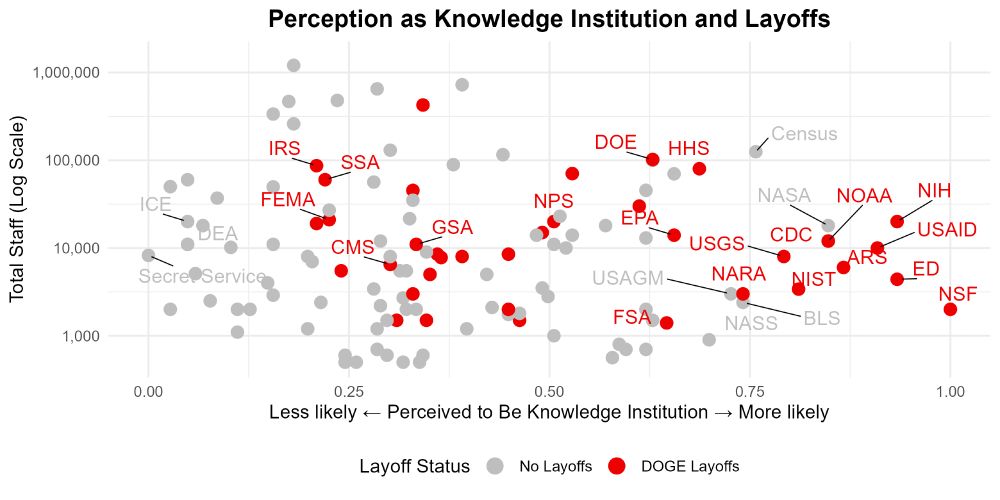

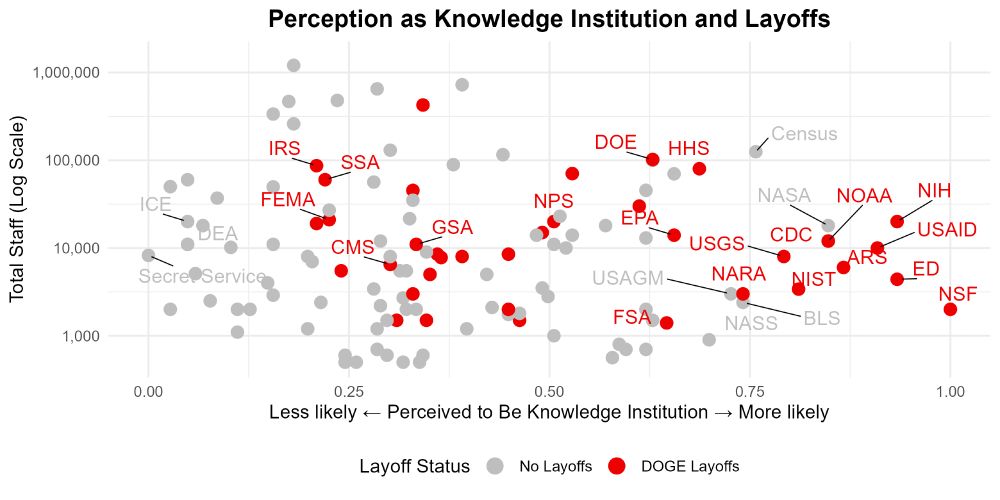

On the 0-1 scale in the graph, the DOJ has a score of 0.44.

06.03.2025 01:25

👍 2

🔁 0

💬 1

📌 0

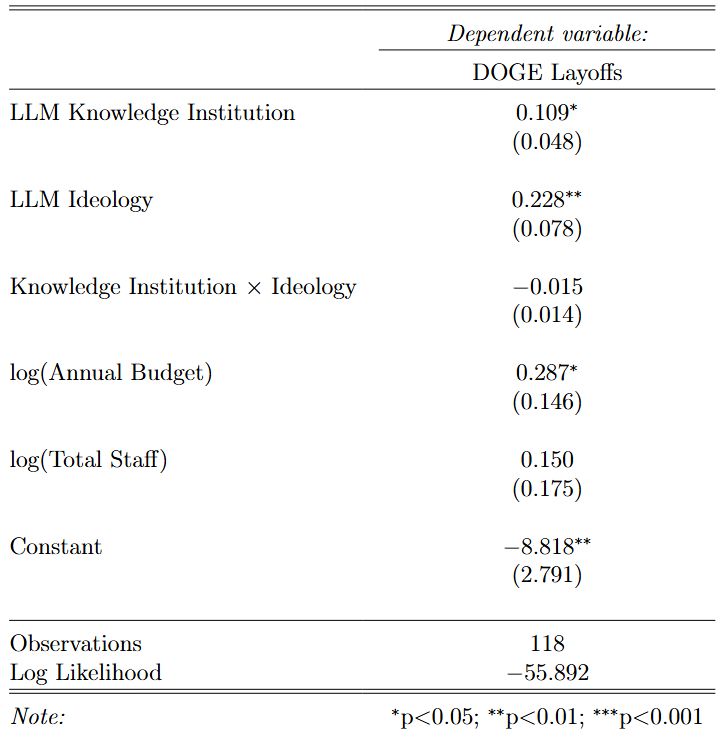

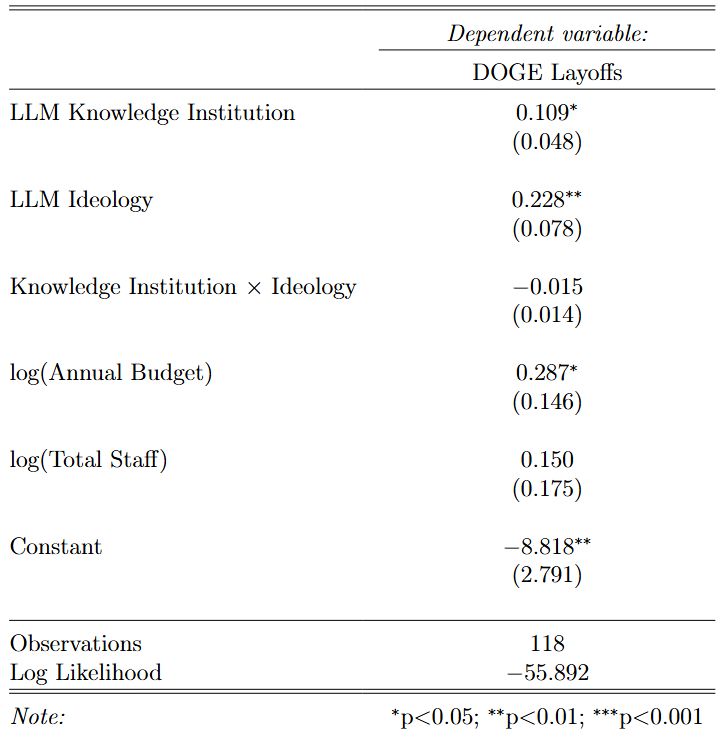

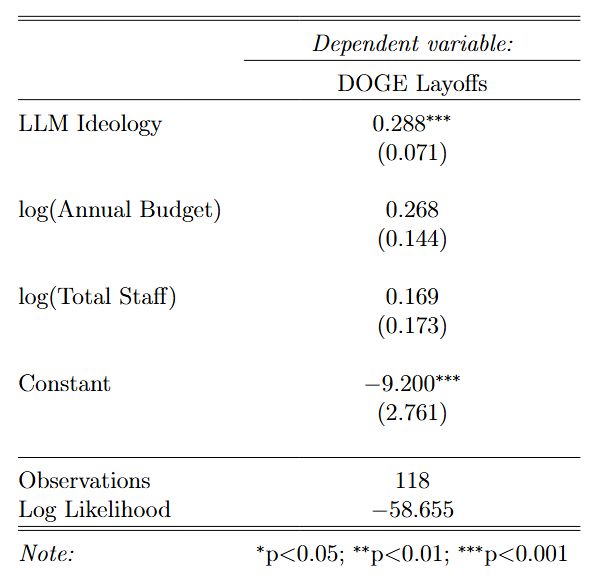

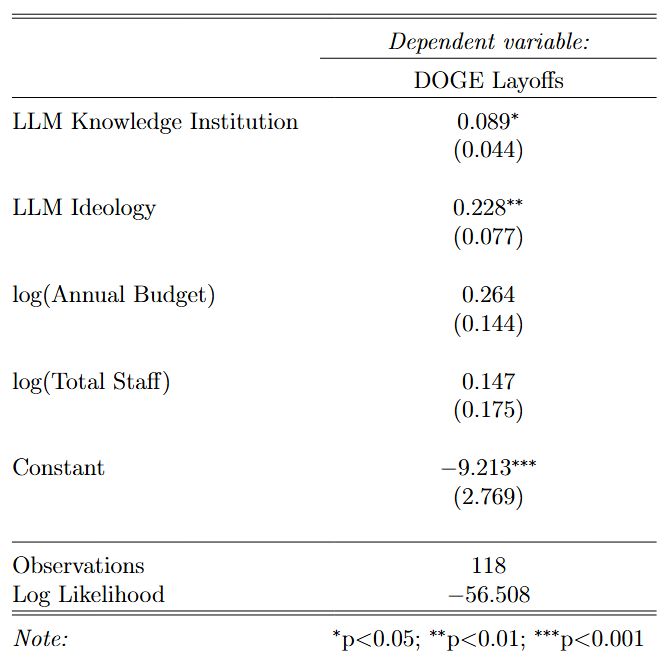

The correlation is 0.52. Here are the logistic regression results with the interaction term. Interestingly, the coefficient is slightly negative, but the p-value is 0.28.

06.03.2025 01:23

👍 1

🔁 0

💬 0

📌 0

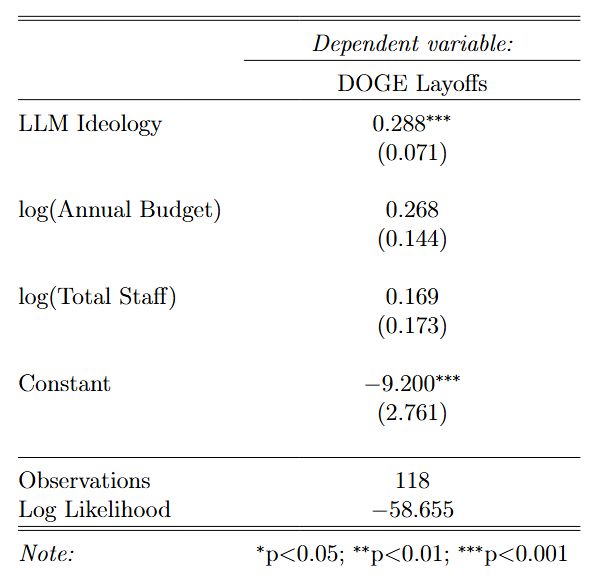

5/ This LLM-based approach also replicates @adambonica.bsky.social's finding that agencies perceived as more liberal are more likely to face DOGE layoffs.

05.03.2025 22:34

👍 10

🔁 0

💬 1

📌 0

4/ The findings suggest that the DOGE layoffs go beyond the perceived ideology of the agency: they appear to be a fight over who gets to wield and distribute knowledge. It would explain why DOGE eliminated 18F, a cost-recoverable division that open sourced solutions to improving govt efficiency.

05.03.2025 22:34

👍 12

🔁 2

💬 1

📌 0

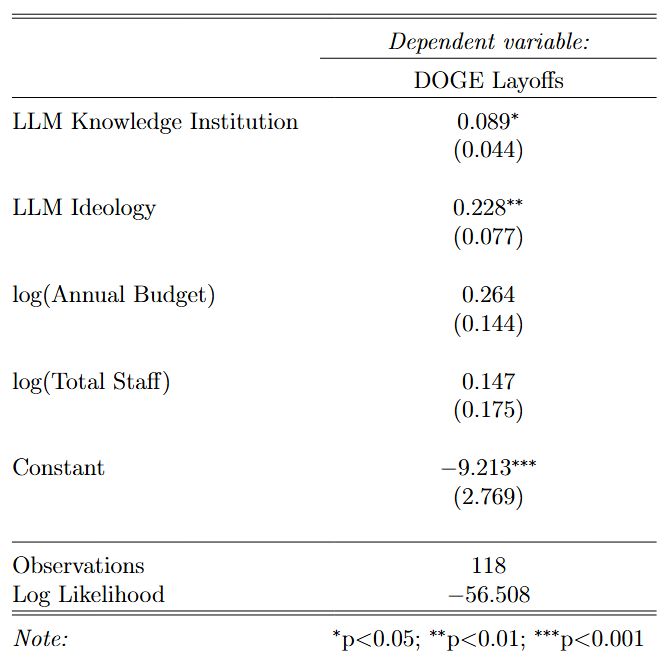

3/ Using a logistic regression, I find that this measure is strongly predictive of DOGE layoffs, even when controlling for ideology, annual budget, and total staff.
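
A rough sketch of what "predictive even when controlling for other variables" means in a logistic regression: the coefficient on the variable of interest is estimated jointly with the controls. Everything here is an assumption for illustration: the data are synthetic (not real agency data), there is one stand-in control rather than ideology, budget, and staff, and the fit uses plain gradient ascent rather than whatever software produced the results in this thread.

```python
import math
import random


def simulate_rows(n=300, seed=0):
    """Synthetic rows only: outcome probability rises with x_knowledge, not x_control."""
    rng = random.Random(seed)
    X, y = [], []
    for _ in range(n):
        x_knowledge = rng.gauss(0, 1)  # stand-in for the knowledge-institution score
        x_control = rng.gauss(0, 1)    # stand-in for a control variable
        p = 1.0 / (1.0 + math.exp(-(-0.5 + 1.5 * x_knowledge)))
        X.append([x_knowledge, x_control])
        y.append(1 if rng.random() < p else 0)
    return X, y


def fit_logistic(X, y, lr=0.5, n_iter=2000):
    """Fit logistic regression by batch gradient ascent on the mean log-likelihood.

    X holds feature rows without an intercept; returns [intercept, *coefficients].
    """
    n, k = len(X), len(X[0])
    beta = [0.0] * (k + 1)
    for _ in range(n_iter):
        grad = [0.0] * (k + 1)
        for row, label in zip(X, y):
            z = beta[0] + sum(b * v for b, v in zip(beta[1:], row))
            err = label - 1.0 / (1.0 + math.exp(-z))
            grad[0] += err
            for j, v in enumerate(row):
                grad[j + 1] += err * v
        beta = [b + lr * g / n for b, g in zip(beta, grad)]
    return beta


X, y = simulate_rows()
intercept, b_knowledge, b_control = fit_logistic(X, y)
```

In this synthetic setup, `b_knowledge` should come out clearly positive while `b_control` stays near zero, which is the pattern a "predictive after controls" claim describes. A real analysis would also report standard errors and p-values.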

05.03.2025 22:34

👍 8

🔁 0

💬 1

📌 0

2/ I use a generative LLM (Llama 3.3 70B Instruct) to make pairwise comparisons between all agencies, prompting the LLM to select the agency more likely to be perceived as a knowledge institution. I then use the Bradley-Terry model to estimate a latent score for each agency along this dimension.
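
A minimal sketch of the second step, turning pairwise choices into latent scores with the Bradley-Terry model. It assumes the LLM's answers have already been collected as `(winner, loser)` pairs; `fit_bradley_terry` is a hypothetical helper that uses the standard minorization-maximization updates, not necessarily the estimator used for this thread, and it requires every item to win at least once.

```python
import math
from collections import defaultdict


def fit_bradley_terry(comparisons, n_iter=500, tol=1e-10):
    """Estimate Bradley-Terry log-strengths from (winner, loser) pairs.

    Returns a dict of log-strengths centered to sum to zero.
    """
    items = sorted({x for pair in comparisons for x in pair})
    wins = defaultdict(int)
    n_pair = defaultdict(int)
    for winner, loser in comparisons:
        wins[winner] += 1
        n_pair[frozenset((winner, loser))] += 1
    if any(wins[i] == 0 for i in items):
        raise ValueError("every item must win at least one comparison")

    strength = {i: 1.0 for i in items}
    for _ in range(n_iter):
        new = {}
        for i in items:
            # MM update: wins divided by expected exposure to each opponent.
            denom = sum(
                n_pair[frozenset((i, j))] / (strength[i] + strength[j])
                for j in items
                if j != i
            )
            new[i] = wins[i] / denom if denom > 0 else strength[i]
        total = sum(new.values())
        new = {i: v * len(items) / total for i, v in new.items()}
        shift = max(abs(new[i] - strength[i]) for i in items)
        strength = new
        if shift < tol:
            break

    mean_log = sum(math.log(v) for v in strength.values()) / len(items)
    return {i: math.log(v) - mean_log for i, v in strength.items()}


# Toy comparisons with placeholder labels (not real agency results).
toy = [("A", "B")] * 3 + [("B", "A")] + [("B", "C")] * 3 + [("C", "B")]
toy += [("A", "C")] * 3 + [("C", "A")]
scores = fit_bradley_terry(toy)
```

In the toy data, `A` wins most often and `C` least, so the fitted scores order the items A, B, C. Only differences between scores are identified, which is why the sketch centers them at zero.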

05.03.2025 22:34

👍 11

🔁 0

💬 1

📌 0

1/ Curtis Yarvin's ideas have been influential on current admin members. One of his core ideas is that knowledge institutions--institutions he believes create, distribute, and legitimize knowledge--have been dominated by progressive messaging and need to be dissolved.

05.03.2025 22:34

👍 17

🔁 3

💬 1

📌 1

Scatterplot showing various U.S. government agencies, with total staff (on a log scale) on the y-axis and likelihood of being perceived as a knowledge institution on the x-axis. Red dots indicate agencies that have experienced DOGE layoffs, while gray dots indicate agencies without layoffs. Agencies like NIH, NSF, CDC, and NOAA appear on the right side (more likely to be perceived as knowledge institutions), while agencies like ICE, DEA, and Secret Service appear on the left side (less likely to be perceived as knowledge institutions).

@adambonica.bsky.social showed ideology predicts which agencies experience DOGE layoffs. But what other factors could be driving this?

Using a generative LLM-derived measure, I find agencies perceived as knowledge institutions are more likely to experience layoffs, even controlling for ideology. 🧵

05.03.2025 22:34

👍 128

🔁 59

💬 6

📌 7