See how eye tracking is bridging the gap between machine metrics and human performance: pupil-labs.com/blog/beyond-...

How do drivers really handle complex situations on the road?

Our latest Research Digest summarizes four recent studies using Neon to decode the cognitive processes of driving, from hidden stress levels during routine maneuvers to how gaze adapts to weather and autonomous driving.

If we didn’t get a chance to speak in person, here’s a video that shows a glimpse of our booth and what we showcased this year.

You can also learn more about Neon here: pupil-labs.com/products/neon

We had a great time meeting so many of you across diverse fields, from clinical neuroscience to animal behaviour, vision restoration, VR research, and more.

That’s a wrap on @sfn.org (Society for Neuroscience) #SfN25!

Four days of conversations, demos, and reconnecting with the neuroscience community. Thank you to everyone who stopped by Booth 435 to explore Neon, discuss your research, or share ideas.

Whether you work with pupillometry, clinical populations, or naturalistic gaze behaviour in real-world or VR setups, we’d love to show you what’s possible with Neon.

#SfN2025

We’re halfway through Society for Neuroscience @sfn.org, and it has been great meeting so many behavioural and cognitive neuroscientists.

If you’re looking for high-grade, flexible eye-tracking tools that adapt to your research, from VR setups to real-world environments, visit us at Booth 435.

The future of neuroscience is in the real world. We're bringing the tools to make it happen.

Find our team at @sfn.org Neuroscience 2025 (Booth 435), San Diego Convention Center, Nov 16-19.

We'll be showing how Neon's real-world eye tracking is opening new frontiers in science.

#SfN25

Discover how eye tracking is revealing a new dimension of social behavior: pupil-labs.com/blog/wearabl...

In our latest research digest, we explore three studies using Neon eye tracking glasses to capture gaze in natural interactions. From infants actively seeking faces to therapists building trust and partners synchronizing blinks, wearable eye tracking uncovers the hidden signals of human connection.

Human gaze is a language without words. It signals attention, conveys emotion, and reveals intent.

It’s a faster, more flexible way to study gaze in sports, classrooms, or any dynamic, real-world environment.

All powered by a user-friendly Gradio (bsky.app/profile/grad...) app in Google Colab. No coding required!

Follow along here: docs.pupil-labs.com/alpha-lab/dy...

Define a dynamic Area of Interest with a single click, and the tool automatically follows it throughout your recording, mapping your gaze to the moving object. No more tedious manual coding or being limited by predefined categories.
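
A minimal sketch of the idea behind dynamic AOI mapping, assuming the segmentation step has already produced one object mask per scene-video frame and each gaze sample has already been matched to a frame. The mask representation and the names `GazeSample`, `gaze_on_aoi`, and `aoi_hit_ratio` are hypothetical, for illustration only, and are not taken from the Alpha Lab tutorial's code: a gaze sample counts as "on" the object when the pixel it falls in is part of that frame's mask.

```python
import math
from dataclasses import dataclass

# Hypothetical representation: each mask is the set of integer (x, y) pixel
# coordinates covered by the tracked object in one scene-video frame.
# In the tutorial's workflow such masks would come from SAM2; here they are
# simply provided as input.
Mask = set[tuple[int, int]]


@dataclass
class GazeSample:
    frame_index: int  # scene-video frame this gaze sample is matched to
    x: float          # gaze position in scene-camera pixels
    y: float


def gaze_on_aoi(sample: GazeSample, masks: dict[int, Mask]) -> bool:
    """True if the gaze point lands inside the object's mask for its frame."""
    mask = masks.get(sample.frame_index)
    if mask is None:  # object not segmented in this frame (e.g. out of view)
        return False
    # Floor the sub-pixel gaze position to the pixel that contains it.
    return (math.floor(sample.x), math.floor(sample.y)) in mask


def aoi_hit_ratio(samples: list[GazeSample], masks: dict[int, Mask]) -> float:
    """Fraction of gaze samples that fall on the moving AOI."""
    if not samples:
        return 0.0
    hits = sum(gaze_on_aoi(s, masks) for s in samples)
    return hits / len(samples)


# Usage: a 2x2 object that shifts one pixel to the right between frames.
masks = {
    0: {(10, 10), (11, 10), (10, 11), (11, 11)},
    1: {(11, 10), (12, 10), (11, 11), (12, 11)},
}
samples = [
    GazeSample(0, 10.4, 10.7),  # inside the frame-0 mask
    GazeSample(1, 10.2, 10.5),  # the object has moved away from this pixel
    GazeSample(1, 12.9, 11.1),  # inside the frame-1 mask
]
print(aoi_hit_ratio(samples, masks))
```

Here two of the three samples land on the object. A set of pixels keeps the sketch dependency-free; with real segmentation output you would more likely index a boolean mask array directly.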

What if you could automatically track gaze on any moving object?

Our new Alpha Lab tutorial makes it possible, introducing a powerful workflow that combines our Neon eye tracker with Segment Anything Model 2 (SAM2) from @metaopensource.bsky.social.

The process is simple: Click. Segment. Track.

Real-time Python Client Update: Now supports streaming live audio from Neon!

Play synced gaze + video + audio, analyze, or transcribe (STT) in real time.

Start listening. Update now:

pip install -U pupil-labs-realtime-api

Learn more: pupil-labs.github.io/pl-realtime-...
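
Gaze, scene video, and audio each arrive with their own timestamps, and keeping them in sync comes down to pairing samples that are closest in time. A minimal sketch of nearest-timestamp matching, assuming all timestamps are in seconds on a shared clock and sorted ascending. `nearest_index` and `match_to_frames` are hypothetical helpers for illustration, not functions from pupil-labs-realtime-api, which may already offer matched streams (check its docs before rolling your own).

```python
from bisect import bisect_left


def nearest_index(timestamps: list[float], t: float) -> int:
    """Index of the entry in sorted, non-empty `timestamps` closest to t."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    before, after = timestamps[i - 1], timestamps[i]
    # Ties go to the earlier sample.
    return i - 1 if t - before <= after - t else i


def match_to_frames(frame_ts: list[float], sample_ts: list[float]) -> list[int]:
    """For each video frame timestamp, the index of the nearest gaze/audio sample."""
    return [nearest_index(sample_ts, t) for t in frame_ts]


# Usage: ~30 Hz frame timestamps against 100 Hz gaze timestamps (seconds).
frame_ts = [0.0, 0.033, 0.066]
gaze_ts = [0.00, 0.01, 0.02, 0.03, 0.04, 0.05, 0.06, 0.07]
print(match_to_frames(frame_ts, gaze_ts))  # → [0, 3, 7]
```

Binary search keeps each lookup logarithmic in the number of samples, which matters when a high-rate stream is matched against every video frame.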

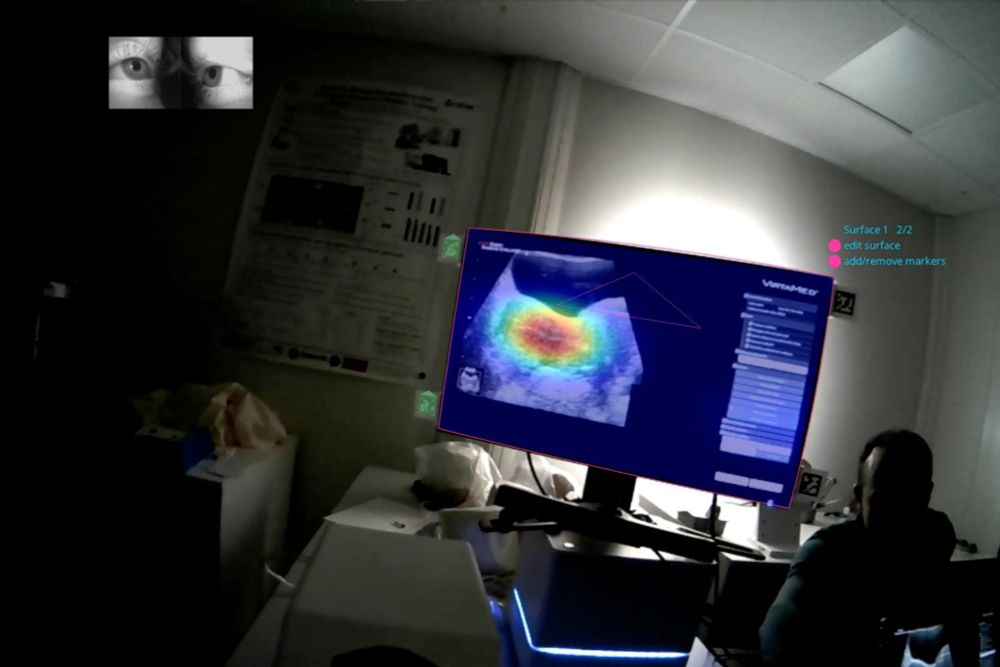

Discover the full study and how eye tracking is reshaping reproductive medicine: pupil-labs.com/blog/unveili...

Their findings reveal distinct gaze patterns and cognitive strategies that differentiate experts from novices — a breakthrough that could transform medical training and improve IVF success rates.

What does the expert eye see during ultrasound-guided embryo transfer?

Researchers from the LTSI at the University of Rennes combined Pupil Labs Neon eye tracking glasses with a high-fidelity simulator to capture how specialists visually navigate this delicate procedure.

(Video credit: Josselin Gautier)

You can now:

• Customize gaze (circle or crosshair) and fixation visualization styles

• Add synchronized eye video overlays (adjust position and transparency of the overlay)

• Draw AOIs, including rectangular ones

Read the full release notes: pupil-labs.com/releases/v7-...

Updates for Pupil Cloud

We’ve rolled out a big update to the Video Renderer and introduced a new drawing tool in the AOI Editor.

The Galea headset is available now at galea.co

This collaboration supports our shared mission of making high-precision, accessible tools that accelerate the future of human-centered research. We can’t wait to see how the community puts it to work.

Key features include:

- Fast, flexible prototyping for multi-modal research

- One streamlined developer interface for eye, brain, and body data

- A fully mobile design for natural, untethered movement

- Quick transitions across VR, XR, 2D screen, and real-world studies

OpenBCI’s new Galea headset is packed with biosensors, including a seamless integration with our Neon eye tracking module. This is a research-grade toolkit that combines the most important human behavior sensors into a single device for researchers in neuroscience, HCI, clinical fields, and beyond.

We’re very excited to announce a new collaboration between Pupil Labs and OpenBCI. Together we are merging eye tracking + brain and body sensing to create one powerful, unified research platform.

If you're here at the conference, come see the recording and chat about eye tracking! We’re in the exhibit area, right by the posters and the coffee ☕

There’s always more than meets the eye at ECVP @ecvp.bsky.social 👀

We took Neon for a spin through "Highlights from the Barn", an exhibition featuring illusions from Dr. Bernd Lingelbach's collection.

We’ve got a full house and are very much looking forward to connecting with everyone!

Can’t attend the workshop? Swing by the conference exhibit space to get a hands-on demo and meet our team.

Meet us in Mainz at the @ecvp.bsky.social (European Conference on Visual Perception).

Next Sunday we will host a hands-on eye tracking workshop with Neon. The focus is on programmatic control: using our Python APIs to stream live data, manage experiments, and analyze results directly with code.

This work is a step forward for sports psychology and performance analysis, offering a path to better training and decision-making.

Dive into our Research Digest to see how they built this "cognitive radar": pupil-labs.com/blog/eye-tra...

Key Findings:

- 🧠 Referees showed higher cognitive load (larger pupil diameter) when observing defensive plays.

- 👀 Coaches' visual focus shifted dramatically depending on whether their team was on offense or defense.
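
Pupil diameter is typically compared across conditions after a baseline correction, because absolute pupil size varies between people and with lighting. The digest does not describe the study's pipeline, so what follows is only a generic sketch of subtractive baseline correction, with hypothetical function names and made-up numbers; real analyses also need to handle blinks, missing samples, and luminance changes.

```python
from statistics import mean


def baseline_corrected_dilation(diameters_mm: list[float], baseline_n: int) -> float:
    """Subtractive baseline correction: mean diameter after the first
    `baseline_n` samples minus the mean of those baseline samples."""
    if not 0 < baseline_n < len(diameters_mm):
        raise ValueError("need at least one baseline and one post-baseline sample")
    baseline = mean(diameters_mm[:baseline_n])
    return mean(diameters_mm[baseline_n:]) - baseline


def mean_dilation_by_condition(trials):
    """trials: iterable of (condition, diameters_mm, baseline_n) tuples.
    Returns {condition: mean baseline-corrected dilation in mm}."""
    per_condition: dict[str, list[float]] = {}
    for condition, diameters_mm, baseline_n in trials:
        per_condition.setdefault(condition, []).append(
            baseline_corrected_dilation(diameters_mm, baseline_n)
        )
    return {c: mean(values) for c, values in per_condition.items()}


# Usage with made-up numbers (not data from the study):
trials = [
    ("defense", [3.0, 3.0, 3.4, 3.6], 2),
    ("defense", [3.1, 3.1, 3.3, 3.5], 2),
    ("offense", [3.0, 3.0, 3.1, 3.1], 2),
]
print(mean_dilation_by_condition(trials))
```

Subtractive correction keeps the result in millimetres, which makes condition differences easy to read; divisive (percent-change) correction is a common alternative.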