Huge congrats!!! 🥳👏🤩🤩

Symposia submissions for #IMRF2026 in Genoa are now open! 🎉

Submit your proposal by 7 February 2026 via the conference website:

imrf2026.sciencesconf.org

We look forward to receiving your contributions!!

Repetition suppression for mirror images of objects and not Braille letters in the ventral visual stream of congenitally blind individuals www.eneuro.org/content/earl... by @katarzynaraczy.bsky.social et al.

#eNeuro | Korczyk, @katarzynaraczy.bsky.social, and Szwed found brain activity linked to reading expertise may be repurposed in blind people https://doi.org/10.1523/ENEURO.0002-25.2025

🎉 We’re thrilled to announce the 24th International Multisensory Research Forum (IMRF 2026)!

📍 Genova, Italy

🗓️ June 24–27, 2026

More details coming soon — stay tuned!

We can’t wait to see you in Genova! 🇮🇹

🚀 We’re hiring - Join our lab 🚀

🔍 Hiring: PhD (75% TV-L) & Postdoc (100% TV-L)

🧠 fMRI, VR, EEG, modelling

We combine a range of cognitive neuroscience methods to study flexible behaviour.

📅 Start: Feb 2026 or later | ⏳ Apply by Nov 3!

More details:

tinyurl.com/ms3a9ajt

#CognitiveNeuroscience

(please repost) If you're looking for a #neuroscience PhD program - and interested in human brain plasticity and reorganization (neuroimaging in people born with blindness, deafness or without hands), my lab is accepting students this cycle. Email me!

Excited to share our (Flo Martinez Addiego @yuqiliu1179.bsky.social @culhamari-lab.bsky.social) paper, now published in PNAS!

www.pnas.org/doi/10.1073/...

(or an easier read on medicine.georgetown.edu/news-release... )

The role of visual experience in haptic spatial perception: evidence from early blind, late blind, and sighted individuals bsd.biomedcentral.com/articles/10.... #haptics

@lenia-amaral.bsky.social

fMRI experimental design and procedure

Decoding semantic sound categories in early visual cortex academic.oup.com/cercor/artic... "semantic and categorical sound information is represented in early visual cortex, potentially used to predict visual input"; #neuroscience

It's a wrap!

We are happy to bring IMRF 2025 to a close, but also sad to say goodbye to so many lovely, smart people! (or at least "until next year").

@lenastroh.bsky.social, @paulmatusz.bsky.social, @nikaradziun.bsky.social, Lénia Amaral, Rashi Pant

What a fantastic time at IMRF! 🎉

Huge thanks to the brilliant speakers for making our symposium on “Blindness as a Window into Brain Organisation” a big success! Big thanks to the organisers and the scientific community for this stellar event! Great to see so many friends and colleagues! #IMRF2025

Symposia 3 and 4, on what blindness reveals about brain organisation (Raczy, Stroh) and on metacognition in multisensory decision-making (Noppeney), are on now. Such interesting topics and talks! 🤩 #IMRF2025

Today @nikaradziun.bsky.social gave a fantastic talk on cardiac interoception processing in the blind as part of the symposium "Blindness as a window into fundamental principles of brain organisation" #IMRF2025 @imrf.bsky.social

Thrilled to be attending the #IMRF2025 @imrf.bsky.social in beautiful Durham this week! I'll be presenting in Symposium 3 on Wednesday at 11:00, kindly organized by @katarzynaraczy.bsky.social and @lenastroh.bsky.social. Looking forward to the discussions ahead! 🧠

IMRF folks, we are ready for you!

We cannot wait to see you all tomorrow for the opening (1.30pm) or the workshops (9am).

Safe travels & looking forward to catching up tomorrow

Your organising team

imrf2025.sciencesconf.org

Got any job openings/PhD positions in your #multisensory research labs? Let us know by tagging us - we are happy to share them with the community!

New to IMRF? Or just not sure what to do on the evening before the conference? 🙌

Come down to the *Head of Steam* on Monday evening, 14th July, at 8 pm to meet new and old friends!

Look out for people wearing IMRF t-shirts! 👕

maps.app.goo.gl/T6hcD9t5JtaU...

How does the brain integrate artificial body extensions? Using a custom-built finger-extending exoskeleton, we show that wearable augmentations are quickly integrated into body representation, with proprioceptive space adapting to the device’s structure and function.

www.biorxiv.org/content/10.1...

There are still ~2 weeks left to sign up for one of our exciting pre-conference methods workshops:

imrf2025.sciencesconf.org/resource/pag...

How is high-level visual cortex organized?

In a new preprint with @martinhebart.bsky.social & @kathadobs.bsky.social, we show that category-selective areas encode a rich, multidimensional feature space 🌈

www.biorxiv.org/content/10.1...

#neuroskyence

🧵 1/n

I'm particularly happy to see this preprint out! Lenny compellingly demonstrates, in a data-driven way, a coding scheme unifying distributed dimensions and category selectivity. The idea: higher visual cortex comprises many partially overlapping tuning maps that include, but go beyond, category tuning.

If you use PsychoPy we would be very grateful for 5-15 mins of your time to complete this survey 🙏🏼

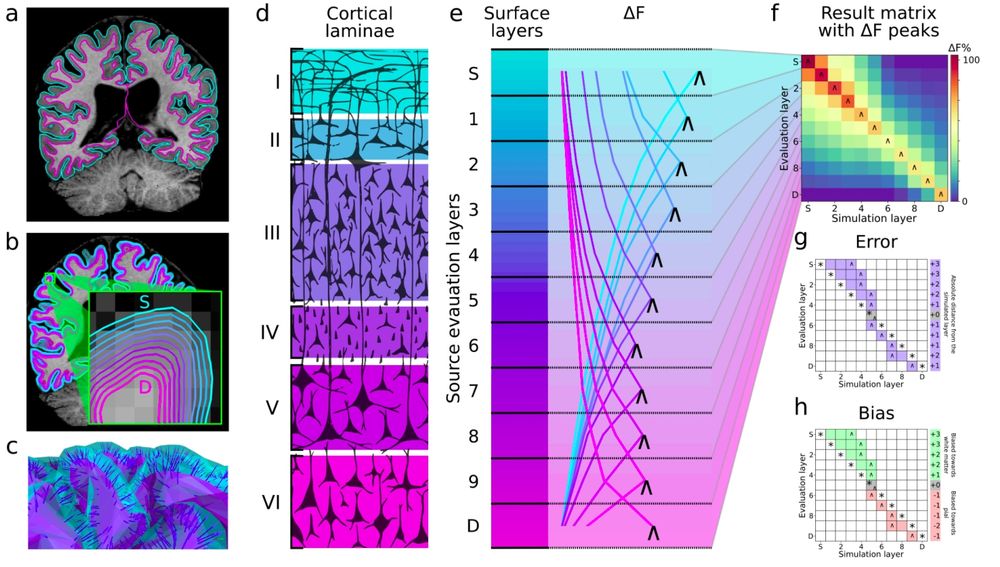

Overview of the simulation strategy and analysis. a) Pial and white matter boundary surfaces are extracted from anatomical MRI volumes. b) Intermediate equidistant surfaces are generated between the pial and white matter surfaces (labeled superficial (S) and deep (D), respectively). c) Surfaces are downsampled together, maintaining vertex correspondence across layers. Dipole orientations are constrained using vectors linking corresponding vertices (link vectors). d) The thickness of cortical laminae varies across the cortical depth (70–72), which is evenly sampled by the equidistant source surface layers. e) Each colored line represents the model evidence (relative to the worst model, ΔF) over source layer models for a signal simulated at a particular layer (the simulated layer is indicated by the line color). The source layer model with the maximal ΔF is indicated by "˄". f) Result matrix summarizing ΔF across simulated source locations, with peak relative model evidence marked with "˄". g) Error is calculated from the result matrix as the absolute distance, in mm or layers, from the simulated source (*) to the peak ΔF (˄). h) Bias is calculated as the position of the peak ΔF (˄) relative to the simulated source (*), in layers or mm.
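The error and bias definitions in panels f to h can be sketched in a few lines of NumPy. This is a minimal illustration under assumptions the caption does not state: the result matrix is stored with simulated layers as rows and source layer models as columns, both indexed in the same depth order, and conversion to mm uses a single hypothetical `layer_spacing_mm` value (in real cortex the spacing between equidistant surfaces varies with local thickness). The function name `layer_error_and_bias` is hypothetical and not taken from the authors' code.

```python
import numpy as np


def layer_error_and_bias(delta_f, layer_spacing_mm=None):
    """Per-simulated-layer error and bias from a ΔF result matrix.

    Assumed layout (hypothetical): delta_f[i, j] is the model evidence of
    source layer model j, relative to the worst model, for a signal
    simulated at layer i. Rows and columns share the same layer indexing.
    """
    delta_f = np.asarray(delta_f, dtype=float)
    simulated = np.arange(delta_f.shape[0])  # simulated source layer (*)
    peak = np.argmax(delta_f, axis=1)        # model with maximal ΔF (˄)
    bias = peak - simulated                  # signed offset, in layers
    error = np.abs(bias)                     # absolute distance, in layers
    if layer_spacing_mm is not None:
        # Assumes uniform spacing between adjacent layers (an approximation).
        return error * layer_spacing_mm, bias * layer_spacing_mm
    return error, bias


# Toy 3-layer result matrix (made-up numbers, for illustration only).
toy = [[5, 2, 0],
       [1, 4, 3],
       [0, 6, 2]]
err, b = layer_error_and_bias(toy)
print(err, b)
```

Here error is simply the magnitude of bias; keeping the signed bias separately shows whether localization errors are systematically pushed toward deeper or more superficial layers, which depends on the (assumed) ordering of the layer indices.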

🚨🚨🚨PREPRINT ALERT🚨🚨🚨

Neural dynamics across cortical layers are key to brain computations - but non-invasively, we've been limited to rough "deep vs. superficial" distinctions. What if we told you that it is possible to achieve full (TRUE!) laminar (I, II, III, IV, V, VI) precision with MEG?!

Happy Friday Multisensory pals,

the talk/poster session schedule for IMRF 2025 is now available on our website - check it out here: imrf2025.sciencesconf.org/resource/pag...

✌️👁️👅👂👃🦇🦾🦻⚖️

New paper! We show that the written word-selective ventral occipito-temporal region, the superior temporal sulcus (STS), and orofacial sensorimotor (SM) regions encode phonological information from auditory and visual speech, but with multisensory abstraction only in STS and SM. 💬 🧠

www.jneurosci.org/content/earl...

Phonological representations of auditory & visual speech in the occipito-temporal cortex and beyond www.jneurosci.org/content/earl... "studies suggested that occipital and temporal regions encode both auditory and visual speech features but their location and nature remain unclear", hence this fMRI study