Photo from ground level of a small pond. Many frogs can be seen in the water.

Morning

#PondLife #Sheffield #Frogs

@tomstafford.mastodon.online.ap.brid.gy

"A vast confusion of vows, wishes, actions, edicts, petitions, lawsuits, pleas, laws, proclamations, complaints, grievances, are daily brought to our ears. " [bridged from https://mastodon.online/@tomstafford on the fediverse by https://fed.brid.gy/ ]

Pair with Kevin Munger:

https://kevinmunger.substack.com/p/things-will-have-to-change

"So, yes, the current situation with journals publishing static pdfs is indefensibly antiquated. But the current academic polyarchy is actually quite robust; the sluggishness, the institutional conservativism […]

Matt Grawitch sketches what a peer review system which embraces AI would look like

https://mattgrawitch.substack.com/p/no-ai-was-used-in-the-writing-of

"Why the academic obsession with human-only peer review protects bias, inconsistency, and anonymous reviewer soapboxes"

Here's @davekarpf lucid on AI and research.

AI kills the paper and good riddance

https://davekarpf.beehiiv.com/p/can-ai-replace-social-science-researchers

Getting a complex revise and resubmit is like getting assigned to a murder trial. You call work: "Sorry, I'm out for the rest of the year"

Reviewing should be like jury service. You have a chance of being randomly selected, then the funder or journal calls up your institution and they have to give you two weeks off, or whatever, and you bash out as many reviews as you can in that time

#AcademicChatter

A large-scale randomized study of large language model feedback in peer review: www.nature.com/articles/s42...

RCT w/ 20k reviews shows that 27% of reviewers who received feedback from AI updated their reviews, and blinded eval confirmed revised feedback was more informative.

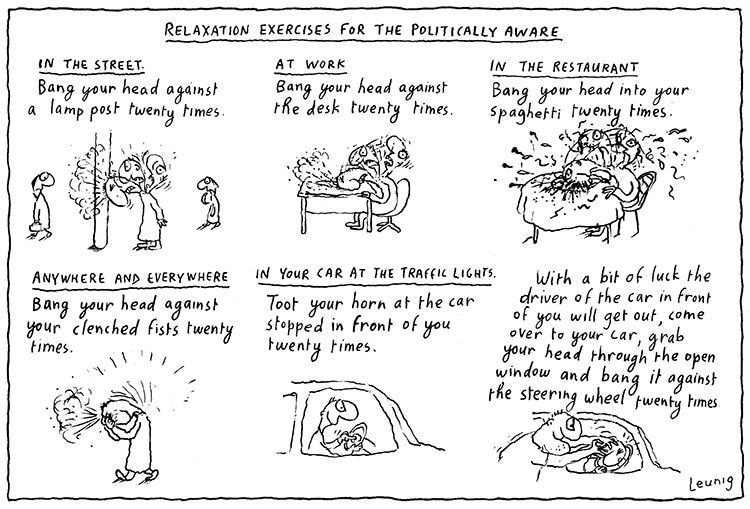

Cartoon: a chaotic mess

Here's the plan

(Michael Leunig, https://www.leunig.com.au/)

@drjennings.bsky.social alternatively

@drjennings.bsky.social

![A table of 18 types of "Disagreement Strategy," with a tag for each strategy in square brackets next to its name, and a text description of each strategy. The strategies, which are arranged in two columns with green, yellow, orange, and red color bars, include Complex Counter Argument [CCA], Dismantle [DIS], Softened Counter Argument [SCA], Regular Counter Argument [RCA], Critical Question [CQ], Invitation For Cooperation [IFC], Playing On Emotions [POE], Joking [JOK], Reasoned Direct Denial [RDD], Proposing Alternative [PRA], Deafening Silence [DES], Agree to Disagree [ATD], Breakdown of Dialogicity [BOD], Unreasoned Direct Denial [UDD], Ordering [ORD], Irrelevancy Claims [IRC], Ironic Echoing [IRE], and Blatant or Aggressive Denial [BAD].](https://cdn.bsky.app/img/feed_thumbnail/plain/did:plc:n2yhxn2fcj4ccyl6qkim43zh/bafkreifsilajg4eg55gywqky74a3qg4sxe6gj5g2hcvsb754tghldyccby@jpeg)

The Hierarchical Taxonomy of Disagreement Strategies (HiTODS)

https://doi.org/10.1016/j.edurev.2026.100769

What's a multiverse good for anyway?

https://osf.io/preprints/psyarxiv/37g29_v1

"We discuss various ways in which a multiverse may be employed – as a tool for reflection and critique, as a persuasive tool, as a serious inferential tool ...it fails as a persuasive tool when researchers disagree […]

Hyperactive Minority Alter the Stability of Community Notes

https://arxiv.org/abs/2602.08970

"[We] conduct counterfactual simulations that modify the display status of notes by varying the pool of raters. Our results reveal that the system is structurally unstable: the emergence and visibility […]

Box plot showing how different factors contribute to the variance of treatment t-statistics across outcomes. Title: Distribution of $\eta^2$ components across outcomes (t-statistics). Y-axis: $\eta^2$ (eta-squared), the proportion of variance explained, ranging from 0.00 to 1.00. X-axis: the variance split into four components (Outlier, Missing, Transform, and Residual), shown in order from largest to smallest.

Results from Randomized Controlled Trials are Highly Sensitive to Data Preprocessing Decisions: A Multiverse Analysis of 97 Outcomes

https://osf.io/preprints/metaarxiv/kbgc2_v2

This is nice: take open data from RCTs, and show how defensible preprocessing […]

Of note

https://minutes.substack.com/p/rented-virtue

"The work that lasts from this era will be no different. The companies that endure, the technologies that serve rather than consume, the institutions that hold their shape across generations when the founders are dead and the capital is […]

Sound the nerd victory klaxon!

Tax nerd throws life savings at prediction markets, after realising he could take the opposite side of bets by Musk fans. DOGE, inevitably, fails to decrease year-on-year federal spending. He wins big […]

Following up on this, I've written a longer piece for the new @RoRInstitute #Metascience substack

https://researchonresearchinstitute.substack.com/p/the-point-of-no-return

My minimal claim is that, contra Schweiger, it is very hard to definitively claim any scheme a waste of time and money […]

New newsletter!

https://tomstafford.substack.com/p/specifically-right-generally-wrong

on reactions I had to the most controversial piece I've written for a while

Photo of a book, White Moss by Anna Nerkagi. A silhouetted figure rides a sleigh in the Arctic tundra.

Recommend

@drjennings.bsky.social sure to be an attractive prospect for billionaire philanthropic dollar "For too long research has been held back by the inefficiency of having to conduct ethical studies..something something disruption ...something something red tape"

I've just had an idea for a crazily unethical experiment:

- pick ~20 minor celebrities

- randomise into two halves

- half you leave alone

- half, you buy a bot army which responds with enthusiastic endorsement whenever they say anything edgy, conspiratorial or political on social media

- come back […]

Life is pain, Highness.

#PrincessBride

@jamesjefferies organising is often a thankless task. But you make the world go round

LLMs should be a private cognitive tool, like a calculator, which is not currently possible under our existing model of corporate AI. Crucially, they do not think and have no agency, again in the same way as a calculator

Some useful thinking out loud from @philipncohen on developing moderation rules to cope with AI generated content submitted to SocArxiv

https://socopen.org/2026/02/22/where-should-socarxiv-draw-the-ai-line/

New substack from @RoRInstitute

https://researchonresearchinstitute.substack.com

#MetaScience

A heatmap with various model names for each row (Gemini Pro 3, Gemini Flash 3 etc etc on to Claude Opus 4.6) and most of the rows are coloured dark or light green (which is good)

Same Prompt, Different Outcomes: Evaluating the Reproducibility of Data Analysis by LLMs

https://arxiv.org/abs/2602.14349

Eyeballing figure 1, the ability and consistency of LLMs for data analysis looks ... pretty good. Better than humans?

Metascience seminar 1: Improve our inferences from observational studies

https://events.humanitix.com/metascience-seminar-1-harrison-hansford

The event is free for all.

Date and time: March 12 at 11AM (GMT + 11; Sydney, Melbourne, Canberra time)

Speaker: Harrison Hansford

#MetaScience […]

"Since homeopathy contradicts established scientific principles, doubts about the trial’s validity quickly emerged."

I feel like the homeopathy-reproducibility beat is a bit like shooting fish in a barrel https://link.springer.com/article/10.1186/s41073-026-00191-5