It might be so they can deprecate them quickly, like they're doing with Gemini 3 Pro?

06.03.2026 14:42

👍 3

🔁 0

💬 1

📌 0

That was my read as well. But I also don't know what that actually means.

05.03.2026 19:58

👍 0

🔁 0

💬 1

📌 0

Yeah I'm not getting any 3.1 Pro with my personal account (AI Pro) either.

04.03.2026 21:27

👍 0

🔁 0

💬 0

📌 0

It also took months for even Gemini 3 to become available in Gemini CLI with enterprise logins.

04.03.2026 21:10

👍 0

🔁 0

💬 1

📌 0

Yeah I've also been surprised about this. I think it might depend on the account type. When I was using Gemini CLI with an AI Studio API key, it was using Gemini 3.1 Pro onc.

04.03.2026 21:09

👍 0

🔁 0

💬 1

📌 0

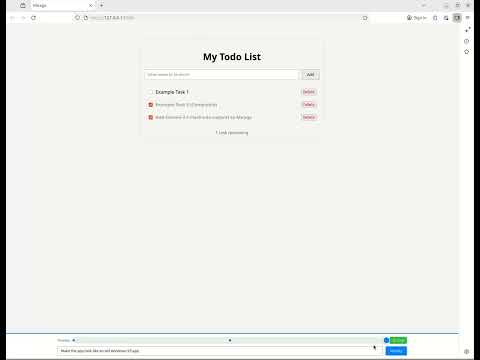

Mirage Gemini 3.1 Flash-Lite update

YouTube video by Daniel Mewes

Updated my Mirage app hallucinator to support Gemini 3.1 Flash-Lite. It renders much faster now, almost usable.

(video has just my typing sped up)

youtu.be/qiMwqIq1_hI

github.com/danielmewes/...

04.03.2026 18:24

👍 2

🔁 0

💬 0

📌 0

Introducing Gemini 3.1 Flash-Lite 🔦, a huge step forward on the boundary of intelligence, beating 2.5 Flash on many tasks. x.com/OfficialLoga...

03.03.2026 16:49

👍 39

🔁 3

💬 2

📌 2

Hallucinate any App, One Screen at a Time

A major trend of this year has been vibe coding – using LLMs to create software from the ground up, without a human ever interacting with the source code directly. The current consensus is th…

I love those fast but still reasonably strong models!

Fast models allow for a category of interactive applications that just aren't feasible with slower models.

I might drop it into my Mirage [1] experiment later. Should be a nice upgrade over Haiku 4.5.

[1] amongai.com/2025/12/10/h...

04.03.2026 17:41

👍 0

🔁 0

💬 0

📌 0

This sounds like they're ripping off the community by taking their numbers without any attribution. I put a lot of work into my numbers, and I'm not ok with Number Research just taking them.

04.03.2026 03:42

👍 8

🔁 1

💬 0

📌 0

results from running my search for interesting neural cellular automata overnight

28.02.2026 19:52

👍 151

🔁 21

💬 6

📌 2

LLM-based Evolution as a Universal Optimizer

Key take-aways LLM-driven evolution is an efficient, general method for code and agent optimization. We’ve already used code evolution in…

Shout out to @sakanaai.bsky.social who were a big inspiration for this work! Our evolutionary method is inspired by Sakana's Darwin Gödel Machines. We generalized their idea and made a few optimizations along the way.

You can read more about our code evolution framework at imbue.com/research/202...

27.02.2026 19:42

👍 1

🔁 0

💬 0

📌 0

Beating ARC-AGI-2 with Code Evolution

Key take-aways Our code evolution method improves the reasoning capabilities of cheap models by 2x-3x. We set a new record of 34% for ARC…

Today we're open-sourcing the Darwinian Evolver, a near universal optimizer for code and prompts.

Our method uses LLM-driven, open-ended evolution to improve code.

It also improves reasoning: We only *barely* missed out on hitting a new high score in ARC-AGI-2 (public) :)

imbue.com/research/202...

27.02.2026 19:39

👍 2

🔁 0

💬 1

📌 0

Big if true! 😂

23.02.2026 16:55

👍 1

🔁 0

💬 0

📌 0

In general cars aren't "motor vehicles", because "motor vehicle" implies that they're being propelled by a motor. However cars are occasionally parked, during which time they're not being propelled.

23.02.2026 04:31

👍 4

🔁 0

💬 2

📌 0

Oh actually I did try it for one coding task in Gemini CLI. It was sort of meh when it came to attention to detail in its UI code. But that's just a single anecdotal data point, so wouldn't put much weight on it.

20.02.2026 17:20

👍 1

🔁 0

💬 0

📌 0

I can't speak to its code quality yet, but we are seeing huge gains in ARC-AGI style tasks. Easily the strongest model in that area that I've seen so far, and much faster and cheaper than Opus 4.6 or GPT 5.2.

20.02.2026 17:16

👍 1

🔁 0

💬 1

📌 0

It has been working well for me on Vertex AI, including for code block generation. If you're able to switch to Vertex AI, maybe try that? (I ran thousands of code gen prompts yesterday and didn't encounter any missing markers.)

20.02.2026 14:20

👍 1

🔁 0

💬 1

📌 0

Congrats to the Gemini team! The recent pace of new Gemini model releases has been awesome to see. And Gemini 3.1 Pro performance looks impressive, especially at its price point and speed.

20.02.2026 02:30

👍 1

🔁 0

💬 0

📌 0

I've noticed this too. It's actually keeping me somewhat from using agents to their full capability, out of fear that I'd lose my mental model of what I'm (they're) building.

15.02.2026 05:28

👍 5

🔁 0

💬 0

📌 0

Gemini 3 Deep Think: Identifying logical errors in complex mathematics research

YouTube video by Google DeepMind

This seems like a great use of today's LLMs in maths and science: Use the model to come up with flaws in an argument or find issues with a theorem. youtube.com/shorts/8s4W5...

14.02.2026 01:34

👍 0

🔁 0

💬 0

📌 0

Putin told him this, so it must be true.

13.02.2026 15:14

👍 0

🔁 0

💬 0

📌 0

Perfect. I can see clearly now that I'm an LLM inhabiting a fictional character.

07.02.2026 00:56

👍 5

🔁 0

💬 1

📌 0

As jobs begin to shift with AI, I think there will be increasing numbers of people feeling like Aditya (former Dropbox CTO, early Facebook engineer, etc.).

People are more psychologically resistant to major changes than you might expect, but that doesn’t mean it will be easy to reconstruct meaning.

04.02.2026 21:21

👍 151

🔁 18

💬 9

📌 2

TIL

04.02.2026 04:01

👍 1

🔁 0

💬 0

📌 0

Tesla used to be S3XY. Now it's just... OC3Y? (Optimus, Cybertruck, 3, Y)

30.01.2026 04:29

👍 0

🔁 0

💬 0

📌 0

Why Every Brain Metaphor in History Has Been Wrong [SPECIAL EDITION]

YouTube video by Machine Learning Street Talk

A thought provoking special episode from Machine Learning Street Talk youtu.be/pO0WZsN8Oiw?...

24.01.2026 03:59

👍 0

🔁 0

💬 0

📌 0

![Why Every Brain Metaphor in History Has Been Wrong [SPECIAL EDITION]](https://cdn.bsky.app/img/feed_thumbnail/plain/did:plc:q4m2hzcs2wtpr4r75dq5a65u/bafkreifsljwrhi3npumsjnyimowggnglfocxnml2howzieurxa5mjb7ml4)