Super basic but watching the sunset by the cross of the martyrs was super nice and the O’Keeffe museum was also great. + you have to get a Frito pie at five & dime!

Lil personal update: I started a new role at Apple this month! 🍏 I'll be doing full-stack work, building tooling for AIML teams. Excited for what's to come :)

LLMs are good at boilerplate thanks to repetition in the training data. What makes Svelte great (imo!) is how little boilerplate there is. I worry there’s an unsolvable tension between tools that feel great to use by hand vs tools that feel great to use with LLMs, the latter being mostly busywork.

Thanks! It's inspired by 0x10c, teenage me couldn't let go of the fact that they never finished the game, so now that I know a little more I'll just make my own version :)

Ended up pushing it here: spesscomputer.nsarrazin.com

It's just a proof of concept at this stage but I'm already amazed it's possible to do this in a browser, Godot is pretty wild. Need to add support for interrupts on the 6502 side of things and uuh *just* make a fun game out of it.

thanks! i'm still thinking about how hard to make it: should players figure out orbital parameters from first principles using stuff like star/horizon sensors, or do I "cheat" a little and directly expose velocity, for example? don't want it to be overwhelming but not too easy either
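To give a feel for the "expose velocity" option: if the craft knows its distance from the body's center and its speed, the vis-viva equation gives the orbit's size directly. This is only a sketch that assumes an idealized two-body problem and Earth's gravitational parameter; the function names are made up for illustration and nothing here reflects how the game actually models physics.

```python
import math

# Assumption for this sketch: Earth's standard gravitational parameter (m^3/s^2).
MU_EARTH = 3.986004418e14


def semi_major_axis(r: float, v: float, mu: float = MU_EARTH) -> float:
    """Semi-major axis from vis-viva: v^2 = mu * (2/r - 1/a).

    r is the distance from the body's center (m), v is the speed (m/s).
    """
    inv_a = 2.0 / r - v * v / mu
    if inv_a <= 0:
        # Speed is at or above escape velocity, so there is no closed orbit.
        raise ValueError("not a bound (elliptical) orbit")
    return 1.0 / inv_a


def orbital_period(a: float, mu: float = MU_EARTH) -> float:
    """Kepler's third law: T = 2 * pi * sqrt(a^3 / mu), in seconds."""
    return 2.0 * math.pi * math.sqrt(a ** 3 / mu)
```

With only star/horizon sensors, players would have to estimate `r` and `v` themselves before they could get to this step, which is where most of the difficulty would come from.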

It's open-source here: github.com/nsarrazin/sp...

It runs entirely in the browser so I'll be publishing it soon, it's absolutely not ready for release but it's been a fun learning experience.

I still need to figure out how to make a fun game out of this, not sure what the gameplay loop could be.

I've been working on a little game project recently, controlling a spacecraft using a 6502 emulator! You can read sensors and actuate the craft by doing read/writes to memory.
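For anyone unfamiliar with the idea, reading sensors and driving actuators through memory is usually called memory-mapped I/O. Here is a minimal sketch of the concept in Python. The addresses, the `Bus` and `Craft` classes, and the register layout are all hypothetical and picked for illustration; the real project does this in a Rust extension and its memory map may look nothing like this.

```python
from typing import Callable, Dict

# Hypothetical register addresses, chosen only for this sketch.
ALTITUDE_LO = 0xD000  # read-only sensor, low byte
ALTITUDE_HI = 0xD001  # read-only sensor, high byte
THROTTLE = 0xD010     # write-only actuator, 0..255


class Bus:
    """64 KiB address space where some addresses hit devices instead of RAM."""

    def __init__(self) -> None:
        self.ram = bytearray(0x10000)
        self.read_hooks: Dict[int, Callable[[], int]] = {}
        self.write_hooks: Dict[int, Callable[[int], None]] = {}

    def read(self, addr: int) -> int:
        addr &= 0xFFFF
        hook = self.read_hooks.get(addr)
        if hook is not None:
            return hook() & 0xFF  # device answers instead of RAM
        return self.ram[addr]

    def write(self, addr: int, value: int) -> None:
        addr &= 0xFFFF
        value &= 0xFF  # the 6502 data bus is 8 bits wide
        hook = self.write_hooks.get(addr)
        if hook is not None:
            hook(value)  # device receives the byte
        else:
            self.ram[addr] = value


class Craft:
    """Toy spacecraft state exposed to the CPU through the bus."""

    def __init__(self) -> None:
        self.altitude_m = 0
        self.throttle = 0.0

    def attach(self, bus: Bus) -> None:
        # A 16-bit altitude is split across two 8-bit registers.
        bus.read_hooks[ALTITUDE_LO] = lambda: self.altitude_m & 0xFF
        bus.read_hooks[ALTITUDE_HI] = lambda: (self.altitude_m >> 8) & 0xFF
        bus.write_hooks[THROTTLE] = self._set_throttle

    def _set_throttle(self, value: int) -> None:
        self.throttle = value / 255
```

From the 6502 program's point of view, an `LDA $D000` would then return the sensor byte and an `STA $D010` would set the throttle, with no special I/O instructions involved.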

It's a Godot project (with a 6502 rust extension) exported to the web with a svelte interface. A lot of firsts for me 😅

Unfortunately the communities best suited to create such feeds are being alienated from the platform 🫠

Yeah I feel like custom feeds could really solve this, seems like there is a lot of untapped potential in building open recommendation systems that are transparent and customizable. Most feeds I've seen are really basic heuristics and miss out on discoverability

Interface of Space Privacy Analyzer app, describing how it reviews Hugging Face Spaces for data privacy concerns, with a pre-loaded example for the Hugging Face demo app for SmolVLM2

A TLDR report generated by the Spaces Privacy app outlining the different types of data used and where they go when using the app

Today in Privacy & AI Tooling - introducing a nifty new tool to examine where data goes in open-source apps on @hf.co 🤗

HF Spaces have tons (100Ks!) of cool demos leveraging or examining AI systems - and because most of them are OSS we can see exactly how they handle user data 📚🔍

1/4 🧵

Today, we share the tech report for SmolVLM: Redefining small and efficient multimodal models.

🔥 Explaining how to create a tiny 256M VLM that uses less than 1GB of RAM and outperforms our 80B models from 18 months ago!

huggingface.co/papers/2504....

Last week, we launched a waitlist to move builders on @hf.co from LFS to Xet. This was made possible through months of hard work and staged migrations to test our infrastructure in real-time.

This post provides an inside look into the day of our first migrations and the weeks after.

Gemma 3 is live 🔥

You can deploy it directly from endpoints with optimally selected hardware and configuration.

Give it a try 👇

🤖 (🤗+🐸 )=✅ I am always stoked to share how the tech world operationalizes ethical values, and I get to do that today. 🥳 @hf.co and JFrog have partnered to certify models for security and safety.

This also moves forward AI *trustworthiness* and provides another form of AI *transparency*. More later!

As someone building for the self-hosting crowd, the main benefit is the assurance that my app will work across all kinds of setups. I'd be open to supporting alternatives but I genuinely don't know any 😅 What would be your ideal distribution method for full-stack apps?

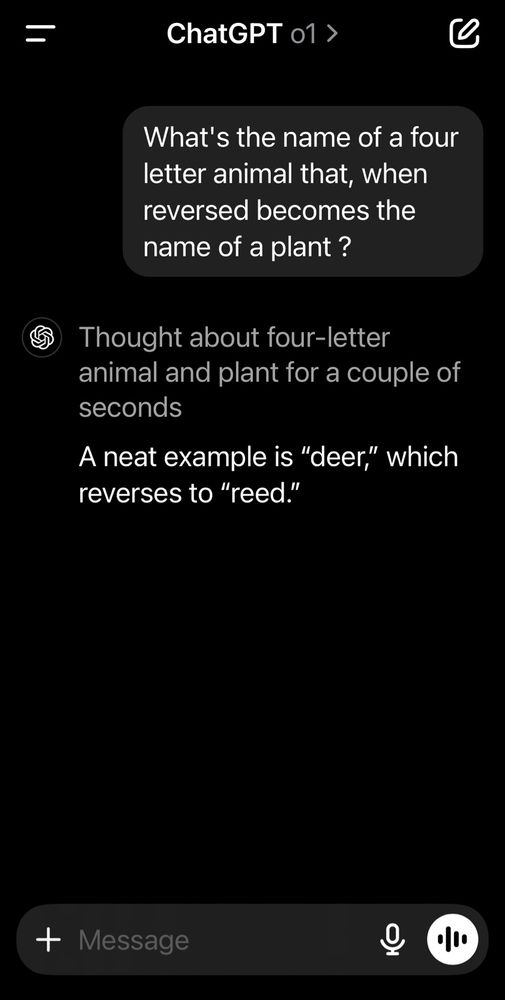

A screenshot of the ChatGPT app, showing a conversation with the o1 model. The user asks: What's the name of a four-letter animal that, when reversed, becomes the name of a plant? And the model answered: A neat example is “deer,” which reverses to “reed.”
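The answer in the screenshot is easy to check mechanically. A small sketch, assuming tiny hand-picked word lists rather than a real dictionary (the lists and function names are made up for illustration):

```python
from typing import Iterable, List, Tuple


def reverses_to(word: str, expected: str) -> bool:
    """True if `word` spelled backwards equals `expected` (case-insensitive)."""
    return word.lower()[::-1] == expected.lower()


# Tiny illustrative word lists (assumptions, not a real dictionary).
ANIMALS = {"deer", "wolf", "bear", "lion", "toad"}
PLANTS = {"reed", "fern", "moss", "rose", "kale"}


def animal_plant_pairs(
    animals: Iterable[str], plants: Iterable[str]
) -> List[Tuple[str, str]]:
    """All four-letter animals whose reversal appears in the plant list."""
    plant_set = {p.lower() for p in plants}
    return sorted(
        (a, a[::-1])
        for a in (w.lower() for w in animals)
        if len(a) == 4 and a[::-1] in plant_set
    )
```

Checking a candidate is trivial in code, but for an LLM it means reasoning about individual letters inside tokens, which is where the difficulty comes from.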

This felt like a good use case for reasoning models (better ability to detect its own mistakes especially around things like letter manipulations which are naturally challenging for LLMs) and indeed:

So mastermind is wordle but only for one possible combination

Remember those .io games which were "multiplayer" but were actually just bots? Everyone could feel like they were winning regardless of their skill level!

Social networks are a zero-sum game for attention, but this will make sure you get replies & likes from fake people to keep you hooked & posting.

Day 17 — until today, intellisense would often fail when you were in the middle of writing components, because Svelte's parser crashed on syntax errors.

We just fixed that. Install svelte@latest, make sure your extensions are up to date, and feel the wind in your hair as you write your components

2025 will be the year of AI for science

Leveraging all the things we've recently learned training AI models for 1000x impact in science

and this will need data!

More details: huggingface.co/blog/lemater...

Thread:

...

Naturally it makes me want to move some of the logic away from my components to .svelte.ts classes...

I'm not sure if that's going to be more maintainable long term: having logic, layout and styling in a single component was a big plus for Svelte imo. I guess I just need to experiment with it more?

This feels like a pretty big deal! Loving this new feature, especially when combined with reactive state in classes. I really like this new Svelte DX

Performance leap: TGI v3 is out. Processes 3x more tokens, 13x faster than vLLM on long prompts. Zero config!

New Llama model just dropped! Evals are looking quite impressive but we'll see how good it is in practice. We're hosting it for free on HuggingChat, feel free to come try it out: hf.co/chat/models/...

Love this!

The Lichess database of games, puzzles, and engine evaluations is now on @hf.co - https://huggingface.co/Lichess. Billions of chess data points to download, query, and stream and we're excited to see what you'll build with it! ♟️ 🤗

Currently it's only available with Qwen's QwQ preview. I'm hopeful we'll have more open reasoning models in the future though!

Try it out here for free: huggingface.co/chat/models/...

Worked on a little UI for displaying the outputs of reasoning models on HuggingChat...

Trying to find the right balance between letting users see the raw model output but also making the final answer easier to parse. QwQ can be *very* verbose so it seemed useful to give a summary at the end.