Reminds me of the SolidGoldMagikarp

this is so cool

it's crazy to me that RoPE's issue with BF16 wasn't noticed earlier.

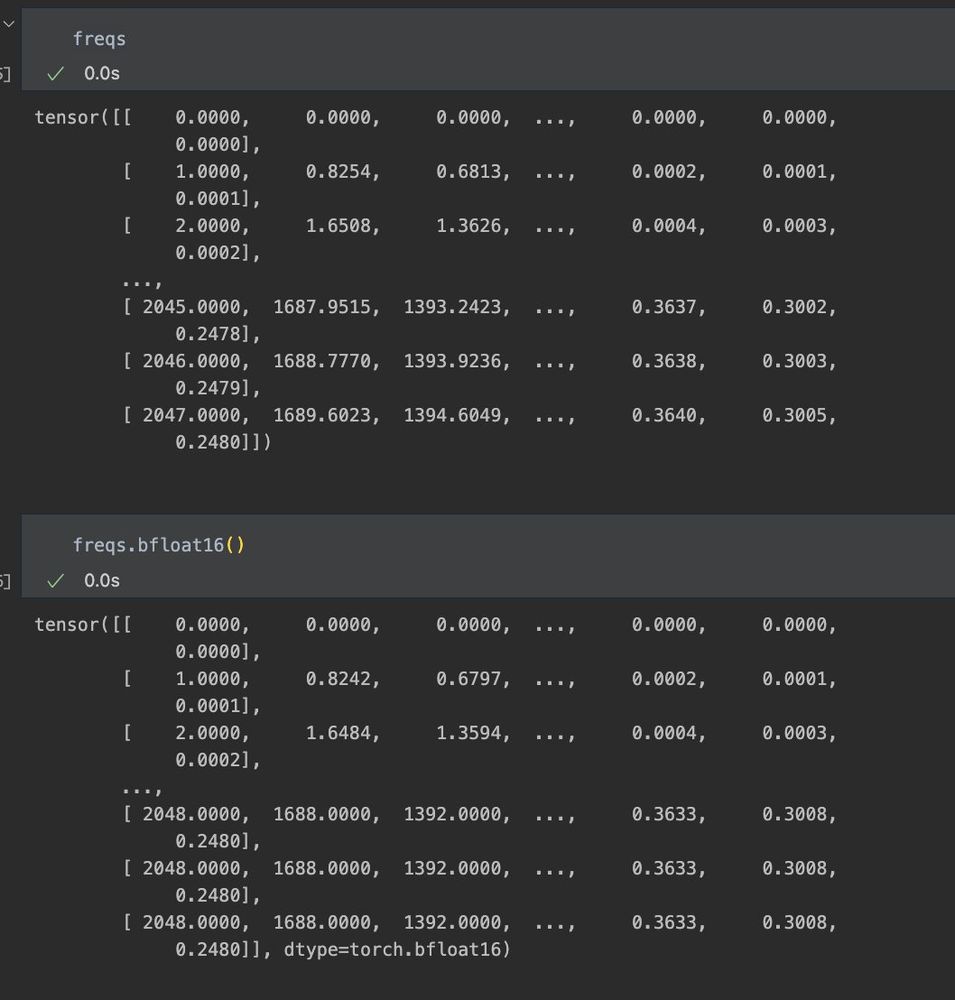

For a reasonable N of 2048, these are the computed frequencies prior to cos(x) & sin(x) for fp32 above and bf16 below.

Given how short the period of simple trig functions is, this difference is catastrophic for large values.
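A rough way to see the scale of the problem without a GPU: the sketch below emulates bf16 rounding in plain Python and looks only at the highest-frequency RoPE pair, assuming its angle is just the position index (inverse frequency of 1). The helper names `to_bf16` and `worst_angle_error` are made up for this illustration, and real kernels add further rounding from the multiply itself.

```python
import math
import struct


def to_bf16(x: float) -> float:
    """Round x to the nearest bfloat16 value (round-to-nearest-even), emulated via float32 bits."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # bf16 keeps the top 16 bits of a float32; add the rounding bias before truncating.
    bits = (bits + 0x7FFF + ((bits >> 16) & 1)) & 0xFFFFFFFF
    bits &= 0xFFFF0000
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def worst_angle_error(seq_len: int = 2048) -> float:
    """Largest |bf16(pos) - pos| over all positions, i.e. the angle error when inv_freq == 1."""
    return max(abs(to_bf16(float(pos)) - pos) for pos in range(seq_len))


if __name__ == "__main__":
    err = worst_angle_error(2048)
    print(f"worst angle error: {err} rad (half a period is {math.pi:.3f} rad)")
    # Compare the rotation actually applied at one late position.
    pos = 2047
    print(math.cos(pos), math.cos(to_bf16(float(pos))))
```

bf16 has only 8 significant bits, so integers above 256 start to collapse onto a coarser grid; by positions 1024 to 2047 the grid spacing is 8, which is wider than a full period of sin/cos.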

It’s an unstoppable force and all I can say is don’t hate the player (especially not the underdog), hate the game. Whether or not HF released this dataset, your data is being used; you may as well also have access to its collection.

This is the big one and I can’t stress this enough. All of your data everywhere is being gathered and used anyway by private actors. The only way to fight back is to play on that same field and democratize it. This anger is way mistargeted.

Aren’t there datasets just like this for twitter and everything else imaginable? Idk why this is suddenly taboo, most people making these datasets also aren’t sharing them publicly

🙄

Just added FSDP2 support for MARS and Muon!

that's what they all say

Awesome list, thanks!

Excellent writeup on GPU streams / CUDA memory

dev-discuss.pytorch.org/t/fsdp-cudac...

TLDR: by default, memory belongs to a single stream. To share it across streams:

- `Tensor.record_stream` -> automatic, but can be suboptimal and nondeterministic

- `Stream.wait_stream` -> manual, but precise control
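A minimal sketch of the two options, assuming PyTorch with a CUDA device and taking the manual route to mean `torch.cuda.Stream.wait_stream`. The function name `share_across_streams` is invented for this example; it is not taken from the linked writeup, and it simply returns `None` when no GPU is present.

```python
import torch


def share_across_streams(use_record_stream: bool):
    """Allocate on a side stream, consume on the current stream, then free safely."""
    if not torch.cuda.is_available():
        return None  # nothing to demonstrate without a GPU

    main = torch.cuda.current_stream()
    side = torch.cuda.Stream()

    with torch.cuda.stream(side):
        # The caching allocator ties this block of memory to `side`.
        buf = torch.ones(1024, device="cuda")

    # Compute ordering is needed either way: main must not read buf too early.
    main.wait_stream(side)
    out = buf * 2  # enqueued on `main`

    if use_record_stream:
        # Automatic: tell the allocator that `main` also used buf, so it delays
        # reusing the block until main's pending work is done. When the block
        # actually becomes reusable depends on the allocator's bookkeeping.
        buf.record_stream(main)
        del buf
    else:
        # Manual: make `side` wait for main's read before dropping the reference,
        # so any later reuse on `side` is ordered after that read.
        side.wait_stream(main)
        del buf

    torch.cuda.synchronize()
    return out.sum().item()


if __name__ == "__main__":
    print(share_across_streams(use_record_stream=True))
    print(share_across_streams(use_record_stream=False))
```

The manual variant costs an explicit sync point, but the moment the memory becomes reusable is fixed by the program rather than by allocator timing.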

Incredible to see what are likely SOTA results coming out of open source with full reproducibility!

Happy to have helped provide the compute for this and hoping to support more awesome research like this!

First, my sincerest thanks to @leonardoai.bsky.social, with the help of @ethansmith2000.com, for generously providing the H100s that supported this research and enabled this release. Y'all rock, thanks so much! <3

Absolutely sick!

New NanoGPT training speed record: 3.28 FineWeb val loss in 4.66 minutes

Previous record: 5.03 minutes

Changelog:

- FlexAttention blocksize warmup

- hyperparameter tweaks

i'm trying to follow as many of my old moots as possible and new people as i find them. some of y'all changing your pfp is just mean spirited (im lazy and learned people's pfps not names)

Greetings xjdr

Untuned SOAP beats tuned AdamW at every single step

Yes @hessianfree.bsky.social can speak more to this

Adam's been tuned but SOAP and PSGD are just using default params, you love to see it.

There’s a void of PSGD hype that needs to be filled here

I goofed and never tested distributed saving, but now it works!

It was a little annoying as both SOAP and PSGD maintain preconds as lists of varying size, which fail to be pickled. To fix this I hardcoded a max of 4 (based on conv layers being 4d tensors).
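The post doesn't show the actual fix, so the following is only one way to read "a max of 4": give every parameter the same fixed set of preconditioner slots and leave the unused ones empty, so the saved state has an identical layout for every parameter. All names here (`MAX_PRECOND_DIMS`, `make_precond_slots`, `active_preconds`) are hypothetical, and nested lists stand in for real tensors.

```python
import pickle

MAX_PRECOND_DIMS = 4  # the cap from the post: conv weights are 4-D tensors


def identity(n):
    """n x n identity as nested lists (stand-in for a real preconditioner tensor)."""
    return [[1.0 if r == c else 0.0 for c in range(n)] for r in range(n)]


def make_precond_slots(shape, max_dims=MAX_PRECOND_DIMS):
    """Return a dict with exactly `max_dims` keys, whatever the parameter's rank."""
    if len(shape) > max_dims:
        raise ValueError(f"parameter has {len(shape)} dims, more than the cap of {max_dims}")
    return {
        f"precond_{d}": identity(shape[d]) if d < len(shape) else None
        for d in range(max_dims)
    }


def active_preconds(slots):
    """Recover the variable-length list the optimizer math works with."""
    return [q for q in slots.values() if q is not None]


if __name__ == "__main__":
    linear = make_precond_slots((3, 2))           # 2 real slots, 2 empty
    conv = make_precond_slots((16, 3, 3, 3))      # all 4 slots used
    restored = pickle.loads(pickle.dumps(linear))
    print(list(restored), len(active_preconds(restored)), len(active_preconds(conv)))
```

The trade-off in this reading is that tensors with more than four dimensions are rejected outright.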

I’ve generally preferred research to software engineering but I am growing to like building the tools used for research

Fancy seeing you here 👋

Self-proclaimed hessianfree guy going back on his word

Haven’t tested, but should be typical FSDP experience.