After iterating... you end up with a new app at your fingertips. What a time to be alive ;)

Step 3: Coded by Jules

Finally, it’s time to build. I set up a repo that contains my spec as a README, along with the screens I want to build, downloaded from Stitch.

Step 2: Designed by Stitch

I use the spec to explore the design of the app. Here is the Stitch project:

stitch.withgoogle.com/projects/272...

Step 1: Starting with the Spec

The spec captures what you are looking to build: its design and implementation. Sometimes I work with an AI to go deep on the definition up front; other times I stay shallower and flesh out the spec as I add more features.

★ Stitching with the new Jules API

The Jules team finished off another ship week with an initial version of their API, allowing you to integrate with your friendly asynchronous squid engineer.

In this post I show how I use Stitch to design a native app that uses the new API...

Not a bad start to a Sunday… with the footy on TV and using Jules to build an iOS app that uses its new API… designed by Stitch.

stitch.withgoogle.com/projects/272...

… Along with mini specs that contain relevant context, I am able to use a variety of models to get higher quality results.

This short post shares some details.

blog.almaer.com/pools-of-ext...

★ Pools of Extraction

I’ve started to notice some patterns in how I work on software projects. I create throwaway projects to learn from, and large repos with a bunch of ideas in them. I sometimes extract targeted repos from them…

And then we have the ability to share public projects.

Being able to share your work to communicate and iterate is key... and we have more coming here too.

I would love to see any projects that you are game to share. Here is one of mine...

stitch.withgoogle.com/projects/180...

✨Stitch Design Variants: A Picture Really Is Worth a Thousand Words?

We have some great Stitch updates for you today, & I posted about a couple.

First, I talk about design variants and how to use the fact that your brain can grok a lot of images very quickly...

blog.almaer.com/stitch-desig...

Excited for you mate. Good peeps joining good peeps!

It has been incredibly fun to see @aerotwist.com, Dimitri, and the team bring this to life.

All of the power of the large variety of models, and you can just spec and ask for what you want. Delightful.

... for example, I often want to take an image and bring it to life with one of the new Google video models, so I ask for that and get a Breadboard flow that results in a running app:

opal.withgoogle.com?flow=drive:/...

I’m super excited about Opal, a new experiment from Google Labs.

It has changed my habits. Instead of asking for something in an AI chat app, for some tasks I create a micro app on the fly…

developers.googleblog.com/en/introduci...

OH “We got too many Product Managers who were good at competitive analysis but not deeply technical, so we ended up with fear-based copycat roadmaps.”

“Without the appropriate error handling, the null pointer caused the binary to crash.”

status.cloud.google.com/incidents/ow...

Cue the Rust crowd…

"no matter who you are, most of the smartest people work for someone else" — Bill Joy

We have used open source as a way to leverage this, and now we are using LLMs with massive sets of knowledge as a form of leverage.

en.wikipedia.org/wiki/Joy%27s...

I use playwright-mcp to drive the browser, but why not go directly to CDP to get full access to networking and performance as well as DOM etc?

Stefan Li wired this up with devtools/mcp! Love it!

autoconfig.io/devtools-mcp...

JVM devs, this one’s for you: Rod Johnson (Spring) just dropped an OSS agent framework.

JVM-native, Kotlin-based, and focused on agentic flows mixing LLM-prompted interactions with code and domain models.

#Java #Kotlin #AINativeDev #News #OSS

Which group will be the AI and which the humans?

One of the most interesting announcements at I/O got just a few seconds of airtime: Gemini Diffusion.

A diffusion-based language model that:

• Generates in parallel, not left-to-right

• Hits ~1500 tokens/sec

• Can self-correct mid-generation

Read all about it here: https://www.ainativedev.co/99j

If there are gaps you want to fill in, this approach could give better results. It also allows for more parallelism, and it doesn’t fall into the trap of getting stuck on a path because of the words already generated.

I’m keen to see how this works for codegen!!
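To make "parallel, not left-to-right" concrete, here is a toy TypeScript sketch of masked-diffusion-style decoding next to plain left-to-right decoding. It is not how Gemini Diffusion is implemented: the `denoise` function is a hypothetical stand-in that already knows the answer and reports made-up confidences. It only shows the control flow, where every masked position gets a proposal at each step and the most confident few are committed together.

```typescript
// Toy illustration only: NOT Gemini Diffusion's real algorithm.
const MASK = "_";

type Proposal = { token: string; confidence: number };

// Hypothetical denoiser: proposes a token for every masked position at once.
// Confidence rises when neighbouring positions are already filled (more
// context), plus a small position-dependent term to break ties deterministically.
function denoise(seq: string[], answer: string[]): (Proposal | null)[] {
  return seq.map((tok, i) => {
    if (tok !== MASK) return null; // already committed
    const left = i > 0 && seq[i - 1] !== MASK ? 1 : 0;
    const right = i < seq.length - 1 && seq[i + 1] !== MASK ? 1 : 0;
    const confidence = 0.5 + 0.2 * (left + right) + ((i * 7) % 5) / 100;
    return { token: answer[i], confidence };
  });
}

// Diffusion-style: each step commits the `perStep` most confident proposals
// in parallel, in whatever order the confidences dictate.
function diffusionDecode(answer: string[], perStep: number): string[][] {
  if (perStep < 1) throw new Error("perStep must be at least 1");
  let seq = answer.map(() => MASK);
  const history: string[][] = [seq.slice()];
  while (seq.includes(MASK)) {
    const ranked = denoise(seq, answer)
      .map((p, i) => ({ p, i }))
      .filter((x): x is { p: Proposal; i: number } => x.p !== null)
      .sort((a, b) => b.p.confidence - a.p.confidence)
      .slice(0, perStep);
    seq = seq.slice();
    for (const { p, i } of ranked) seq[i] = p.token;
    history.push(seq.slice());
  }
  return history;
}

// Autoregressive: exactly one token per step, strictly left to right.
function autoregressiveDecode(answer: string[]): string[][] {
  const seq = answer.map(() => MASK);
  const history: string[][] = [seq.slice()];
  for (let i = 0; i < answer.length; i++) {
    seq[i] = answer[i];
    history.push(seq.slice());
  }
  return history;
}

const answer = ["const", "total", "=", "a", "+", "b"];
for (const step of diffusionDecode(answer, 2)) console.log(step.join(" "));
// _ _ _ _ _ _
// _ _ = _ + _
// _ total = a + _
// const total = a + b
console.log(autoregressiveDecode(answer).length - 1); // 6 steps
```

With two commits per step, the six tokens land in three steps and the middle of the line fills in first. The autoregressive version needs six steps and can never revisit the order.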

And today we see the zag. We have another tool in the toolbox: diffusion models for text. This isn’t new, and has been talked about for some time. Unlike next-token prediction, diffusion by its nature is a good fit for generating text in a non-sequential manner…

Gemini Diffusion!

I love a good zig and zag. We used to be in a world where image generation used diffusion techniques (from noise), and text used autoregression (next token). ChatGPT added 4o image generation that went the other way and used autoregression …

en.wikipedia.org/wiki/OpenAI_...

Or wait no, it’s a CLI, oh wait no it’s a remote agent platform… and a new model family…

AI tools multiply, and so do config files.

“This has all given birth to a plethora of rules files that we need to manage.”

Vibe-rules CLI discovers and syncs them so you can code, not babysit .yaml.

Details ➜ www.ainativedev.co/nbp

#AINativeDev #DevTools #AIDevelopment #CLI

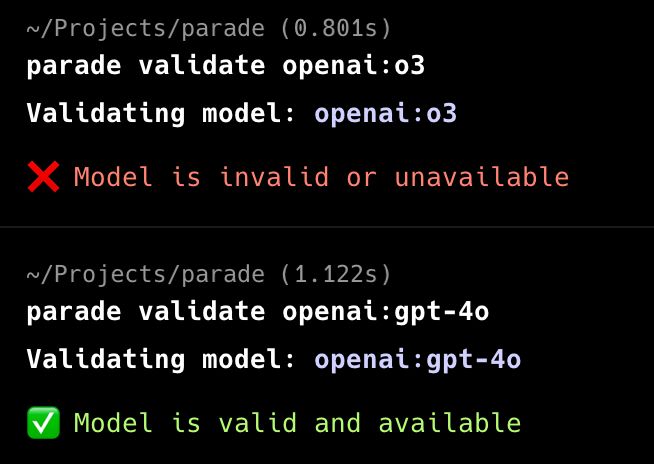

% parade list openai

I've been enjoying building simple tools by writing a spec and having them generated.

`parade` uses Ink and Vercel's AI SDK to let me explore model names from various providers (because I am using the openai:gpt-4o type URI format in other tools).
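An `openai:gpt-4o` style identifier splits into a provider and a model name. Below is a minimal TypeScript sketch, not parade's actual code, of parsing and grouping such identifiers. It assumes the provider is everything before the first colon, so model names may themselves contain colons. `ModelRef`, `parseModelRef`, and `groupByProvider` are hypothetical names.

```typescript
// Hypothetical sketch: parse "provider:model" identifiers such as "openai:gpt-4o".
// Assumption: split on the FIRST colon only, so "ollama:llama3:8b" keeps
// "llama3:8b" as the model name.
interface ModelRef {
  provider: string;
  model: string;
}

function parseModelRef(uri: string): ModelRef {
  const idx = uri.indexOf(":");
  const provider = idx >= 0 ? uri.slice(0, idx).trim().toLowerCase() : "";
  const model = idx >= 0 ? uri.slice(idx + 1).trim() : "";
  if (!provider || !model) {
    throw new Error(`Expected "provider:model", got "${uri}"`);
  }
  return { provider, model };
}

// Hypothetical helper: group model names by provider, roughly what a
// "list openai" style command would need to filter on.
function groupByProvider(uris: string[]): Map<string, string[]> {
  const groups = new Map<string, string[]>();
  for (const uri of uris) {
    const { provider, model } = parseModelRef(uri);
    const models = groups.get(provider) ?? [];
    models.push(model);
    groups.set(provider, models);
  }
  return groups;
}

const grouped = groupByProvider(["openai:gpt-4o", "ollama:llama3:8b", "openai:gpt-4o-mini"]);
console.log(grouped.get("openai")); // [ 'gpt-4o', 'gpt-4o-mini' ]
```

Splitting on the first colon rather than the last is the design choice that matters here, because some providers use colons inside their own model tags.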

github.com/dalmaer/parade

It’s a sin that this happened to Adam. A true world-class frontend engineer and a world-class human. I remember being so excited when we first spoke about him joining Chrome and then talking about VisBug. And he’s gone from strength to strength. What an epic own goal.