Love the name as well

I barely post here anymore. Engagement is so low for anything but the biggest channels that it barely exists.

Alignment is a two-way street

Every now and then I read posts claiming 90% of coding will be done by AI this year, and I can't lie, it makes me a bit nervous. Then a study like this drops and I can relax a bit

You’ve probably heard about how AI/LLMs can solve Math Olympiad problems (deepmind.google/discover/blo...).

So naturally, some people put it to the test — hours after the 2025 US Math Olympiad problems were released.

The result: They all sucked!

I think this is a big part of it. Developers talk about their experience with GenAI coding, but we come from way different places. I do a lot of solo prototyping for work, and a lot of my code is a conversation starter. At this stage LLMs make you 2-3 times faster, but in later stages it's maybe 20-30%

bsky.app/profile/bram...

There are plenty of cases where survivorship bias doesn’t apply. We just don’t remember them.

Housekeeper: "I no longer think people should clean their own apartments"

Uber Driver: "I no longer think people should own their own car"

Do you think vibe coding is a gradual or fundamental change in technology?

I'm 179.5 cm, the metric equivalent. It's the most trustworthy length, no one would lie about it.

An error with a 200 status code is like gift-wrapping a turd
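For what it's worth, here is a minimal sketch of the alternative. It assumes a hypothetical `make_response` helper that returns a `(status, body)` pair; it is not any particular framework's API, and picking 400 for every failure is a simplification for illustration.

```python
import json
from http import HTTPStatus


def make_response(result=None, error=None):
    """Hypothetical helper: build a (status_code, body) pair.

    Failures get a non-2xx status so clients and monitoring can see them
    without parsing the body. Using 400 for every error is an assumption
    made to keep the sketch short.
    """
    if error is not None:
        return HTTPStatus.BAD_REQUEST.value, json.dumps({"error": error})
    return HTTPStatus.OK.value, json.dumps({"data": result})


# The status line now agrees with the payload.
status, body = make_response(error="missing field: name")
print(status, body)
```

The point is only that the status code and the body tell the same story; a real service would map different failures to different codes.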

Have mixed feelings as well. On the one hand, debugging while vibe coding is super frustrating. On the other hand, it has been amazing for throwaway demos and prototypes. I can aim for things I wouldn't normally have the time for.

Excel is one of the safest messaging technologies out there. No message leaves your device and no risk of the other person leaking information.

An infinite number of monkeys with an LLM can write the complete works of Shakespeare

My doctor told me he's into vibe surgeries lately

I like them, they're a good heuristic to not read any of the text around them

I think the reason LLMs are overconfident is that we keep telling them "You are an expert in" literally anything

"Why flake8? I use Ruff"

I wrote my bachelor thesis on paradoxes in self-reference and I did that. Felt obligated to.

Electron apps are the Monkey's Paw to someone wishing for cheaper RAM

Anyone know best practices / tips for improving LLM quality on classifying long texts? For shorter inputs, few-shot learning and finding the best examples work well, but this is not very practical with longer texts. #databs

We'll have AI alignment before MS Word alignment

An agent is an LLM that uses tools.

A tool is someone who keeps saying '2025 is the year of the agents'

You said 'use a mixture of experts' so I invited every stakeholder in the company to the meeting

If I ask ChatGPT for some advanced text processing tasks, it will recommend contrived string manipulation instead of calling an LLM. If I then ask it to use GPT, it will use an earlier major release of the openai library

Today was a day where all emails found me well

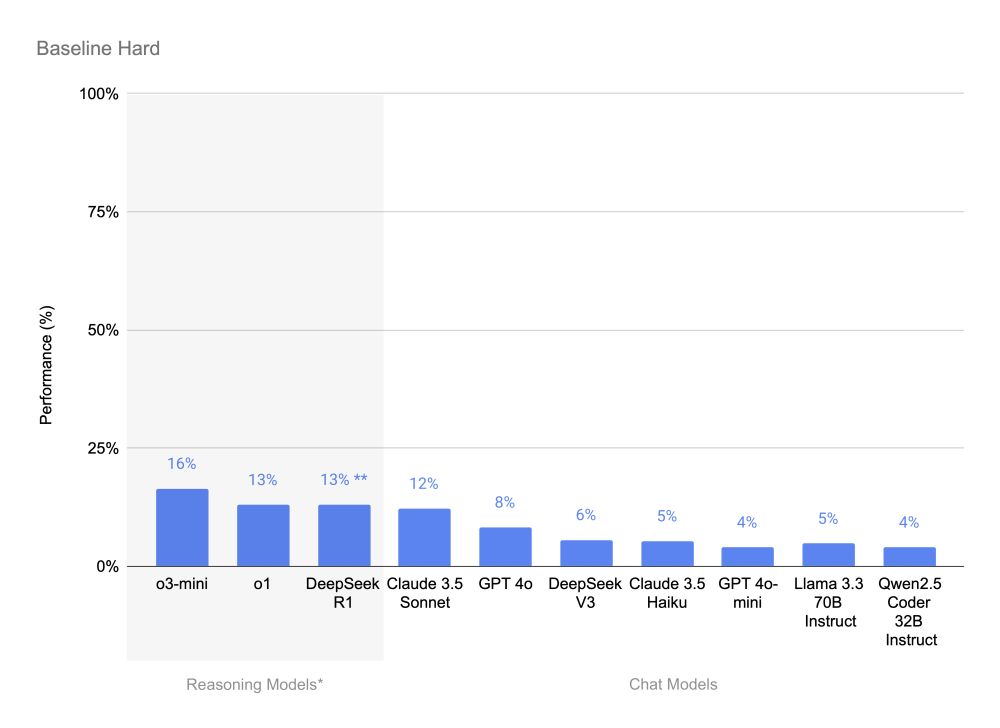

Interesting benchmark from Adyen on multi-step reasoning. New benchmarks are great for establishing a baseline for historic models; all new models should be treated with suspicion. #databs

huggingface.co/blog/dabstep

That's fine, just run it several times and in parallel. No downsides

Really want to like Cursor, but I am completely PyCharm-brained it seems. Anyone else have the same problem? Which copilot did you go for?